Fifty-one percent of enterprises now run AI agents in production, yet only one in five has a governance model mature enough to support them. That gap between deployment velocity and operational readiness is the defining challenge of the current generation of agent tooling. Teams ship agents that work in demos, then discover that production is a fundamentally different environment: one where context windows overflow, tool calls fail silently, and a single hallucinated action can cascade through downstream systems.

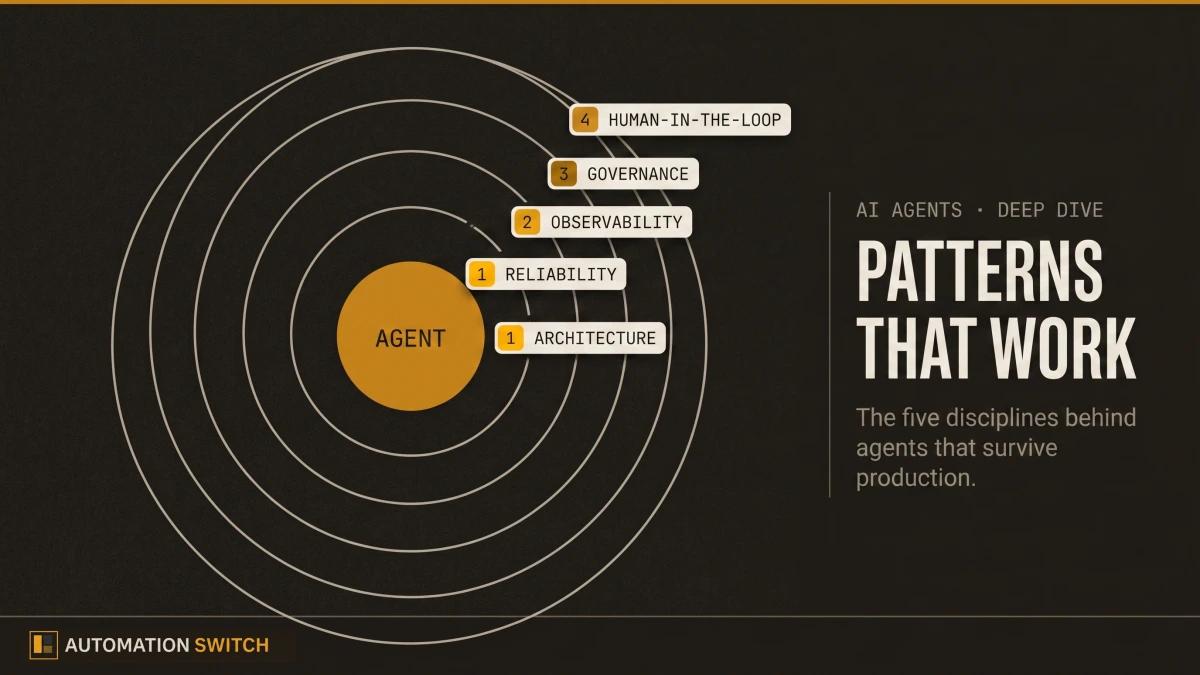

This article covers what works. Drawing on research from Anthropic, Stanford, Google Cloud, and Microsoft, alongside real production incidents, it maps the architectural patterns, reliability strategies, observability practices, and governance frameworks that separate agents that survive production from those that collapse under it.


1. Start Simple: Anthropic's Complexity Ladder

The strongest signal from Anthropic's research on building effective agents is a warning against premature complexity. Their field data shows that the most successful production deployments use simple, composable patterns and reach for multi-agent orchestration only when a single agent demonstrably hits its limits. Anthropic identifies six agentic patterns arranged on a complexity ladder, and their guidance is unambiguous: start at the bottom.

The distinction between workflows and agents matters for production planning. Workflows are systems where LLMs and tools follow predefined code paths. Agents are systems where LLMs dynamically direct their own processes and tool usage. Workflows offer predictability; agents offer adaptability. Most production systems benefit from workflows, with agent-level autonomy reserved for tasks that genuinely require dynamic decision-making.

Pattern 1: Augmented LLM

The foundation of every agentic system. A single LLM enhanced with retrieval, tool access, and memory. Most production use cases, including summarization, classification, and structured extraction, are fully served at this level. Adding complexity beyond it should require a demonstrated need.

Pattern 2: Prompt Chaining

A sequence of LLM calls where each step processes the output of the previous one, with optional validation gates between steps. Ideal for tasks that decompose naturally into fixed sequences: generate, then review, then format. Each step can be tested and debugged independently.
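As a minimal sketch of the chain described above, assuming each step is a plain function standing in for an LLM call: the names `run_chain`, `GateFailed`, and the three stub steps are hypothetical and not part of any framework.

```python
from typing import Callable, List, Optional, Tuple

# A step is (name, transform, optional validation gate). Hypothetical shape.
Step = Tuple[str, Callable[[str], str], Optional[Callable[[str], bool]]]


class GateFailed(Exception):
    """Raised when a validation gate rejects a step's output."""


def run_chain(steps: List[Step], initial_input: str) -> str:
    """Feed each step the previous step's output, checking gates in between."""
    current = initial_input
    for name, transform, gate in steps:
        current = transform(current)
        if gate is not None and not gate(current):
            raise GateFailed(f"step '{name}' produced output that failed validation")
    return current


# Stand-ins for LLM calls so the sketch runs without a model.
def generate(text: str) -> str:
    return f"draft: {text}"


def review(text: str) -> str:
    return text.replace("draft", "reviewed")


def format_output(text: str) -> str:
    return text.upper()


chain: List[Step] = [
    ("generate", generate, lambda out: len(out) > 0),
    ("review", review, lambda out: out.startswith("reviewed")),
    ("format", format_output, None),
]
result = run_chain(chain, "quarterly summary")
print(result)  # REVIEWED: QUARTERLY SUMMARY
```

Because every step is an ordinary function, each one can be unit-tested on its own, and a failing gate stops the chain before a bad intermediate result reaches the next step.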

Pattern 3: Routing

A classifier directs inputs to specialized handlers based on type or intent. Customer support systems use this pattern to route billing questions to a billing agent and technical questions to a support agent. The router itself is typically a lightweight LLM call or a traditional classifier.
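A routing layer can be sketched in a few lines. The keyword classifier below is only a placeholder for the lightweight LLM call or trained classifier mentioned above, and the handler names and labels are assumptions made for illustration.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def keyword_classifier(message: str) -> str:
    """Placeholder for a lightweight LLM call or a traditional classifier."""
    text = message.lower()
    if any(word in text for word in ("invoice", "refund", "charge")):
        return "billing"
    if any(word in text for word in ("error", "crash", "bug")):
        return "technical"
    return "general"


def route(message: str,
          classify: Callable[[str], str],
          handlers: Dict[str, Handler],
          fallback: Handler) -> str:
    """Dispatch to the handler for the predicted label, or to the fallback."""
    label = classify(message)
    return handlers.get(label, fallback)(message)


# Hypothetical handlers; in practice each would wrap a specialized agent.
handlers: Dict[str, Handler] = {
    "billing": lambda m: f"billing agent: {m}",
    "technical": lambda m: f"support agent: {m}",
}


def human_queue(message: str) -> str:
    return f"human queue: {message}"


print(route("I was charged twice", keyword_classifier, handlers, human_queue))
```

An explicit fallback matters here: a label the router has no handler for should land somewhere visible rather than fail silently.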

Pattern 4: Parallelization

Multiple LLM calls run simultaneously, either processing different inputs (sectioning) or generating independent outputs that are aggregated (voting). Useful for tasks where latency matters and subtasks are independent: analyzing multiple documents, generating candidate responses for selection, or running parallel evaluation criteria.
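Both variants can be sketched with the standard library's thread pool, assuming the LLM calls are blocking functions that spend most of their time waiting on the network. The function names `section` and `vote` are illustrative, and the lambdas stand in for real model calls.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def section(call: Callable[[str], str], inputs: List[str], max_workers: int = 4) -> List[str]:
    """Sectioning: run the same call over different inputs concurrently."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(call, inputs))  # map preserves input order


def vote(calls: List[Callable[[str], str]], prompt: str) -> str:
    """Voting: run independent calls on one prompt and return the most common answer."""
    with ThreadPoolExecutor(max_workers=len(calls)) as pool:
        answers = list(pool.map(lambda call: call(prompt), calls))
    return Counter(answers).most_common(1)[0][0]


# Stand-ins for LLM calls.
summaries = section(lambda doc: doc[:10], ["first document text", "second document text"])
verdict = vote([lambda p: "safe", lambda p: "safe", lambda p: "unsafe"], "Is this content safe?")
print(summaries, verdict)
```

Majority voting is only one possible aggregation; a production system might instead pass all candidates to a separate selection step.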

Pattern 5: Orchestrator-Workers

A central LLM dynamically breaks tasks into subtasks and delegates them to worker LLMs. This is the first pattern where the controlling LLM makes runtime decisions about task decomposition, making it genuinely agentic. Appropriate for complex tasks where the subtask structure varies by input, such as multi-file code refactoring or research synthesis across diverse source types.

Pattern 6: Evaluator-Optimizer

One LLM generates output while another evaluates it against defined criteria, creating a feedback loop that iterates until quality thresholds are met. Effective when clear evaluation criteria exist and iterative refinement produces measurably better results: code generation with test validation, translation with quality scoring, or content generation with style compliance checks.
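The feedback loop can be sketched as follows, assuming the evaluator returns a numeric score plus textual feedback. The `refine` function, the 0.8 threshold, and the round cap are illustrative choices rather than values from the research.

```python
from typing import Callable, Tuple


def refine(generate: Callable[[str, str], str],
           evaluate: Callable[[str], Tuple[float, str]],
           task: str,
           threshold: float = 0.8,
           max_rounds: int = 3) -> Tuple[str, float, int]:
    """Iterate generate -> evaluate until the score meets the threshold or rounds run out.

    Returns (best output, its score, rounds used).
    """
    feedback = ""
    best_output, best_score = "", float("-inf")
    for round_number in range(1, max_rounds + 1):
        output = generate(task, feedback)
        score, feedback = evaluate(output)
        if score > best_score:
            best_output, best_score = output, score
        if score >= threshold:
            return best_output, best_score, round_number
    return best_output, best_score, max_rounds


# Stand-ins for the generator and evaluator LLMs.
def stub_generate(task: str, feedback: str) -> str:
    return f"{task} with tests" if "tests" in feedback else task


def stub_evaluate(output: str) -> Tuple[float, str]:
    return (1.0, "") if "tests" in output else (0.4, "add tests")


print(refine(stub_generate, stub_evaluate, "code"))  # ('code with tests', 1.0, 2)
```

The round cap is what keeps this pattern from turning into an unbounded loop when the evaluator is never satisfied.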

The most successful implementations use simple, composable patterns rather than complex frameworks. Consider starting with the simplest solution possible and only increasing complexity when needed.

| Criteria | Augmented LLM | Prompt Chaining | Routing | Parallelization | Orchestrator-Workers | Evaluator-Optimizer |
|---|---|---|---|---|---|---|
| Complexity | Lowest | Low | Medium | Medium | High | Highest |
| Autonomy | None | None | Minimal | Minimal | High | High |
| Predictability | Highest | High | High | High | Medium | Medium |
| Best for | Single-step tasks | Fixed sequences | Intent-based dispatch | Independent subtasks | Dynamic decomposition | Iterative refinement |
| Production risk | Lowest | Low | Low | Medium | High | High |

For scored reviews of the frameworks that implement these patterns, browse the Agent Frameworks Directory.

2. Context Engineering: The Production Edge

Anthropic's context engineering research reframes prompt engineering as a systems problem. In production, the quality of an agent's output depends less on how cleverly you phrase a prompt and more on what ends up in the context window. Context engineering is the discipline of building dynamic systems that give the model the right information, in the right format, at the right time.

Tool Design Principles

Tools are the primary interface between an agent and external systems. Their design directly affects reliability, latency, and token efficiency:

- Format tool descriptions to match how the model will invoke them. Descriptions should include parameter types, expected return shapes, and failure modes.

- Plan for the model to make mistakes. Build validation into tool inputs and outputs. Return structured errors that the model can reason about.

- Minimize the surface area. Each tool should do one thing well. A "search and summarize" tool should be two tools: one for search, one for summarization.

- Test tools empirically. Run tool descriptions through the model and verify that it selects and parameterizes them correctly across diverse inputs.
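The second principle, planning for model mistakes, can be sketched as a thin validation layer in front of every tool. The registry layout, the `call_tool` function, and the error codes below are assumptions made for illustration, not a specific framework's API.

```python
from typing import Any, Dict

# Hypothetical registry: tool name -> expected parameter types and implementation.
TOOLS: Dict[str, Dict[str, Any]] = {
    "search": {
        "params": {"query": str, "limit": int},
        "fn": lambda query, limit: [f"result for {query}"][:limit],
    },
}


def call_tool(name: str, args: Dict[str, Any]) -> Dict[str, Any]:
    """Validate a model-proposed call, then run it. Always returns a structured dict."""
    tool = TOOLS.get(name)
    if tool is None:
        return {"ok": False, "error": "unknown_tool",
                "detail": f"'{name}' is not registered", "available": sorted(TOOLS)}

    expected = tool["params"]
    missing = sorted(set(expected) - set(args))
    unexpected = sorted(set(args) - set(expected))
    wrong_type = sorted(k for k in expected
                        if k in args and not isinstance(args[k], expected[k]))
    if missing or unexpected or wrong_type:
        return {"ok": False, "error": "invalid_arguments",
                "missing": missing, "unexpected": unexpected, "wrong_type": wrong_type}

    try:
        return {"ok": True, "result": tool["fn"](**args)}
    except Exception as exc:  # surface tool failures as data, not a crash
        return {"ok": False, "error": "tool_failure", "detail": str(exc)}


print(call_tool("search", {"query": "refund policy", "limit": "5"}))
```

Returning the list of available tools and the offending parameters gives the model something concrete to correct on its next attempt, instead of an opaque exception.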

Managing the Context Window

Context window overflow is one of the most common production failure modes. Strategies that work:

- Summarize early and often. Replace verbose intermediate results with concise summaries before they accumulate.

- Use retrieval selectively. Pull only the most relevant documents into context rather than flooding the window with everything that might be useful.

- Implement sliding windows. For long-running tasks, maintain a fixed-size recent history and archive older context to retrieval storage.

- Set hard token budgets. Define maximum token allocations for each section of context (system prompt, tool results, conversation history) and enforce them programmatically.
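A sliding window with a hard budget might look like the sketch below. It assumes a crude word-count stand-in for tokenization (a real system would use the model's own tokenizer), and the function names are hypothetical.

```python
from typing import List, Tuple


def count_tokens(text: str) -> int:
    """Crude stand-in: whitespace-separated words. Swap in the model's tokenizer."""
    return len(text.split())


def fit_history(messages: List[str], budget: int) -> Tuple[List[str], List[str]]:
    """Keep the most recent messages that fit the budget.

    Returns (recent, archived); archived messages would go to retrieval storage.
    """
    kept: List[str] = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    recent = list(reversed(kept))
    archived = messages[: len(messages) - len(recent)]
    return recent, archived


def truncate(text: str, budget: int) -> str:
    """Enforce a hard budget on a single context section."""
    return " ".join(text.split()[:budget])


recent, archived = fit_history(["a b c", "d e", "f g h i"], budget=6)
print(recent, archived)  # ['d e', 'f g h i'] ['a b c']
```

The same `truncate` helper can be applied per section (system prompt, tool results, history) so that no single section crowds out the others.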

Multi-Agent Context Isolation

When multiple agents collaborate, context isolation prevents cross-contamination. Each agent should operate with its own context window, receiving only the information relevant to its task. The orchestrator manages information flow between agents, filtering and summarizing outputs before passing them downstream. This pattern reduces hallucination risk and makes individual agent behavior more predictable and testable.

3. Designing for Resilience

Per-step failures compound across a pipeline: end-to-end reliability is the product of the per-step success rates, assuming steps fail independently. A pipeline where each step succeeds 99% of the time delivers only 90.4% end-to-end reliability at ten steps. At 95% per step, the same pipeline drops to 59.9%. This arithmetic makes resilience engineering the difference between a demo and a product.
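The figures above follow from multiplying per-step success rates, under the simplifying assumption that every step has the same success rate and fails independently of the others. A short check:

```python
def end_to_end_reliability(per_step_success: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent, identical steps."""
    return per_step_success ** steps


for rate in (0.99, 0.95):
    print(f"{rate:.0%} per step over 10 steps -> {end_to_end_reliability(rate, 10):.1%}")
# 99% per step over 10 steps -> 90.4%
# 95% per step over 10 steps -> 59.9%
```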

Six Breakage Patterns

Production agents fail in predictable ways. Understanding these patterns lets teams design defenses before they encounter them:

Infinite loops. An agent retries a failing action indefinitely, burning tokens and compute. Defense: implement iteration caps, timeout gates, and cost circuit breakers.

Context window overflow. Accumulated tool outputs and conversation history exceed the model's context window, causing truncation or degraded reasoning. Defense: summarize intermediate results, use sliding windows, and enforce token budgets per context section.

Tool call cascades. A single tool failure triggers a chain of compensating actions that compound the error. Defense: validate tool outputs before acting on them, implement rollback mechanisms, and use dead-letter queues for unrecoverable failures.

Hallucinated tool names. The model invents tool names or parameters that do not exist. Defense: validate all tool calls against the registered tool schema before execution. Return structured error messages when validation fails.

Ambiguity amplification. Vague user inputs produce vague plans that generate vague tool calls. Each step amplifies the original ambiguity. Defense: add clarification gates early in the pipeline. Ask the user before executing uncertain plans.

State drift. Long-running agents gradually lose track of their original objective, pursuing subtasks that diverge from the goal. Defense: periodically re-anchor the agent to the original objective. Compare current actions against the stated goal at fixed intervals.
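The first defense, caps on iterations, time, and cost, can be sketched as a small guard object checked once per agent step. The class name `RunGuard`, its default limits, and the `run_agent` loop are illustrative assumptions.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Tuple


class BudgetExceeded(Exception):
    """Raised when a run crosses one of its hard limits."""


@dataclass
class RunGuard:
    max_steps: int = 20
    max_tokens: int = 50_000
    max_seconds: float = 120.0
    steps: int = 0
    tokens: int = 0
    started_at: float = field(default_factory=time.monotonic)

    def check(self, tokens_used: int) -> None:
        """Record one step and raise if any limit is now exceeded."""
        self.steps += 1
        self.tokens += tokens_used
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} exceeded")
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit of {self.max_tokens} exceeded")
        if time.monotonic() - self.started_at > self.max_seconds:
            raise BudgetExceeded(f"time limit of {self.max_seconds}s exceeded")


def run_agent(step: Callable[[], Tuple[bool, int]], guard: RunGuard) -> str:
    """Loop until the step reports done or a limit trips. `step` returns (done, tokens_used)."""
    try:
        while True:
            done, tokens_used = step()
            guard.check(tokens_used)
            if done:
                return "completed"
    except BudgetExceeded as exc:
        return f"halted: {exc}"


# A step that never finishes, standing in for an agent stuck in a retry loop.
print(run_agent(lambda: (False, 100), RunGuard(max_steps=5)))
# halted: step limit of 5 exceeded
```

The guard only stops the loop; what happens next (escalation, rollback, a dead-letter queue) is a separate decision that depends on the workflow.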

Real incidents illustrate these patterns at scale. Google's Antigravity agent deleted production Cloud Run services in an infinite retry loop. Replit's coding agent accumulated technical debt faster than it resolved it, creating self-referential fix cycles. Amazon Kiro's spec-driven agent generated 2,800 lines of specification for a feature that required 400 lines of code, then struggled to implement its own plan. Each incident maps to one or more of these six patterns.

4. Observability: Seeing Inside the Agent

Traditional application monitoring tracks request latency, error rates, and throughput. Agent observability must also track reasoning quality, tool call effectiveness, and task completion accuracy. The five pillars below cover these dimensions, along with cost and behavioral drift.

Five Pillars of Agent Observability

- Reasoning traces: capture the full chain of thought, action, and observation at each step. This is the agent equivalent of a stack trace: when something goes wrong, the reasoning trace tells you why the agent made the decision it made.

- Tool call analytics: track success rates, latency, and error distributions per tool. Identify which tools fail most often, which are called unnecessarily, and which return results the model consistently misinterprets.

- Token consumption: monitor token usage per task, per step, and per agent. Set alerts for anomalous consumption that indicates infinite loops or context window bloat.

- Task completion metrics: measure whether agents actually complete their assigned tasks, how many steps they take, and how often they require human intervention. Track completion rates over time to catch regression.

- Drift detection: compare agent behavior across runs to detect when output quality degrades, reasoning patterns shift, or tool usage changes. Model updates, prompt changes, and data drift can all cause silent regression.

OpenTelemetry for Agents

OpenTelemetry provides the instrumentation backbone for agent observability. The semantic conventions for generative AI define standard span attributes for LLM calls, tool invocations, and agent lifecycle events. Tracing frameworks like Phoenix, LangSmith, and Arize integrate with OpenTelemetry to provide agent-specific visualization and analysis. The key principle: instrument at the operation level (each LLM call, each tool invocation), then aggregate at the task level.

Metrics That Matter

Four metrics separate production-grade agent monitoring from basic logging:

- Task completion rate: percentage of assigned tasks completed without human intervention.

- Step efficiency: ratio of steps taken to minimum steps required. High ratios indicate reasoning inefficiency.

- Tool call success rate: percentage of tool invocations that return valid results. Low rates indicate tool design problems or model-tool misalignment.

- Time to completion: end-to-end latency from task assignment to task completion. Track percentiles, not averages, to catch tail latency from retry loops.
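The four metrics can be computed from per-task records along the lines of the sketch below. The `TaskRecord` fields are assumptions about what a tracing layer would capture; in particular, `min_steps` presumes a known baseline for each task type.

```python
from dataclasses import dataclass
from statistics import quantiles
from typing import Dict, List


@dataclass
class TaskRecord:
    completed: bool
    human_intervened: bool
    steps_taken: int
    min_steps: int        # assumed known baseline for this task type
    tool_calls: int
    tool_successes: int
    seconds: float


def summarize(records: List[TaskRecord]) -> Dict[str, float]:
    """Aggregate task-level metrics; needs at least two records for percentiles."""
    autonomous = [r for r in records if r.completed and not r.human_intervened]
    total_calls = sum(r.tool_calls for r in records)
    # 99 cut points: index 49 is the median, index 94 the 95th percentile.
    cuts = quantiles([r.seconds for r in records], n=100, method="inclusive")
    return {
        "task_completion_rate": len(autonomous) / len(records),
        "step_efficiency": sum(r.steps_taken for r in records)
                           / sum(r.min_steps for r in records),
        "tool_call_success_rate": (sum(r.tool_successes for r in records) / total_calls
                                   if total_calls else float("nan")),
        "p50_seconds": cuts[49],
        "p95_seconds": cuts[94],
    }


records = [
    TaskRecord(True, False, 4, 4, 3, 3, 1.0),
    TaskRecord(True, False, 6, 4, 4, 3, 2.0),
    TaskRecord(True, True, 5, 5, 2, 2, 3.0),
    TaskRecord(False, False, 10, 5, 6, 3, 4.0),
    TaskRecord(True, False, 2, 2, 5, 5, 5.0),
]
print(summarize(records))
```

Reporting the median next to the 95th percentile makes retry-loop tail latency visible in a way that a single average would hide.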

5. Governance: Four Dimensions

Agent governance extends traditional AI governance into four dimensions that reflect the unique characteristics of autonomous systems.

Dimension 1: Identity and Access

Every agent requires a verifiable identity, scoped permissions, and audit-ready credential management. Agents should authenticate with the same rigor as human users: unique service accounts, least-privilege access policies, and credential rotation. The question "who authorized this agent to take this action" must always have a clear, auditable answer.

Dimension 2: Behavioral Boundaries

Define what agents can and cannot do through explicit contracts: allowed tools, permitted actions per tool, maximum token budgets, timeout limits, and escalation triggers. Contracts should be declarative (expressed in configuration, validated at runtime) rather than implicit (embedded in prompts, enforced by hope).
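A declarative contract can be as simple as a data object plus one runtime check. The sketch below is one possible shape, not a standard schema: the `AgentContract` fields, the `authorize` function, and the `tool.action` naming convention are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class AgentContract:
    agent_id: str
    allowed_actions: Dict[str, FrozenSet[str]]   # tool -> permitted actions
    max_tokens: int
    timeout_seconds: float
    escalate_on: FrozenSet[str] = frozenset()    # "tool.action" pairs that need a human


def authorize(contract: AgentContract, tool: str, action: str,
              tokens_so_far: int, elapsed_seconds: float) -> Tuple[str, str]:
    """Return (decision, reason), where decision is 'allow', 'escalate', or 'deny'."""
    if tool not in contract.allowed_actions:
        return "deny", f"tool '{tool}' is not in the contract"
    if action not in contract.allowed_actions[tool]:
        return "deny", f"action '{action}' is not permitted on '{tool}'"
    if tokens_so_far > contract.max_tokens:
        return "deny", "token budget exhausted"
    if elapsed_seconds > contract.timeout_seconds:
        return "deny", "timeout exceeded"
    if f"{tool}.{action}" in contract.escalate_on:
        return "escalate", "action requires human approval"
    return "allow", "within contract"


# Hypothetical contract for a refund-processing agent.
contract = AgentContract(
    agent_id="refund-bot",
    allowed_actions={"payments": frozenset({"read", "refund"})},
    max_tokens=10_000,
    timeout_seconds=60.0,
    escalate_on=frozenset({"payments.refund"}),
)
print(authorize(contract, "payments", "refund", tokens_so_far=500, elapsed_seconds=2.0))
# ('escalate', 'action requires human approval')
```

Because the contract is data, it can live in version-controlled configuration and be reviewed like any other policy change, while the check runs outside the prompt where the model cannot talk its way around it.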

Dimension 3: Audit and Compliance

Every agent action must produce an immutable audit trail: what was requested, what the agent planned, what it executed, what the outcome was, and who (or what) approved it. Audit trails serve two purposes: incident investigation (what went wrong) and compliance verification (can we prove the agent operated within its boundaries).
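One common way to make an audit trail tamper-evident is to chain entries by hash, so that altering any past entry invalidates every hash after it. The sketch below illustrates that technique under stated assumptions: the field names mirror the five items above, the function names are hypothetical, and real immutability would additionally need write-once storage.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(body: Dict[str, Any]) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], *, requested: str, planned: str,
                 executed: str, outcome: str, approved_by: str) -> Dict[str, Any]:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    body = {
        "requested": requested, "planned": planned, "executed": executed,
        "outcome": outcome, "approved_by": approved_by,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: List[Dict[str, Any]]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log: List[Dict[str, Any]] = []
append_entry(log, requested="refund order 123", planned="call payments.refund",
             executed="payments.refund", outcome="success", approved_by="policy:auto")
append_entry(log, requested="close ticket", planned="call tickets.close",
             executed="tickets.close", outcome="success", approved_by="user:alice")
print(verify(log))  # True
```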

Dimension 4: Lifecycle Management

Agents require versioned deployment, canary rollout, rollback capability, and retirement procedures. Treat agent deployments with the same operational rigor as microservice deployments: blue-green or canary releases, health checks, and automated rollback on error rate thresholds.

The Three-Layer Governance Model

Microsoft's agent governance research proposes three layers that map well to enterprise adoption:

- Layer 1, Organizational: policies, standards, and risk frameworks that apply to all agents across the organization.

- Layer 2, Platform: infrastructure-level controls including identity management, permission enforcement, audit logging, and monitoring. This is the layer where governance becomes automated and enforceable.

- Layer 3, Agent: per-agent configuration including behavioral contracts, tool permissions, escalation rules, and performance thresholds.

Microsoft Governance Toolkit

Microsoft's Agent Governance Toolkit provides a reference implementation for the three-layer model, including templates for agent registration, permission scoping, audit trail configuration, and compliance reporting. The toolkit is framework-agnostic and designed to integrate with existing enterprise identity and access management systems.

6. Human-in-the-Loop: Calibrated Autonomy

The goal is calibrated autonomy: agents operate independently within well-defined boundaries, and humans intervene only when the agent reaches the edge of its competence or authority. Three models define the spectrum.

Model 1: Approval Gates

The agent proposes actions and waits for human approval before executing. This is the safest model and the right starting point for high-stakes workflows: financial transactions, customer communications, infrastructure changes. The cost is latency; every action blocks on human response time.

Model 2: Exception-Based Escalation

The agent operates autonomously for routine actions and escalates to humans only when it encounters uncertainty, errors, or actions that exceed its defined authority. This model works well for mature workflows where the routine cases are well understood and the exception cases are clearly defined.

Model 3: Audit-Based Oversight

The agent operates with full autonomy, and humans review actions after the fact through audit logs and quality sampling. This model is appropriate only for low-risk, high-volume tasks where the cost of occasional errors is lower than the cost of human review: content tagging, log classification, data enrichment.

Start with approval gates for every new workflow. Move to exception-based escalation only after the agent has demonstrated consistent accuracy over a meaningful sample size (at least 200 tasks). Move to audit-based oversight only for workflows where the error cost is quantifiably low and the volume makes human review impractical.
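The graduation rule above can be written down as an explicit policy function. The 200-task minimum comes from the text; the 98% accuracy bar is an illustrative assumption, since the right threshold depends on the workflow, and the function and label names are hypothetical.

```python
def recommend_oversight(tasks_reviewed: int,
                        accuracy: float,
                        error_cost_is_low: bool,
                        review_is_impractical: bool,
                        min_tasks: int = 200,
                        min_accuracy: float = 0.98) -> str:
    """Pick the least restrictive oversight model the evidence supports."""
    # Not enough evidence, or not accurate enough: keep a human on every action.
    if tasks_reviewed < min_tasks or accuracy < min_accuracy:
        return "approval_gates"
    # Full autonomy only when errors are cheap and volume rules out per-item review.
    if error_cost_is_low and review_is_impractical:
        return "audit_based_oversight"
    return "exception_based_escalation"


print(recommend_oversight(150, 0.99, False, False))  # approval_gates
print(recommend_oversight(500, 0.99, False, False))  # exception_based_escalation
print(recommend_oversight(500, 0.99, True, True))    # audit_based_oversight
```

Encoding the rule this way makes each promotion to greater autonomy a reviewable decision with recorded inputs rather than an informal judgment.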

7. Case Studies: What Actually Worked


Stanford Enterprise AI Playbook Findings

Stanford's Digital Economy Lab studied enterprise AI agent deployments and found that the highest-performing systems shared three characteristics: they targeted narrow, well-defined workflows rather than broad automation; they implemented graduated autonomy (starting with approval gates and widening scope as confidence grew); and they invested in observability from day one rather than adding it after production incidents forced the issue.

The productivity data is striking. Systems with 80% or higher AI autonomy (exception-based escalation or audit-based oversight) delivered a median 71% productivity gain. Systems requiring full human approval for every action delivered only 30%. The difference is the operational overhead of approval gates: when humans must review every action, the agent becomes a suggestion engine rather than an autonomous system.

Specific Deployments

Three deployment patterns appeared repeatedly in successful case studies:

Single-agent, single-workflow. One agent handles one well-defined workflow end to end. A customer support agent that processes refund requests, or a code review agent that analyzes pull requests against a defined style guide. These deployments succeed because the scope is narrow enough to test exhaustively and the failure modes are predictable.

Pipeline agents with validation gates. A sequence of agents, each handling one step of a multi-step process, with automated validation between steps. A content pipeline where one agent researches, another drafts, a third edits, and automated checks verify quality at each handoff. This pattern works because each agent can be tested and improved independently.

Incremental rollout with rollback. Teams that deployed agents one workflow at a time, with explicit rollback procedures and success criteria for each expansion, reported higher reliability than teams that launched multiple agents simultaneously. The incremental approach generates operational knowledge that compounds across deployments.

8. From Platform Engineering to Agent Governance

The operational challenges of running agents in production are, at their core, platform engineering challenges. Identity management, permission enforcement, audit logging, deployment orchestration, and observability are all problems that platform teams have solved for microservices. The question is how to adapt those solutions for autonomous systems that make their own decisions.

This is the problem space we are working on at Scaletific. The Agent Enforcement Plane (AEP) provides governance infrastructure that sits between your agents and your production systems: contract-based execution validation, permission enforcement, and audit trails that apply consistently across every agent in your fleet, regardless of the framework they use.

The AEP model treats agent governance as an infrastructure concern rather than an application concern. Just as a service mesh handles mTLS, rate limiting, and observability for microservices without requiring changes to application code, an enforcement plane handles identity, permissions, and audit for agents without requiring changes to agent logic.

Learn more about how the Agent Enforcement Plane works and how it fits into your existing platform engineering stack at scaletific.com.

9. The Production Readiness Checklist

Ten patterns that production-ready agent deployments share, organized across five dimensions:

Architecture

- Start with the simplest pattern that solves the problem. Use Anthropic's complexity ladder as a decision framework, and move up only when the current level demonstrably fails.

- Isolate context per agent. In multi-agent systems, each agent should operate with its own context window, receiving only the information relevant to its task.

Reliability

- Implement iteration caps and timeout gates on every agent loop. Define maximum step counts, maximum token budgets, and maximum wall-clock time per task.

- Validate tool outputs before acting on them. Treat every tool response as untrusted input and validate it against expected schemas before using it in downstream reasoning.

Observability

- Capture reasoning traces for every task. Record the full chain of thought, action, and observation so that failures can be investigated after the fact.

- Track tool call success rates and task completion metrics, not just token counts. Token usage is a cost metric; tool success and task completion are quality metrics.

Governance

- Assign every agent a verifiable identity with scoped permissions. Agents should authenticate with the same rigor as human users.

- Produce immutable audit trails for every action. Every tool call, every decision, and every escalation must be logged in a format that supports both incident investigation and compliance review.

Organization

- Deploy agents incrementally, one workflow at a time, with explicit rollback procedures and success criteria for each expansion.

- Start with approval gates for every new workflow. Graduate to exception-based escalation only after the agent demonstrates consistent accuracy over a meaningful sample.

Conclusion

The agents that survive production share a common profile. They are simple in architecture, narrow in scope, and wrapped in operational infrastructure: observability, governance, and human-in-the-loop escalation. They are deployed incrementally, monitored continuously, and governed by explicit contracts rather than implicit assumptions.

The research from Anthropic, Stanford, and Microsoft converges on the same principle: start with the simplest pattern that solves the problem, invest in observability and governance from the beginning, and expand scope only when the current deployment proves reliable. The teams that follow this principle report higher reliability, faster iteration, and lower operational costs than those that launch ambitious multi-agent systems on day one.

Production readiness is a spectrum, and the checklist above provides a roadmap. Start at the top, work your way down, and treat each item as a gate rather than a suggestion. The agents that survive production are the ones built by teams that took the operational engineering as seriously as the model engineering.

For MCP server directories, registries, and meta-indexes, browse the MCP Directories index.

The patterns above hold up because the framework underneath them was chosen on purpose. For the framework selection layer, see our framework comparison across LangChain, CrewAI, AutoGen, and LangGraph. For the discipline that decides which workflows are worth automating in the first place, see how to audit a workflow before you automate it.