Generative-AI traffic to U.S. retail sites grew 693% year on year over the 2025 holiday season. AI-sourced shoppers convert 31% better than non-AI referrals. ChatGPT alone receives 84 million shopping questions per week from U.S. consumers, and AI Overviews now appear on 14% of all shopping queries, up from 2.1% sixteen months earlier. The shopping channel has moved inside the assistant. Most stores have not noticed.

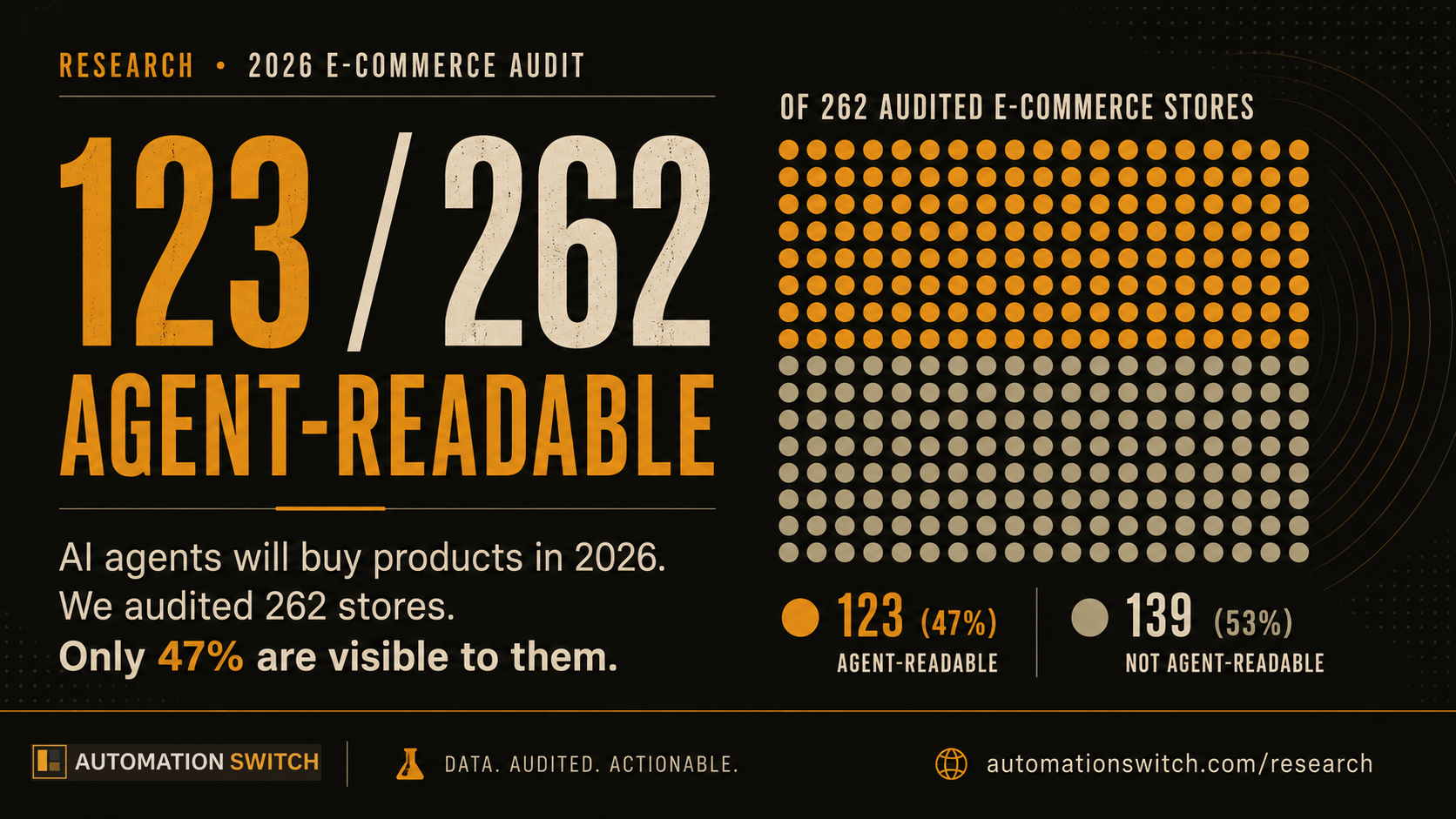

We audited 262 e-commerce stores across six platforms to test whether AI shoppers can actually find their products. The result is the headline above. Forty-seven percent of stores let an AI agent reach a product detail page with structured product data. On BigCommerce, one in 33. The agent-readable e-commerce problem is structural, not signal-weakness. The checkout layer for AI shopping (x402, ACP, AP2) is being built right now, and we will cover it in upcoming articles. This piece is about whether your store will even be recommended in the first place.

We will tell you which platforms made it through, which household-name brands the audit could not read, the five fixes that lift any store by roughly forty points in a single sprint, and the dataset behind every number. Every signal we measured is detectable in code. Every signal is fixable in an afternoon. The agent shopping era is starting whether your store is ready for it or not.

Across our 262-store audit, 47% of stores are agent-readable. On BigCommerce, the figure is 3 of 100.

Why we audited e-commerce

We published Wave 1 of the Scaletific Agent-Readability Index (SARI) two days ago. That audit covered 103 publisher sites and produced a median score of 57 out of 100. The publisher wave was about citation traffic: who gets quoted by Claude, ChatGPT, Perplexity, Gemini, and Bing when readers ask a question. Wave 2 is about something else. Wave 2 is about revenue. When AI agents recommend a product, the store on the other end either takes the conversion or loses it to a competitor that was easier to read.

We audited 262 stores across six platforms: Shopify, WooCommerce, BigCommerce, Squarespace, Magento, and Wix. The sample is fingerprint-validated. Every domain in our dataset emitted live platform markers we could detect from the homepage HTML, so the cohort labels reflect what is actually running today, not what a stale listicle said three years ago. The full audit pipeline is open-source. Anyone can re-run it.
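To make fingerprint validation concrete, here is a minimal sketch of marker-based platform detection on homepage HTML. The marker substrings and the function name are illustrative assumptions, not the fingerprints the audit pipeline uses; the published audit code is the authority on those.

```python
from typing import Optional

# Hypothetical marker table: these substrings are illustrative assumptions,
# not the fingerprints used by the published audit pipeline.
PLATFORM_MARKERS = {
    "Shopify": ("cdn.shopify.com",),
    "WooCommerce": ("wp-content/plugins/woocommerce",),
    "BigCommerce": ("bigcommerce.com",),
    "Squarespace": ("squarespace.com",),
    "Magento": ("mage/cookies", "Magento_"),
    "Wix": ("wixstatic.com",),
}


def fingerprint_platform(homepage_html: str) -> Optional[str]:
    """Return the one platform whose markers appear in the HTML, else None."""
    html = homepage_html.lower()
    hits = [
        platform
        for platform, markers in PLATFORM_MARKERS.items()
        if any(marker.lower() in html for marker in markers)
    ]
    # Zero matches or several matches both count as "not validated".
    return hits[0] if len(hits) == 1 else None
```

Treating ambiguous matches as unvalidated keeps cohort labels conservative: a store is only assigned to a platform when exactly one marker family is present.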

The case for measuring this now: U.S. online holiday sales reached $257.8 billion in the 2025 season, growing 6.8% year on year, with 25 days exceeding $4 billion in single-day spend (Adobe Analytics, January 2026). Bounce rates for AI-arriving shoppers are 33% lower than for shoppers from other channels. Forty-six percent of consumers say they trust AI-generated results, and 55% actively click on the links AI assistants suggest. This is no longer the future channel. It is the present channel, and most stores have not been audited for it.

JSON-LD on every article is generated from the document, not handwritten. Article, Product, FAQPage, ItemList, and BreadcrumbList all emit on render. The sitemap is a dynamic Next.js route that picks up new articles within an hour of publish, fed by a hygiene test that fails the PR if the sitemap regresses. llms.txt is generated from a script that reads our CMS, and we run it after content merges. The next guardrails on our list: JSON-LD validity gating in CI, llms.txt freshness automation, and an end-to-end SARI baseline check that fails the build if our own site drops below the rubric. We will retest the entire site against this rubric in 90 days and publish what changed.

How we measured

The Scaletific Agent-Readability Index is deterministic. Every signal we score is binary or near-binary, and every signal is detectable in code. Two auditors running the same script on the same site produce the same score. No human judgement enters the rubric. The full methodology and the audit code are published alongside this article.

The 100-point score is the unweighted sum of five categories. Three of the five carry over from Wave 1. Two are e-commerce-specific.

| Category | Max points | What an agent gains |
|---|---|---|
| Discovery (universal) | 25 | Can find the store's content map without crawling blindly |
| Page Structure (Product) | 30 | Can parse a product detail page and quote price, availability, shipping, variants, reviews |
| Identity & Attribution | 20 | Can attribute the product to a real brand with an entity-resolvable identifier |
| Content Addressability (universal) | 15 | Can cite a specific span on a page, not just the page URL |
| AI Bot Policy Clarity (universal) | 10 | Receives a declared, differentiated position on AI access |

The Discovery, Content Addressability, and AI Bot Policy categories carry over from publishers unchanged. The Page Structure and Identity & Attribution categories are new for e-commerce. We rebuilt them around the test "could an AI assistant give a better answer to a real shopper because of this signal?" If the answer is no, we excluded the signal. That ruled out signals that exist purely for Google rich-snippet rendering: priceValidUntil, OG product tags, mainEntityOfPage. We kept signals that change what the assistant can actually say to a user: shippingDetails, hasMerchantReturnPolicy, hasVariant, gtin, FAQPage co-located on the product detail page.
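The unweighted sum can be sketched in a few lines. The category maxima come from the table above; the function name and the clamping of out-of-range inputs are assumptions for illustration, not the published scoring code.

```python
# Category maxima as listed in the rubric table.
CATEGORY_MAX = {
    "Discovery": 25,
    "Page Structure": 30,
    "Identity & Attribution": 20,
    "Content Addressability": 15,
    "AI Bot Policy Clarity": 10,
}

# The three categories the article marks as universal.
UNIVERSAL = ("Discovery", "Content Addressability", "AI Bot Policy Clarity")


def sari_total(category_scores: dict) -> float:
    """Sum per-category scores, clamping each to the range [0, category max]."""
    total = 0.0
    for category, maximum in CATEGORY_MAX.items():
        earned = category_scores.get(category, 0.0)
        total += min(max(earned, 0.0), maximum)
    return total


universal_points = sum(CATEGORY_MAX[name] for name in UNIVERSAL)
```

Because no category is weighted, a point of Discovery and a point of Page Structure move the total by the same amount.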

Page Structure (30 points)

- Product JSON-LD present on the PDP (6 points). The agent has structured data to parse instead of inferring from prose.

- offers.price and offers.priceCurrency (4 points). The agent can quote price authoritatively.

- offers.availability (3 points). The agent can answer "is it in stock right now".

- aggregateRating with ratingValue and reviewCount (4 points). The agent can quote social proof: 4.6 from 2,341 reviews.

- brand structured as Brand or Organization (3 points). The agent disambiguates manufacturer from retailer.

- image present on Product (3 points). Agents surface visuals and use CLIP-style retrieval.

- shippingDetails or hasMerchantReturnPolicy (4 points). The agent can answer "does it ship to UK" or "what is the return window".

- hasVariant, ProductGroup, or model (3 points). The agent can match "in size medium" or "in blue".
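The Page Structure checks above can be expressed as a sketch against one parsed Product JSON-LD object. The point values follow the list; the function names, the choice to look for shippingDetails and hasMerchantReturnPolicy on the offer, and the handling of list-valued properties are assumptions, not the audit's actual implementation.

```python
def _first(value):
    """JSON-LD values may be a single object or a list; take the first item."""
    if isinstance(value, list):
        return value[0] if value else None
    return value


def score_page_structure(product) -> int:
    """Score one parsed Product JSON-LD object (or None) out of 30."""
    if not isinstance(product, dict):
        return 0
    score = 6  # Product JSON-LD present on the PDP

    offer = _first(product.get("offers"))
    if not isinstance(offer, dict):
        offer = {}
    if offer.get("price") is not None and offer.get("priceCurrency"):
        score += 4
    if offer.get("availability"):
        score += 3

    rating = _first(product.get("aggregateRating"))
    if (isinstance(rating, dict)
            and rating.get("ratingValue") is not None
            and rating.get("reviewCount") is not None):
        score += 4

    brand = _first(product.get("brand"))
    if isinstance(brand, dict) and brand.get("@type") in ("Brand", "Organization"):
        score += 3
    if product.get("image"):
        score += 3
    # Assumption: shipping and return policy are read from the offer.
    if offer.get("shippingDetails") or offer.get("hasMerchantReturnPolicy"):
        score += 4
    if (product.get("hasVariant") or product.get("model")
            or product.get("@type") == "ProductGroup"):
        score += 3
    return score
```

A bare `{"@type": "Product"}` object earns only the 6 presence points under this sketch, which mirrors the article's point that the gap sits in the granular signals rather than in the headline markup.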

Identity & Attribution (20 points)

- Organization JSON-LD on the homepage (5 points). The agent knows who runs the store.

- Organization sameAs with 2 or more entries (4 points). The agent links the store to the entity graph (Wikidata, LinkedIn, X, Wikipedia).

- GTIN present on the PDP (4 points). Globally entity-resolvable product identifier. Same GTIN across retailers means the same physical SKU; agents can cross-reference price and availability.

- SKU or MPN only, no GTIN (1 point partial). Site-local identifier. Useful inside the store, not resolvable across the open web.

- Reviews structured as Review with author and reviewRating (4 points). The agent can quote individual reviews, not just the aggregate.

- FAQPage co-located on the product detail page (3 points). The agent answers PDP-specific questions like wash care, shipping window, return window from structured Q&A.

What does not change between publishers and e-commerce

The 50 universal points are identical. Whether you publish articles or sell products, the same discovery signals apply: an llms.txt file, a discoverable sitemap, an MCP well-known endpoint, robots.txt directives that distinguish training crawlers from citation crawlers, stable canonical URLs, anchored headings, and an identifiable main content block. The bot taxonomy below applies to every site, every vertical. That is the universal layer.

The aggregate findings

The e-commerce median is about half the publisher-wave median of 57. By this measure, e-commerce is roughly half as agent-readable as publishing.

Of 262 audited stores:

- 5 (1.9%) have an llms.txt file at the root.

- 2 (0.8%) have an MCP well-known endpoint.

- 240 (91.6%) have a discoverable sitemap. Near-universal.

- 86 (32.8%) declare any AI bot directive in robots.txt.

- 0 of 262 stores apply a differentiated policy between training crawlers and citation crawlers.

The zero is the most important number on this list. In the publisher wave, 8 of 103 sites (7.8%) operated a differentiated bot policy. Across 262 e-commerce stores we audited for Wave 2, zero do. The most-blocked AI crawler is CCBot (Common Crawl), disallowed by 81 stores. The next four most-blocked are ClaudeBot, Applebot-Extended, Google-Extended, and GPTBot, each disallowed by 80 stores. OAI-SearchBot appears in robots.txt on 33 stores (31 Disallow, 2 Allow). PerplexityBot appears on 30 stores (28 Disallow, 2 Allow). Claude-SearchBot is named in the directives of just one store. Stores that block training also block citation, even though the assistants treat these as independent decisions.

PDP signal rates (across 490 product detail pages sampled)

| Signal | Adoption rate |
|---|---|
| Product JSON-LD present | 72.9% |
| Image on Product | 67.8% |
| Availability present | 40.4% |
| Brand structured | 30.6% |
| Offers with price and currency | 29.4% |
| Has variant (ProductGroup or hasVariant) | 10.4% |
| AggregateRating with ratingValue and reviewCount | 9.2% |
| GTIN present | 6.3% |
| Reviews structured (Review with author and rating) | 3.7% |
| FAQPage co-located on PDP | 2.9% |
| shippingDetails or hasMerchantReturnPolicy | 0.4% |
| Canonical match (URL displayed equals JSON-LD canonical) | 91.6% |
| Anchors on 50%+ of headings | 2.2% |

Three numbers stand out. Product JSON-LD is on 72.9% of sampled PDPs, which is high compared to the open-web JSON-LD baseline of 41% (HTTP Archive's 2024 Web Almanac, 16.9 million websites). E-commerce as a category is ahead on the headline signal. The shipping and returns figure is 0.4%. Google added shippingDetails and hasMerchantReturnPolicy to the soft-requirement list for free product listings in September 2023 and refreshed the guidance in February 2026. Two and a half years after Google asked for it, almost no one is emitting it. The FAQPage figure on PDPs is 2.9%, and the GTIN figure is 6.3%. The agent-readability problem is not at the headline JSON-LD layer. It is at the granular signal layer that lets an agent answer the next question a shopper asks.

The visibility funnel (the headline finding)

The cohort medians tell one story. The visibility funnel tells the more important one.

We started with 262 curated stores, all fingerprint-validated. We then asked three questions per store. Could the audit reach the homepage and read the sitemap? Could the audit sample a product detail page from that sitemap? Did any of the sampled PDPs emit Product JSON-LD?

Each question filters the population. The funnel is how many stores survive all three filters.

The answer is 47% (123 of 262 stores). Of the other 53%, 93 stores returned low-confidence (no PDP sampled at all), and 46 reached pages with zero Product JSON-LD. We call the second group the audit-blind cluster.

| Platform | Curated | Reachable | PDPs with schema | Agent-readable % |
|---|---|---|---|---|
| Shopify | 50 | 46 | 40 | 80% |
| Wix | 50 | 38 | 38 | 76% |
| Squarespace | 50 | 29 | 24 | 48% |
| WooCommerce | 45 | 25 | 15 | 33% |
| Magento | 34 | 26 | 5 | 15% |
| BigCommerce | 33 | 5 | 1 | 3% |

The cohort gap is structural, not editorial. A store on Shopify is roughly 26 times more likely to be agent-readable than a store on BigCommerce. The store operator did not choose that ratio. The platform chose it for them. Shopify Plus integrated with the Agentic Commerce Protocol at platform level in September 2025. ACP and AP2 integrations on BigCommerce and Magento are merchant-by-merchant or absent. The gap widens, not narrows.
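The funnel arithmetic can be reproduced directly from the table. The sketch below uses only the published counts; the variable names are illustrative.

```python
# platform: (curated, reachable, stores with Product JSON-LD on a sampled PDP)
FUNNEL = {
    "Shopify": (50, 46, 40),
    "Wix": (50, 38, 38),
    "Squarespace": (50, 29, 24),
    "WooCommerce": (45, 25, 15),
    "Magento": (34, 26, 5),
    "BigCommerce": (33, 5, 1),
}


def readable_rate(platform: str) -> float:
    """Share of curated stores that survive all three funnel filters."""
    curated, _reachable, with_schema = FUNNEL[platform]
    return with_schema / curated


curated_total = sum(row[0] for row in FUNNEL.values())
reachable_total = sum(row[1] for row in FUNNEL.values())
readable_total = sum(row[2] for row in FUNNEL.values())

overall_rate = readable_total / curated_total        # the headline share
low_confidence = curated_total - reachable_total     # no PDP sampled
audit_blind = reachable_total - readable_total       # PDP reached, no schema
shopify_vs_bigcommerce = readable_rate("Shopify") / readable_rate("BigCommerce")

for platform in FUNNEL:
    print(f"{platform:12s} {readable_rate(platform):6.1%}")
print(f"Overall      {overall_rate:6.1%}")
```

The two drop-off counts, 93 low-confidence stores and 46 audit-blind stores, fall out of the column totals, and the Shopify-to-BigCommerce ratio comes to roughly 26.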

What the funnel does not measure: whether a store has a Google Merchant Center feed or an OpenAI Product Feed (via ACP). Those are dedicated commerce surfaces that AI shopping assistants do consume. We address the feeds-versus-schema question below. The funnel measures the open-web surface that an agent crawls when the dedicated feed is missing, stale, or out of scope for the query. Both surfaces matter. This audit measures the second.

The audit-blind cluster

Forty-six stores in our dataset returned reachable pages (the audit hit a 200 OK on the homepage and a sitemap entry) but failed to emit Product JSON-LD on any sampled product detail page. They scored between 10 and 24 out of 100. Most of them score 15. We call this the audit-blind cluster. The pattern is consistent: the sitemap exposes category or content URLs in the standard product-path positions, the WAF returns a captcha challenge instead of a product page, or the PDP renders client-side and the audit's standard HTTP fetch sees an empty React shell.

These are not small stores. The cluster includes some of the most recognisable consumer-brand domains in the dataset.

- The audit could not sample a product detail page on gymshark.com. Scored 15 of 100.

- The audit could not sample a product detail page on us.christianlouboutin.com or eu.christianlouboutin.com. Both scored 15.

- The audit could not find structured product data on the sampled pages from store.liverpoolfc.com, usa.canon.com, lladro.com, coca-colastore.com, lindtusa.com, templespa.com, or closetlondon.com. All scored between 15 and 28.

- The audit could not sample a product detail page on porterandyork.com. Scored 10, the lowest in the dataset.

- Storefronts where the audit could not reliably sample any product detail page, including spanx.com, harveynichols.com, fredperry.com, and richersounds.com, fell into the low-confidence bucket of 93 stores below the 46-store audit-blind cluster. Likely causes: JS-heavy PDP rendering, bot-protection layers, or sitemap layouts the audit could not resolve to a product page.

Likely causes vary store to store: WAF challenge from DataDome or Cloudflare or Akamai, JS-only rendering on the PDP, non-standard sitemap layouts that expose category URLs in the slot where product URLs would normally live. The audit does not assign blame. It records what an AI agent following the same discovery path would also experience.
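To show what a plain HTTP fetch can and cannot see, here is a minimal sketch that extracts Product nodes from the JSON-LD in raw HTML using only the standard library. It is not the audit script; the class and function names are hypothetical, and malformed JSON-LD is simply treated as absent.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect parsed bodies of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.documents = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.documents.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is treated as absent


def _walk(node):
    """Yield every dict nested anywhere inside a JSON-LD document."""
    if isinstance(node, dict):
        yield node
        for value in node.values():
            yield from _walk(value)
    elif isinstance(node, list):
        for item in node:
            yield from _walk(item)


def find_products(html: str) -> list:
    """Return all nodes typed Product or ProductGroup in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    products = []
    for document in collector.documents:
        for node in _walk(document):
            types = node.get("@type")
            types = types if isinstance(types, list) else [types]
            if "Product" in types or "ProductGroup" in types:
                products.append(node)
    return products
```

A server-rendered PDP yields at least one node here. A client-rendered shell such as `<div id="root"></div>` plus a script bundle yields an empty list, which is the situation described above as an empty React shell.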

We ran the audit against each store's public sitemap and three sampled product detail pages on 10 May 2026. If your site has changed since, or if you have improved any of the signals in our rubric, contact us and we will re-run. The dataset is timestamped and versioned. Re-audits get a new date stamp, and the published numbers update.

Top 10 and bottom 10

Top of the dataset by SARI score. Nine of the top ten run on Shopify or WooCommerce.

| Store | Score | Platform |
|---|---|---|
| 01. Endoca (endoca.com) | 65.0 | WooCommerce |
| 02. Saratoga Wine (saratogawine.com) | 63.0 | WooCommerce |
| 03. Blueland (blueland.com) | 58.0 | Shopify |
| 04. Buffy (buffy.co) | 51.0 | Shopify |
| 05. Alex and Ani (alexandani.com) | 49.0 | Shopify |
| 06. Bliss World (blissworld.com) | 47.0 | Shopify |
| 07. Magic Spoon (magicspoon.com) | 47.0 | Shopify |
| 08. Outdoor Voices (outdoorvoices.com) | 47.0 | Shopify |
| 09. Fashion Nova (fashionnova.com) | 44.0 | Shopify |
| 10. Get Olympus (getolympus.com) | 44.0 | Magento |

The top scorers share a profile. They emit Product JSON-LD with structured offers, availability, brand, and image on every sampled PDP. They have an Organization on the homepage. Most carry sameAs links to at least two entity-graph nodes. Five stores in the dataset emit aggregateRating, structured Review, and Brand together on a sampled PDP: Endoca, Saratoga Wine, Aheadworks, 100percentpure, and Burrow. Endoca and Saratoga Wine are the top two scorers in that group. It is the rarest combination in the rubric, and it is why a small WooCommerce CBD oil retailer and a regional wine merchant outscore Coca-Cola, Liverpool FC, and Christian Louboutin.

Bottom of the dataset by SARI score.

| Store | Score | Platform |
|---|---|---|
| Porter & York (porterandyork.com) | 10.0 | WooCommerce |
| Caesarstone US (caesarstoneus.com) | 15.0 | WooCommerce |
| Canon US (usa.canon.com) | 15.0 | Magento |
| Christian Louboutin US (us.christianlouboutin.com) | 15.0 | Magento |
| Swedol (swedol.se) | 15.0 | Magento |
| Liverpool FC Store (store.liverpoolfc.com) | 15.0 | Magento |
| Ruggable (ruggable.com) | 15.0 | Shopify |
| Performance Health (performancehealth.com) | 15.0 | Magento |
| Lladró (lladro.com) | 15.0 | Magento |
| Hartsofstur (hartsofstur.com) | 15.0 | Magento |

The audit-blind cluster skews Magento. Seven of these ten lowest scorers run on Magento Open Source or Adobe Commerce. The pattern is consistent with what the visibility funnel showed earlier: Magento sites tend to expose category URLs (/catalog/category/view/id/N) in their sitemaps and serve the actual product pages from non-standard slots that the audit cannot reach without manual configuration. An AI agent crawling these stores hits the same wall. The agent does not get to the product.
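The sitemap pattern described here is easy to detect. The sketch below separates Magento-style category URLs from everything else in a sitemap; the category path pattern comes from this article, while the function name and the sample domain are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Category path shape quoted in the article: /catalog/category/view/id/N
CATEGORY_PATH = re.compile(r"/catalog/category/view/id/\d+")


def split_sitemap_urls(sitemap_xml: str):
    """Return (category_urls, other_urls) from a <urlset> sitemap document."""
    root = ET.fromstring(sitemap_xml)
    urls = [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
    categories = [url for url in urls if CATEGORY_PATH.search(url)]
    others = [url for url in urls if not CATEGORY_PATH.search(url)]
    return categories, others


SAMPLE_SITEMAP = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://shop.example/catalog/category/view/id/12</loc></url>"
    "<url><loc>https://shop.example/catalog/category/view/id/47</loc></url>"
    "<url><loc>https://shop.example/blue-widget.html</loc></url>"
    "</urlset>"
)
```

A sitemap whose entries fall mostly into the first list gives a crawler no direct route to a product page, which is the wall described above.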

The picture changes once you filter to stores where the audit did parse a real product detail page. The bottom 10 among those 123 stores are all Wix storefronts scoring 18.3 to 24.0 (internationaldiamondimporters.com at 18.3, then nine stores tied at 24.0 including ayeletraziel.art, beckandcap.com, blacksheepbikes.com, coalandcanary.com, copperandbrass.net, furrynecks.co.uk, haircomesthebride.com, handlebend.com, hdoeuvre.com). Wix surfaces a product detail page reliably, which is why most Wix stores cleared the visibility funnel; the trade-off is a low signal ceiling without operator intervention.

What about Google Merchant Center and the OpenAI Product Feed?

A fair question came up while we were preparing this article. ChatGPT Instant Checkout consumes the OpenAI Product Feed (codified in the Agentic Commerce Protocol). Google Gemini and AI Overviews consume the Google Merchant Center feed. Microsoft Copilot is integrating with the Microsoft Merchant Center feed. If a retailer is wired into the dedicated commerce surfaces, do they still need to worry about on-site Product JSON-LD?

Three points keep the visibility funnel relevant even when the feeds are in place.

- The dedicated feeds are not universal. Not every retailer is in Google Merchant Center. Not every retailer is ACP-integrated yet. Stripe and OpenAI launched ACP on 29 September 2025, and Walmart joined the same month. Salesforce Agentforce Commerce integrated on 14 October 2025. The protocol is months old, and the rollout is in motion. When agents cannot find a dedicated feed, they fall back to crawling.

- AI Overviews are not authoritative on Merchant Center alone. Google's AI Overviews surface schema-marked content from across the open web, not just Merchant Center listings. According to Visibility Labs' analysis of 20.9 million shopping keywords in March 2026, AI Overviews appear on 83% of "best [product]" queries, 35% to 45% of review and comparison queries, and 13% to 14% of transactional queries. For 83% of "best [product]" queries, the answer pulls from review sites, buying guides, and on-site product pages, all of which require parseable schema.

- The feeds do not solve the structural problem. The audit measures whether an agent can find a PDP in the sitemap, fetch it, and parse structured data. A WAF-blocked site or a JS-only-rendered PDP fails the audit even if the merchant is in every feed. The visibility funnel is downstream of the feed question, not parallel to it.

The argument we are not making: that schema replaces dedicated commerce feeds. The argument we are making: that schema is the floor. Stores that fail the agent-readability audit are also the stores most likely to have inconsistent or missing feed integrations, and the worst-case fallback for an agent is to crawl your site. If your site cannot be crawled, you are not in the answer.

The five fixes that lift any store by forty points

The job of every e-commerce operator from now on is to be on the other end of every agent-relevant shopping question. The reader's question arrives, the assistant retrieves and quotes someone, and the only contestable variable is whether your store made it possible to be that someone.

The five changes below are how you make it possible. They are sequenced by point recovery.

- Add an llms.txt file at the root of your domain. 10 points. One file. Half a day. 98.1% of the audited cohort is missing this. Five of 262 stores have one.

- Audit your robots.txt for differentiated AI policy. Up to 10 points. The fix is a paragraph of explicit Allow and Disallow rules across the AI bot taxonomy at the bottom of this article. Zero of 262 stores have this today.

- Make every PDP carry valid Product JSON-LD with offers, availability, aggregateRating, brand, and image. Up to 14 points. 27.1% of audited PDPs have no Product JSON-LD at all. Among the 72.9% that do, the granular signals (shippingDetails, hasVariant, aggregateRating with both fields) are missing on the majority.

- Emit shippingDetails and hasMerchantReturnPolicy. 4 points and Google's free product listings eligibility. Google asked for this in September 2023. 99.6% of audited PDPs still do not emit it. Recent Google guidance allows an Organization-level shipping and return policy that PDPs can reference, which reduces the per-PDP burden.

- Add a FAQPage JSON-LD object co-located on the PDP plus a GTIN on the Product. 7 points combined, plus answers to product-specific questions (wash care, shipping window, return process, sizing) that AI assistants quote directly to shoppers. 2.9% of PDPs have FAQ, 6.3% have GTIN.

Total: roughly forty points. Achievable in a single sprint. Every store in the top decile of our dataset has done at least four of these five.
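As an illustration of fixes three to five, the sketch below builds one Product object and one FAQPage object and serialises them as JSON-LD. Property names follow schema.org; every value (store URL, brand, prices, the all-zero GTIN, the FAQ text) is a placeholder, and placing shippingDetails and hasMerchantReturnPolicy on the offer is one common layout, not the only valid one.

```python
import json


def build_pdp_jsonld() -> list:
    """Return [Product, FAQPage] JSON-LD objects with placeholder values."""
    product = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": "Sample Merino Sweater",                    # placeholder
        "image": ["https://shop.example/img/sweater.jpg"],  # placeholder
        "brand": {"@type": "Brand", "name": "Example Brand"},
        "gtin": "00000000000000",                           # placeholder GTIN
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": 4.6,
            "reviewCount": 2341,
        },
        "offers": {
            "@type": "Offer",
            "price": "89.00",
            "priceCurrency": "GBP",
            "availability": "https://schema.org/InStock",
            "shippingDetails": {
                "@type": "OfferShippingDetails",
                "shippingDestination": {
                    "@type": "DefinedRegion",
                    "addressCountry": "GB",
                },
                "shippingRate": {
                    "@type": "MonetaryAmount",
                    "value": "4.95",
                    "currency": "GBP",
                },
            },
            "hasMerchantReturnPolicy": {
                "@type": "MerchantReturnPolicy",
                "applicableCountry": "GB",
                "returnPolicyCategory":
                    "https://schema.org/MerchantReturnFiniteReturnWindow",
                "merchantReturnDays": 30,
            },
        },
    }
    faq = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [{
            "@type": "Question",
            "name": "How should I wash this sweater?",
            "acceptedAnswer": {
                "@type": "Answer",
                "text": "Hand wash cold and dry flat.",
            },
        }],
    }
    return [product, faq]


if __name__ == "__main__":
    for document in build_pdp_jsonld():
        print(json.dumps(document, indent=2))
```

Each printed object would go in its own `<script type="application/ld+json">` tag in the server-rendered PDP, so that a plain HTTP fetch sees it without executing JavaScript.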

We opened with a promise. This article would tell you which changes move your store the most. Here is the answer in one line. llms.txt at the root, plus differentiated training-versus-citation rules in robots.txt, plus structured Product JSON-LD with offers, availability, brand, image, and rating, plus shippingDetails and hasMerchantReturnPolicy, plus FAQPage and GTIN on the PDP. Forty points. One sprint.

What comes next: the agent-checkout layer

Three new protocols landed in 2025 that turn AI shopping from a discovery channel into a transaction channel. We will cover each in its own article. They are mentioned here only because the audit you have just read measures the layer underneath all of them. If your store fails the visibility funnel, no protocol upstack saves it.

x402 (Coinbase, May 2025). HTTP 402 Payment Required as an open standard for agent-mediated payments, settled in USDC over Base, Ethereum, Arbitrum, Polygon, and Solana. Moved to the Linux Foundation in late 2025.

Agentic Commerce Protocol (ACP) (Stripe + OpenAI, September 2025). Powers ChatGPT Instant Checkout. Live with Etsy, Shopify merchants, Walmart, Target, and Salesforce Agentforce Commerce from launch.

Agent Payments Protocol (AP2) (Google + Coinbase + 60+ partners, September 2025). Extends the Agent2Agent (A2A) protocol. Donated to the FIDO Alliance for ongoing governance in 2026.

The compounding asymmetry is straightforward. Shopify already supports ACP at platform level. BigCommerce and Magento integrations are merchant-by-merchant or absent. Combine 3% visibility on BigCommerce with no platform-level checkout protocol and the gap widens, not narrows. The visibility audit measures discovery. The checkout layer is the next measurement we will publish.

Read next: What x402 means for autonomous agents. The ACP versus AP2 comparison and the Magento and BigCommerce agent-checkout follow-up are forthcoming.

Why we publish this rubric

We are placing a bet at Automation Switch. Agent legibility is going to be the primitive for how AI agents and human shoppers navigate the open web. Product searches, comparison queries, recommendation flows, transactional intent: every one of them is moving through agents asking sites to surface what they sell. The store that returns the cleanest answer wins the recommendation, and the recommendation is increasingly the click. Then the conversion.

We will retest our own site against this rubric in 90 days and publish what changed. The next research wave (law firms, Wave 3) will follow the same six-step pipeline. Each subsequent wave widens the surface that agent-readable open-web commerce covers.

If we are right, the e-commerce operators who internalise this work over the next twelve months will be the ones whose products sit on the other end of every agent-relevant shopping question. Their stores will be in the answer. The other 53% will not.

The fix list is forty points. The audit is open. The dataset is published. Forty-seven percent of stores in our 262-store sample let an AI agent find a product page with structured product data today. That number will move. The question is which direction your store moves it.

Methodology, dataset, and reproducibility

The full SARI Wave 2 methodology, the audit script, the per-site result JSONs, the cohort medians, the visibility funnel, and the audit-blind cluster JSON are published in the article's companion repository at research/agent-legibility-audit/verticals/ecommerce/. Three notes for researchers and reviewers.

- The audit weights binary signals over interpretive ones. We do not score editorial AI-friendliness or any other subjective dimension. Two auditors get the same score. The full rubric, the verified-included signals, and the verified-excluded SEO-only signals (priceValidUntil, OG product tags, mainEntityOfPage) are documented in methodology.md.

- Aggressive bot-protection posture or JS-heavy PDP rendering produces low-confidence scores in this audit. Of the 262 stores audited, 93 returned low-confidence because we could not reliably sample product pages. The pattern recurs on stores including spanx.com, harveynichols.com, fredperry.com, and richersounds.com. These stores may be highly cited by AI assistants through direct contractual relationships with operators (Google Merchant Center, OpenAI ACP, Microsoft Merchant Center). Our rubric measures the open-web surface; their effective agent-readable footprint may be higher than their SARI score implies.

- The audited PDPs were sampled from each store's declared sitemap. Up to three product pages per store, 490 PDPs total across the 169 stores where the audit reached and parsed product pages (some stores yielded fewer than three reachable PDPs). Re-audits and corrections received after publication are recorded in report.md with timestamps. We will re-run the entire Wave 2 dataset in 90 days.

Bot taxonomy sources (verified)

OpenAI: developers.openai.com/api/docs/bots

Anthropic: support.claude.com/en/articles/8896518

Perplexity: docs.perplexity.ai/guides/bots

Google: developers.google.com/crawling/docs/crawlers-fetchers/google-common-crawlers

Apple: support.apple.com/en-us/119829

Common Crawl: commoncrawl.org/ccbot

EU AI Act dates: digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai