Of twelve dedicated guides on Answer Engine Optimization and Generative Engine Optimization that we audited in May 2026, one mentioned the crawler controls that decide whether your site can be cited at all. The other eleven told you to write FAQ-friendly content for crawlers your site may be locking out.

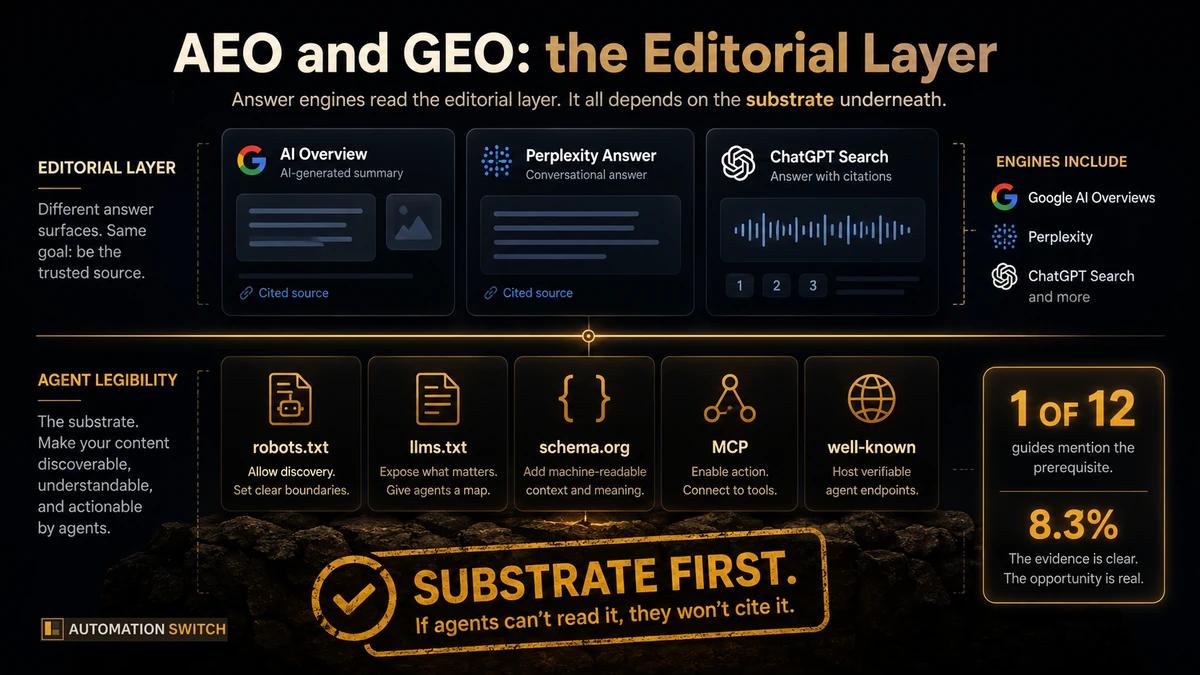

AEO and GEO are the editorial layer of AI search visibility. Agent Legibility is the substrate underneath. Most guides skip the substrate, which is why brands invest in answer-shaped content and still go uncited. This piece closes the gap. You will leave with a clear definition of AEO and GEO, the actual difference between them, the substrate-first checklist that lifts any site by roughly forty points on the Scaletific Agent-Readability Index (SARI), and a case study showing the substrate, not the copy, producing the citations.

What is Answer Engine Optimization (AEO)?

Industry consensus on AEO is settled. HubSpot frames it as "the practice of improving how often and how accurately your business appears in AI-generated answers on AI engines like ChatGPT, Gemini, and Perplexity." Frase calls it "the practice of structuring and enhancing your content so that AI-powered search platforms select it as a cited source." Coursera adds the question-shaped framing: AEO targets queries like "How does X work?" and aims for content that surfaces "as a direct answer to the search result." CXL and Yext converge on the same shape: making your content the one answer engines select, rather than one more option in a list.

The unit of success shifts. SEO measures rank position. AEO measures citation selection. The visible surfaces are featured snippets, knowledge panels, voice assistant answers, and the citation links inside Google AI Overviews. Many of those impressions land as zero-click brand mentions: the user reads the answer and moves on with the brand registered, not the page visited. The CTR maths bend; the brand maths still work.

What is Generative Engine Optimization (GEO)?

GEO is the broader discipline. Semrush defines it as "the practice of optimizing your presence and content to appear in responses generated by AI-powered search systems such as ChatGPT, Google, Perplexity, Claude, and others." Moz emphasises the action verb: GEO "adapts your content to be cited, summarized, or featured inside AI-generated responses." Conductor names the surfaces directly: AI Overviews and LLMs. Writer adds the editorial frame: GEO is about "influencing what an AI engine thinks and says" when the model responds to a query.

GEO has an academic origin. Researchers at Princeton and IIT Delhi formalised the term in a 2024 paper accepted at KDD (Aggarwal et al., arXiv:2311.09735), where they introduced GEO as "the first novel paradigm to aid content creators in improving their content visibility in generative engine responses through a flexible black-box optimization framework." The same paper reports that GEO methods can boost visibility by up to 40 percent in generative engine responses, with efficacy varying across domains.

AEO sits inside GEO. AEO is the answer-retrieval moment, scoped to the live citation surface. GEO is the broader discipline that also covers training-time presence (whether your content is in the corpus the model learned from), retrieval-time selection (whether the live citation crawler picks you), and the synthesis behaviour that decides what surfaces in the rendered answer.

SEO vs AEO vs GEO at a glance

The three disciplines target different signals on different engines on different time horizons. Seven dimensions cover the space.

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Goal | Rank in the SERP and drive clicks | Be selected as the cited answer | Be incorporated and cited inside generated responses |
| Primary engines | Google, Bing | Google AI Overviews, Perplexity, ChatGPT search | ChatGPT, Claude, Gemini, Copilot, Perplexity |
| Ranking signals | Backlinks, on-page keywords, Core Web Vitals | Schema and FAQ markup, conversational structure, entity clarity | Authority, citations, semantic clarity, structured data, training-corpus presence |
| Traffic shape | Click-through to site | Often zero-click; the brand appears inside the answer | Brand referenced inside the synthesised answer; lower click rate, higher intent |
| Weight on legibility | Implicit (Googlebot is universally accommodated) | Rarely discussed in the AEO corpus | Rarely discussed in the GEO corpus, with one exception we name below |
| Training-time vs retrieval-time | Retrieval-time | Retrieval-time | Both: training-time corpus inclusion and retrieval-time citation crawls |
| Measurement | Rankings, sessions, conversions | Citation rate, brand mentions in answers, AI-referred sessions | Brand mention volume, share of voice in AI answers, citation count |

The legibility row is where the analysis usually stops. The next section explains why that gap is empirically measurable and what to do about it.

The legibility prerequisite most guides skip

In May 2026 we ran a small audit of the AEO and GEO corpus itself. Twelve dedicated guides ranked at the top of Google for the canonical queries: HubSpot, Frase, Coursera, Try Profound, CXL, AIOSEO, Marcel Digital on the AEO side; Semrush, Moz, Optimizely, Conductor, Strapi on the GEO side. We scraped each one and grepped for any mention of the technical primitives that decide whether an AI engine can read the site at all: robots.txt, llms.txt, GPTBot, ClaudeBot, OAI-SearchBot, PerplexityBot, Google-Extended, Applebot, CCBot, Claude-SearchBot.

One guide hit. The other eleven had nothing.

Twelve top-ranked guides scraped via Firecrawl in May 2026. Strapi was the single hit, naming robots.txt, llms.txt, and IndexNow. Every marketing-led guide (Semrush, HubSpot, Moz, Coursera, Optimizely, Conductor, Frase, CXL, AIOSEO, Try Profound, Marcel Digital) skipped the substrate.

The guide that hit was Strapi's, written for a developer-focused headless CMS. Strapi names the split exactly: "robots.txt for Googlebot. llms.txt plus IndexNow pings for LLM crawlers." That is the prerequisite. The other eleven guides treated it as already solved and went straight to editorial advice.
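The grep step is simple enough to sketch. What follows is a minimal Python illustration, not the audit pipeline itself: it assumes each guide has already been scraped to plain text (the Firecrawl step is left out), and the function name find_substrate_mentions and the sample guide texts are hypothetical.

```python
import re

# The technical primitives searched for in the audit.
SUBSTRATE_TERMS = [
    "robots.txt", "llms.txt", "GPTBot", "ClaudeBot", "OAI-SearchBot",
    "PerplexityBot", "Google-Extended", "Applebot", "CCBot", "Claude-SearchBot",
]

# One case-insensitive pattern; re.escape keeps the dots in "robots.txt" literal.
PATTERN = re.compile("|".join(re.escape(t) for t in SUBSTRATE_TERMS), re.IGNORECASE)
# Maps any casing of a match back to the canonical spelling above.
CANONICAL = {t.lower(): t for t in SUBSTRATE_TERMS}


def find_substrate_mentions(guides: dict[str, str]) -> dict[str, set[str]]:
    """Map each guide name to the set of substrate terms its text mentions."""
    return {
        name: {CANONICAL[m.group(0).lower()] for m in PATTERN.finditer(text)}
        for name, text in guides.items()
    }


# Hypothetical scraped texts standing in for real guides.
guides = {
    "Guide A": "Structure your FAQ content for answer engines.",
    "Guide B": "Use robots.txt for Googlebot and llms.txt for LLM crawlers.",
}
for name, terms in find_substrate_mentions(guides).items():
    print(name, sorted(terms))  # an empty list means the guide skipped the substrate
```

A guide counts as a hit if its set is non-empty; the per-term sets make it easy to see which primitives each guide actually named.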

The numbers from our own publisher audit show the same gap on the site side. Across 103 publisher sites we scored, the mean Scaletific Agent-Readability Index (SARI) score was 49.9 out of 100. Only six sites had an llms.txt file at the domain root, and only eight differentiated training crawlers from citation crawlers in robots.txt. The fastest single fix, worth about ten points on its own, was adding that llms.txt file. The full dataset and methodology are in "We Audited 103 Publisher Sites for Agent Legibility."

The crawler taxonomy: training-time vs citation-time

Most AEO and GEO coverage talks about "AI crawlers" as a single category. There are at least two, and they answer different questions.

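Training-time crawlers feed the corpus the model learns from. They decide whether your content can end up in a model's training data: GPTBot for ChatGPT, ClaudeBot for Claude, CCBot for Common Crawl (which feeds many models), Google-Extended for Gemini, and Applebot-Extended for Apple Intelligence. The last two are robots.txt control tokens rather than separate crawlers: Google and Apple fetch pages with Googlebot and Applebot, then check these tokens to decide whether the content may be used for AI training. Blocking these agents in robots.txt opts you out of training and corpus inclusion with crawlers that honour the file. Citation visibility stays a separate decision, encoded against a different set of bot identities.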

Citation-time crawlers fetch pages for AI search, so that an engine generating an answer has a fresh source to cite. They include OAI-SearchBot for ChatGPT search, Claude-SearchBot for Claude, PerplexityBot for Perplexity, and Googlebot for AI Overviews. Their fetches serve user questions rather than corpus building, and they are what lets an AI engine render a citation that links back to your site.
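To see which class of crawler is actually reaching a site, server logs can be bucketed by the bot names above. A minimal sketch, assuming you have raw User-Agent strings from your access logs: the token table is a non-exhaustive subset, matching is a case-insensitive substring check, and the sample strings are illustrative approximations rather than exact vendor values. Google-Extended is absent because it is a robots.txt token that never appears in a User-Agent header.

```python
from collections import Counter

# Substrings that identify each crawler class in a User-Agent header.
# A non-exhaustive starting point; vendors add and rename bots.
CRAWLER_CLASSES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "CCBot": "training",
    "OAI-SearchBot": "citation",
    "Claude-SearchBot": "citation",
    "PerplexityBot": "citation",
    "Googlebot": "citation",  # also classic search; AI Overviews draw on its crawl
}


def classify_user_agent(user_agent: str) -> str:
    """Return 'training', 'citation', or 'other' for a raw User-Agent string."""
    ua = user_agent.lower()
    for token, crawler_class in CRAWLER_CLASSES.items():
        if token.lower() in ua:
            return crawler_class
    return "other"


def tally(user_agents: list[str]) -> Counter:
    """Count log lines per crawler class."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


# Illustrative User-Agent strings, not exact vendor values.
sample = [
    "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)",
    "Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0",
]
print(tally(sample))
```

A site that sees training hits but no citation hits is a candidate for the robots.txt problem described next.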

The split matters because the editorial decisions are different. Blocking training-time crawlers keeps your content out of the corpora of models you have not licensed it to. Blocking citation-time crawlers prevents you from being quoted live. Most publisher robots.txt files do not separate the two (only eight of the 103 sites in our audit did), and a single blanket rule, such as one User-agent: * group or one group listing every AI bot, blocks both at once, which is rarely what the operator intended. The audit found this exact pattern repeatedly: sites that wanted citation visibility had inadvertently locked out the citation crawlers along with the training crawlers, often through rules inherited from a template that predated the AI-search era.
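Python's standard-library urllib.robotparser gives a quick way to see what a robots.txt file actually permits each bot. A minimal sketch with two hypothetical files: a template-style blanket group that lists every AI bot together, and a differentiated file that opts out of training while staying citable. The result shows how a compliant parser reads the rules; individual vendors' parsers may differ at the edges.

```python
from urllib.robotparser import RobotFileParser

# Bot tokens named in this section (a subset; vendors add new ones).
TRAINING_BOTS = ["GPTBot", "ClaudeBot", "CCBot", "Google-Extended"]
CITATION_BOTS = ["OAI-SearchBot", "Claude-SearchBot", "PerplexityBot"]

# Hypothetical template-style file: every AI bot shares one Disallow group.
BLANKET = "\n".join(
    [f"User-agent: {bot}" for bot in TRAINING_BOTS + CITATION_BOTS] + ["Disallow: /"]
)

# Hypothetical differentiated file: opt out of training, stay citable.
DIFFERENTIATED = "\n".join(
    [f"User-agent: {bot}" for bot in TRAINING_BOTS]
    + ["Disallow: /", ""]
    + [f"User-agent: {bot}" for bot in CITATION_BOTS]
    + ["Allow: /"]
)


def access_report(robots_txt: str, url: str = "https://example.com/guide") -> dict[str, bool]:
    """Return {bot: may_fetch_url} for every training and citation bot."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in TRAINING_BOTS + CITATION_BOTS}


for name, policy in [("blanket", BLANKET), ("differentiated", DIFFERENTIATED)]:
    report = access_report(policy)
    citable = [bot for bot in CITATION_BOTS if report[bot]]
    print(name, "citation bots allowed:", citable)
# blanket citation bots allowed: []
# differentiated citation bots allowed: ['OAI-SearchBot', 'Claude-SearchBot', 'PerplexityBot']
```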

The other observation worth flagging: the AEO and GEO corpus rarely distinguishes the two crawler classes. The Digital Marketing Institute is the only mainstream comparison guide we found that names GPTBot and ClaudeBot inside the discussion, and it covers them in a single paragraph. The split between training-time and citation-time crawlers is, for now, a substrate-side observation that the editorial-side coverage has yet to absorb.

What to do first: substrate before strategy

Five fixes from the audit lift any site by roughly forty SARI points in one sprint; the per-fix estimates below sum to 38 (10 + 8 + 10 + 5 + 5). Run them before you touch a single piece of AEO or GEO copy.

- 01 Add llms.txt at the domain root

About ten SARI points. Half an afternoon of work. The file declares the URLs you want AI agents to surface and the canonical descriptions the engines should use.
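A minimal sketch of generating the file, assuming the layout of the public llms.txt proposal: an H1 with the site name, a blockquote summary, then H2 sections of Markdown links, each with a short description. The site name, URLs, and the build_llms_txt helper are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    description: str  # the canonical description you want engines to reuse


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Page]]) -> str:
    """Render an llms.txt body: H1 name, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}"]
    for heading, pages in sections.items():
        lines += ["", f"## {heading}", ""]
        lines += [f"- [{p.title}]({p.url}): {p.description}" for p in pages]
    return "\n".join(lines) + "\n"


body = build_llms_txt(
    "Example Publisher",
    "Guides on AI search visibility and agent legibility.",
    {
        "Guides": [
            Page("AEO vs GEO", "https://example.com/aeo-vs-geo",
                 "Definitions and the substrate-first checklist"),
        ]
    },
)
print(body)
```

Serve the output as plain text at /llms.txt on the domain root. Keeping the page list in code, rather than hand-editing the file, makes it easier to regenerate whenever the set of canonical URLs changes.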

- 02 Differentiate training crawlers from citation crawlers in robots.txt

Around eight points. Decide once whether you want corpus inclusion, citation visibility, both, or neither, then encode that decision per user-agent.
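One way to encode that decision is to generate the robots.txt groups from two yes/no choices rather than editing rules by hand. A minimal sketch, assuming the non-exhaustive bot lists below; the robots_for_policy helper is hypothetical, and Googlebot is deliberately left to your existing rules because it serves classic search as well as AI Overviews.

```python
# Non-exhaustive bot lists, grouped by the class each one serves.
TRAINING_BOTS = [
    "GPTBot", "ClaudeBot", "CCBot",
    "Google-Extended",    # robots.txt control token for Gemini, not a crawler
    "Applebot-Extended",  # robots.txt control token for Apple Intelligence training
]
CITATION_BOTS = ["OAI-SearchBot", "Claude-SearchBot", "PerplexityBot"]


def robots_for_policy(allow_training: bool, allow_citation: bool) -> str:
    """Emit one robots.txt group per crawler class from two yes/no decisions."""
    groups = []
    for bots, allowed in ((TRAINING_BOTS, allow_training), (CITATION_BOTS, allow_citation)):
        lines = [f"User-agent: {bot}" for bot in bots]
        lines.append("Allow: /" if allowed else "Disallow: /")
        groups.append("\n".join(lines))
    # A blank line separates the two groups.
    return "\n\n".join(groups) + "\n"


# Opt out of training, stay citable.
print(robots_for_policy(allow_training=False, allow_citation=True))
```

The four combinations of the two flags map directly onto the four choices above: corpus inclusion, citation visibility, both, or neither.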

- 03 Ship valid Article and FAQPage JSON-LD on every page that should be cited

Roughly ten points. Schema is what answer engines extract when they render a citation card.
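A minimal sketch of emitting both types with Python's json module. The property names (headline, author, datePublished, mainEntityOfPage, mainEntity, acceptedAnswer) are standard schema.org vocabulary; the helper names and page values are hypothetical, and the output should still be checked in a validator such as the Schema Markup Validator or Google's Rich Results Test.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> dict:
    """schema.org Article with core properties a citation card can draw on."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage: one Question with an acceptedAnswer per pair."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script element for the page head."""
    # "<\/" is a valid JSON escape for "</", so text containing "</script>"
    # cannot close the element early.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


faq = faq_jsonld([("What is AEO?", "The practice of being selected as the cited answer.")])
print(script_tag(faq))
```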

- 04 Add a /.well-known/ directory with declarative metadata

Around five points. Most platforms skip this; the presence of the directory signals that the site is configured for agent access. Skip if you publish through a hosted CMS that handles this for you.

- 05 Test the result against the audit checklist

About five points if anything turns up that needs fixing. Repeat the test quarterly because pricing tiers, plan limits, and bot identities shift.
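Parts of the test step can be automated. A minimal offline sketch, assuming you have already fetched /llms.txt, /robots.txt, and one page's HTML as strings (fetching is left out). The check names and pass criteria are our own simplifications for illustration, not the SARI scoring rubric, and they cover fixes 01 to 03 only.

```python
import json
from html.parser import HTMLParser
from typing import Optional
from urllib.robotparser import RobotFileParser

# Citation crawlers from the taxonomy above (a subset; extend as vendors add bots).
CITATION_BOTS = ["OAI-SearchBot", "Claude-SearchBot", "PerplexityBot"]


class JsonLdCollector(HTMLParser):
    """Parse every <script type="application/ld+json"> block in a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is treated as absent


def schema_types(blocks: list) -> set:
    """Collect @type values, flattening top-level arrays and @graph containers."""
    found, stack = set(), list(blocks)
    while stack:
        item = stack.pop()
        if isinstance(item, list):
            stack.extend(item)
        elif isinstance(item, dict):
            kind = item.get("@type")
            if isinstance(kind, str):
                found.add(kind)
            elif isinstance(kind, list):
                found.update(k for k in kind if isinstance(k, str))
            if "@graph" in item:
                stack.append(item["@graph"])
    return found


def run_checklist(llms_txt: Optional[str], robots_txt: str, html: str, url: str) -> dict:
    """Offline pass/fail checks for fixes 01 to 03."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    types = schema_types(collector.blocks)
    return {
        # The llms.txt proposal opens the file with an H1 heading.
        "llms_txt_present": bool(llms_txt and llms_txt.lstrip().startswith("# ")),
        "citation_bots_allowed": all(robots.can_fetch(b, url) for b in CITATION_BOTS),
        "article_schema": "Article" in types,
        "faq_schema": "FAQPage" in types,
    }
```

Each failing key points back to the fix it belongs to, which keeps the quarterly re-test cheap to run.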

After all five, AEO and GEO copywriting starts paying back. Before all five, every editorial improvement compounds against zero on the legibility side.

Case study: 1,400 AI citations in 28 days

When Automation Switch earned 1,400 AI citations across a 28-day window in early 2026, the source was substrate, not copy: schema markup, an explicit llms.txt at the domain root, and an MCP (Model Context Protocol) server that lets agents query the site directly. The data and the methodology are in "1,400 AI Citations in 28 Days."

That case study supports this article's argument because the editorial layer of those articles was the same shape as anyone else's; the legibility prerequisite underneath them was the differentiator. AEO and GEO advice works on sites the agent can read. It falls flat on sites where the agent stays locked out.

What to do now

The promise of this piece was to close the gap left by the AEO and GEO corpus. Eleven of twelve dedicated guides told you the answer-engine and generative-engine playbook. One named the prerequisite. This article filled that gap.

The deliverable landed in four parts. The definitions: AEO is the answer-retrieval layer, GEO is the broader discipline that covers training-time corpus inclusion, retrieval-time citation, and synthesis behaviour. The actual difference between them: AEO sits inside GEO, scoped to the live citation moment. The substrate-first checklist: five fixes from the SARI audit that lift any site by roughly forty points in one sprint. The proof: 1,400 AI citations in 28 days from substrate, not copy.

Substrate first. Editorial second. AEO and GEO advice compounds on sites the agent can read; on sites where the agent stays locked out, every editorial improvement compounds against zero.

Run your site through the SARI Publisher Audit before you spend another quarter on AEO and GEO copywriting. The score tells you what to fix first. The five fixes lift the score by roughly forty points in one sprint. Then the answer-shaped content actually starts surfacing inside the AI engines you wrote it for.