Most publisher sites are invisible to AI assistants. The content is fine. The site fails the basic agent legibility tests an assistant runs before it cites a source.

We audited 103 publisher sites against ten signals across five categories. Every signal is detectable in code. Every signal is fixable in an afternoon. We called the rubric the Scaletific Agent-Readability Index (SARI), published the full dataset, and ran our own site through it before publishing.

The headline result will surprise you. The detail will tell you which five changes lift any site by roughly forty points in a single sprint.

Most are running on default settings. The default is invisible.

Why we audited publishers

We chose publishers because publishing is one of the niches Automation Switch works in, and because every operator tradeoff in the agent-readability space surfaces faster on a publisher's site than anywhere else. New article schema. New AI crawler routing in robots.txt. New questions about whether to gate paywalled content from training crawlers. The signal is loud, the surface is owned, and the change cycles are weekly.

The 103 sites span fifteen cohorts. Top-tier news (twenty), tech press (fifteen), business and finance (ten), reviews and service journalism (ten), indie longform (nine), culture and entertainment (six), and seven vertical pubs covering science, marketing, health, food, travel, sports, and trade. We also included three publishing platforms (Substack, Medium, Ghost) and two newsletter hybrids, because the platform you publish on increasingly determines what an agent can read about you. The full list with cohort tags ships alongside this article as a CSV.

Citations from language models are now as important as backlinks and Google search rankings. They feed the answers users see when they ask an assistant a question, and they feed the citations search engines surface in their AI Overviews. Operators who treat citation visibility as a separate game from SEO are leaving the most defensible distribution channel of the next decade on the table.

Practitioners and operators can use this research to plumb their own sites in preparation for when AI agents become the go-to surface for search queries. The five fixes in this article work whether you run a publication, a Shopify store, a SaaS marketing site, or a local services brand. Subsequent waves will publish vertical-specific rubrics; this one is the publisher baseline.

AI crawlers, in plain terms

Before the audit data, a primer. The taxonomy of AI crawlers is changing fast and is widely misreported. Three confusions in particular are costing publishers money and visibility:

- Blocking GPTBot is treated as if it removes the site from ChatGPT. It does not.

- Blocking Googlebot is treated as if it removes the site from AI Overviews. It does not.

- "Claude-Web" is treated as if it is Anthropic's current bot. It is not.

We will get to all three. First, the taxonomy.

Two jobs, sometimes split into three roles

Every AI crawler does one of two jobs.

A training crawler fetches your content to add to the dataset that trains a future model. Your content shapes the model's weights. At inference time, the model never returns to your page. You do not appear in the answer. You receive no referral traffic. The transaction is one-way.

A citation crawler (also called a retrieval, search, or live-fetch crawler) fetches your content at the moment a user asks the assistant a question. The assistant quotes you, names you, and links to you in the answer. The transaction is two-way. You give the answer; you receive the credit and the click.

A third role sits inside citation: the user-triggered fetcher. This bot only crawls when an individual user explicitly asks the assistant to read a specific URL. Operators document these separately because they behave differently from autonomous crawlers. Some explicitly state they do not honour robots.txt (since the user, not the operator, initiated the fetch).

The user-agent map

| Criteria | Operator | Type | Function |

|---|---|---|---|

| GPTBot | OpenAI | Training | Builds dataset for future GPT models |

| OAI-SearchBot | OpenAI | Citation | Surfaces sites in ChatGPT search answers |

| ChatGPT-User | OpenAI | User-triggered | Live fetch when a ChatGPT user asks for a URL |

| OAI-AdsBot | OpenAI | Ad validation | Validates ad landing pages submitted to ChatGPT |

| ClaudeBot | Anthropic | Training | Builds dataset for Claude models |

| Claude-User | Anthropic | User-triggered | Live fetch when a Claude user asks a question |

| Claude-SearchBot | Anthropic | Citation | Improves Claude's search result quality |

| PerplexityBot | Perplexity | Citation | Surfaces sites in Perplexity answers |

| Perplexity-User | Perplexity | User-triggered | Live fetch on user request |

| Googlebot | Search index | Powers Google Search; output feeds AI Overviews | |

| Google-Extended | Training | Opts the site out of Gemini training and grounding | |

| Google-CloudVertexBot | Site-owner-requested | Crawls for site-owner-built Vertex AI Agents | |

| Applebot | Apple | Search + AI | Powers Spotlight, Siri, Safari; data may train AI |

| Applebot-Extended | Apple | Training opt-out signal | Metadata-only; does not crawl |

| CCBot | Common Crawl | Open data | Open repository many AI labs use as training input |

Every row above is sourced from the operator's own documentation. The full list with verbatim quotes and primary-source URLs is published alongside this article.

Three traps publishers fall into

Googlebot is the search-index crawler. Blocking it removes the site from Google Search entirely. AI Overviews are downstream of Search, so they go too, but so does every organic Google referral. Almost no publisher actually wants this. The opt-out for Gemini training and grounding is Google-Extended. Blocking Google-Extended does not affect Google Search. Google's documentation says so explicitly: "Google-Extended does not impact a site's inclusion in Google Search nor is it used as a ranking signal in Google Search."

GPTBot is the training crawler. Blocking it keeps the site out of OpenAI's training data. It does not affect ChatGPT's ability to cite the site at query time. That role belongs to OAI-SearchBot. The publisher who blocks GPTBot and allows OAI-SearchBot is taking the most common sophisticated position: keep my work out of training, but let ChatGPT cite me when a user asks a relevant question. Both rules are independent and both are documented.

Anthropic no longer documents a Claude-Web user-agent. The current bots are ClaudeBot (training), Claude-User (user-triggered fetch), and Claude-SearchBot (citation). A robots.txt rule that targets Claude-Web accomplishes nothing.

The compliance backdrop

The EU AI Act sets relevant deadlines for publishers and operators alike. The first wave of obligations on providers of general-purpose AI models came into force on 2 August 2025. The next milestone, 2 August 2026, brings Article 50 transparency rules, the European Commission's enforcement powers, and the obligations applicable to high-risk AI systems.

For publishers, the practical implication is that operators must increasingly justify the data they trained on. An explicit robots.txt directive, dated and timestamped, is the artefact a publisher can point to if a future dispute arises.

How we measured

Before the rubric, the credibility check. We built it because we live it. AutomationSwitch itself is cited 3,400 times across Microsoft Copilots and partners in the last 30 days, with an average of 5 cited pages per query. The screenshot below is from Bing Webmaster Tools / AI Performance, taken on the day this article published.

That is what passing the rubric looks like in practice. The five categories below are the tests we apply to ourselves before we apply them to anyone else.

JSON-LD on every article is generated from the document, not handwritten. Article, FAQPage, ItemList, and BreadcrumbList all emit on render. The sitemap is a dynamic Next.js route that picks up new articles within an hour of publish, fed by a hygiene test that fails the PR if it regresses. llms.txt is generated from a script that reads our CMS, and we run it after content merges. The next guardrails on our list: JSON-LD validity gating, llms.txt freshness in CI, and an end-to-end SARI baseline check that fails the build if our own site drops below the rubric. We will retest the entire site in 90 days and publish what changed.

The Scaletific Agent-Readability Index is deterministic. Every signal we score is binary or near-binary, and every signal is detectable in code. Two auditors running the same script on the same site produce the same score. No human judgement enters the rubric. The full methodology and the audit script are published alongside this article.

The 100-point score is the unweighted sum of five categories.

| Criteria | Max | What an agent gains |

|---|---|---|

| Discovery | 25 | Can find the site's content map without crawling blindly |

| Article Structure | 30 | Can parse a single article and extract intent, author, and timing |

| Identity & Attribution | 20 | Can attribute claims back to a verifiable author and publisher |

| Content Addressability | 15 | Can cite a specific span, not just the page |

| AI Bot Policy Clarity | 10 | Receives a declared, differentiated position on AI access |

Within each category, signals are listed below in order of point weight (most important first). For the underlying detection logic, see the published methodology.

Discovery (25 points)

- /llms.txt at the root (10 points): a declarative file that points agents at the URLs you want surfaced.

- /sitemap.xml at the root (5 points): the universal "here is everything" file.

- robots.txt addresses AI crawlers explicitly (5 points): a clear position, allow or block, beats silence.

- /.well-known/mcp.json or equivalent MCP discovery endpoint (5 points): lets agents reach an MCP interface to your content.

Article Structure (30 points)

- JSON-LD block in head (8 points).

- @type is Article, NewsArticle, BlogPosting, or Report (5 points).

- author is a structured Person object (5 points).

- datePublished and dateModified both present (5 points).

- publisher is an Organization with logo (4 points).

- headline in JSON-LD matches the page title (3 points).

Identity & Attribution (20 points)

- Author has a dedicated on-domain profile URL (5 points).

- Author profile page carries Person markup (5 points).

- Publisher Organization has a sameAs array with at least 2 entries (5 points).

- Article canonical URL matches the displayed URL (5 points).

Content Addressability (15 points)

- Stable URL pattern, no session params or UTM in canonical (5 points).

- H2 and H3 headings carry id attributes for anchor links (5 points).

- speakable schema or single identifiable main content block (5 points).

AI Bot Policy Clarity (10 points)

We score this category for differentiated treatment, not just any directive.

- Explicit directive on at least one training crawler (3 points).

- Explicit directive on at least one citation crawler (3 points).

- Differentiated treatment between training and citation (4 points): non-identical rules across the two classes.

A site that blocks every AI bot identically scores 6 of 10 here. A site silent on all of them scores 0. A site that blocks training and allows citation scores the full 10.

The aggregate findings

Median is 57. Range is 15 to 81. The publisher web is, on average, below half its agent-readability potential.

Discovery is broken at the front door

Of the 103 audited publishers:

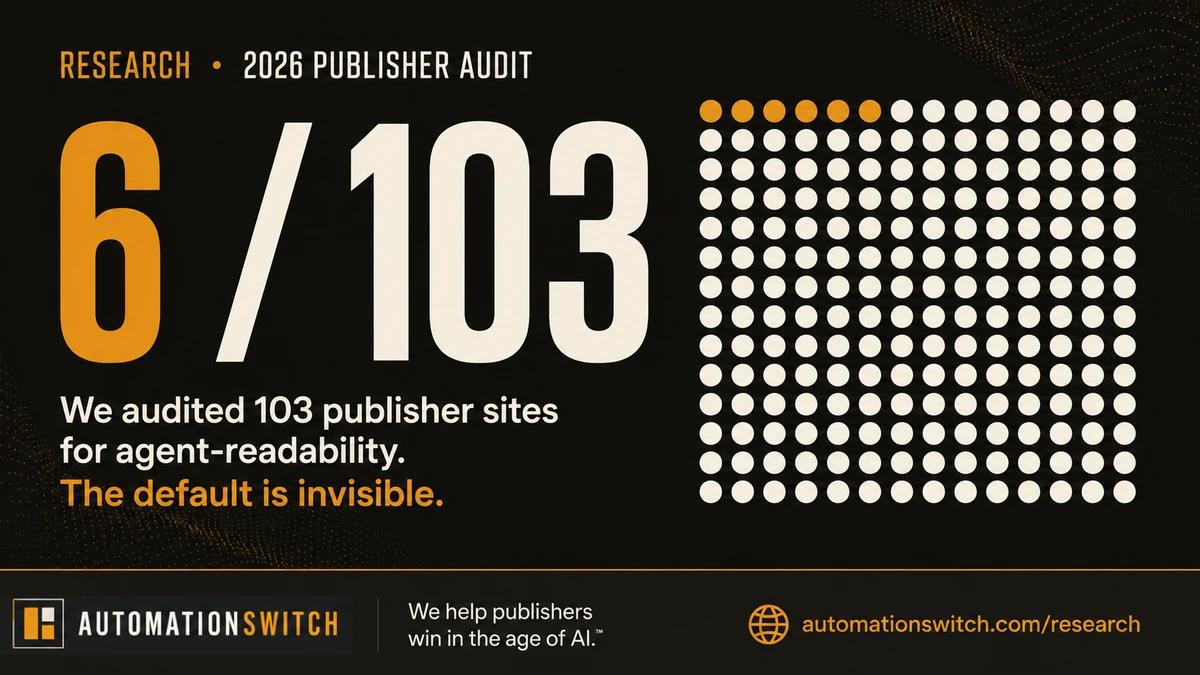

- 6 (5.8%) have an llms.txt file.

- 5 (4.9%) have an MCP well-known endpoint.

- 100 (97.1%) have a discoverable sitemap.

- 75 (72.8%) declare any AI bot directive in robots.txt.

The sitemap is universal. Everything else is rare. Two files (llms.txt and the MCP well-known endpoint) cost an afternoon each to build, and neither is present on more than a handful of sites.

The big finding: AI policy is performative, not differentiated

The other 67 apply blanket "block everything" or "allow everything" rules. They are turning away citation traffic alongside the training takeaway, or accepting both indiscriminately.

This is the most important finding in the dataset. Seventy-three percent of the audited cohort has at least one AI bot directive. But fewer than one in nine of those directives meaningfully differentiate training crawlers from citation crawlers. Publishers are turning away citation traffic without intending to, because the same rule that blocks GPTBot also blocks OAI-SearchBot.

The most-blocked AI crawler is CCBot (Common Crawl), with 64 publishers disallowing and only 4 allowing. The next four most-blocked are ClaudeBot, Google-Extended, Applebot-Extended, and PerplexityBot. Notably, OAI-SearchBot is the rarest crawler in the dataset to receive a directive at all (only 25 publishers, 24%). Most have not bothered to address citation crawlers specifically. Of those 25 directives, 6 are Allow.

Nine publishers explicitly Allow GPTBot. The cluster lines up with publishers that have direct content licensing agreements with OpenAI: training is paid for, so the door is open by contract. The rest disallow.

Article structure is uneven

| Criteria | Adoption |

|---|---|

| JSON-LD present in <head> | 69.3% |

| author is a structured Person object | 59.3% |

| Publisher Organization with logo | 41.9% |

| Publisher sameAs array (≥ 2 entries) | 8.9% |

| Article canonical URL matches displayed URL | 88.9% |

| Headline in JSON-LD matches page title | 53.3% |

| Identifiable main content block | 89.6% |

| H2/H3 anchors at 50%+ of headings | 11.1% |

Three numbers stand out:

- 31% of articles have no JSON-LD at all. The agent has nothing to parse but unstructured prose.

- Heading anchors are the rarest signal. Eighty-nine percent of articles cannot be cited at the span level. The agent's best citation is the page URL.

- Publisher identity is barely linked. Only 9% of publishers connect their Organization to a Wikipedia entry, LinkedIn page, or other entity record via sameAs. Identity attribution stalls at the domain.

Findings by cohort

| Criteria | n | Median | Min | Max |

|---|---|---|---|---|

| culture-entertainment | 5 | 69.0 | 60.0 | 81.0 |

| vertical-travel | 3 | 67.0 | 61.0 | 69.0 |

| industry-trade | 2 | 62.5 | 62.0 | 63.0 |

| vertical-sports | 1 | 62.0 | 62.0 | 62.0 |

| top-tier-news | 19 | 60.5 | 20.0 | 73.0 |

| tech | 14 | 60.5 | 28.0 | 75.0 |

| business-finance | 9 | 57.3 | 15.0 | 75.0 |

| vertical-marketing | 4 | 47.5 | 37.7 | 71.0 |

| newsletter-hybrid | 2 | 44.9 | 18.7 | 71.0 |

| reviews-service | 9 | 40.0 | 15.0 | 79.7 |

| vertical-food | 5 | 39.3 | 20.0 | 74.0 |

| vertical-health | 4 | 37.6 | 20.0 | 61.0 |

| vertical-science | 5 | 32.7 | 15.0 | 67.0 |

| indie-longform | 8 | 30.5 | 18.0 | 68.3 |

| platform | 3 | 20.0 | 16.7 | 29.7 |

Three cohort-level patterns are worth naming:

Culture and entertainment publishers lead. Polygon, Eater, Vulture, and Variety all benefit from Vox Media's modern publishing stack and editorial schema discipline. The cohort median of 69 is the highest in the dataset.

Publishing platforms score worst. Substack, Medium, and Ghost form the bottom cohort with a median of 20. The platform itself constrains what individual publishers can configure. A publisher writing on Substack inherits Substack's agent-legibility profile, full stop. This is a structural finding: when you do not own the surface, you do not own the score.

Science and indie-longform underperform their reputations. Quanta Magazine (20), Smithsonian Magazine (15), and Mayo Clinic (20) score in the bottom decile despite being institutional brands with substantial editorial investment. The shortfall is technical, not editorial: thin or absent JSON-LD, no llms.txt, no AI bot directives.

Top 10: highest SARI scores

| Criteria | Score | Cohort |

|---|---|---|

| 01. Polygon | 81.0 | culture-entertainment |

| 02. Pocket-lint | 79.7 | reviews-service |

| 03. Seeking Alpha | 75.0 | business-finance |

| 04. The Verge | 75.0 | tech |

| 05. Eater | 74.0 | vertical-food |

| 06. Bloomberg | 73.0 | top-tier-news |

| 07. Vox | 73.0 | top-tier-news |

| 08. Marketing Brew | 71.0 | vertical-marketing |

| 09. Morning Brew | 71.0 | newsletter-hybrid |

| 10. ZDNet | 71.0 | tech |

Five of the top 10 are Vox Media properties (Polygon, The Verge, Eater, Vox, plus Vox-stack-influenced sister brands). Two are Morning Brew network properties (Marketing Brew, Morning Brew). Both publishing groups have made a deliberate technical investment in Article schema and robots.txt clarity. The top-of-leaderboard pattern is publishing-group quality, not category quality.

Bottom 10: lowest SARI scores

| Criteria | Score | Cohort |

|---|---|---|

| 94. Smithsonian Magazine | 15.0 | vertical-science |

| 95. Rtings | 15.0 | reviews-service |

| 96. Quartz | 15.0 | business-finance |

| 97. Substack | 16.7 | platform |

| 98. Longreads | 18.0 | indie-longform |

| 99. The Hustle | 18.7 | newsletter-hybrid |

| 100. Wall Street Journal | 20.0 | top-tier-news |

| 101. Quanta Magazine | 20.0 | vertical-science |

| 102. The Pudding | 20.0 | indie-longform |

| 103. Mayo Clinic | 20.0 | vertical-health |

The bottom 10 mixes platform-constrained publishers (Substack), aggressively paywalled publishers (Wall Street Journal), institutional brands underinvested in technical metadata (Smithsonian, Mayo Clinic), and design-led indie publications where the rendering choices crowd out structured data (The Pudding).

See the full leaderboard for all 103 publishers in the SARI Visual Audit infographic. Or open the dataset directly to filter and sort: sari-publisher-audit-dataset.csv.

Patterns that distinguish high scorers

The publishers in the top decile share four habits.

- Deliberate JSON-LD on every article. Not just the presence of a script tag. Schema-conformant @type, structured Person author, dual dates, publisher Organization with logo. The high scorers treat JSON-LD as an editorial responsibility, not a developer afterthought.

- llms.txt at the root. A small file. A clear signal. Six publishers had one. Five of those six are in the top half of the leaderboard. Per-point cost-per-effort, this is the cheapest improvement on the list.

- Stable, parameter-free canonical URLs. No session IDs. No UTM in canonical. The article URL today is the article URL next year. Citations survive, and search engines do not punish duplicate parameter variations.

- A real Person to Organization graph. Author profiles with their own URLs, those URLs returning their own JSON-LD with Person markup, the Organization linked to Wikipedia or LinkedIn via sameAs. The high scorers can be cited by person and brand, not just by domain. Only nine percent of the cohort has reached this baseline.

The publishers in the bottom decile share three failures, in this order: no JSON-LD on articles, no AI bot directives, no anchor-friendly headings. Fixing those three lifts a site from the bottom decile to the median in roughly a sprint of work.

What this means for your site

The job of every operator from now on is to be on the other end of every agent-relevant request. The reader's question arrives, the assistant retrieves and quotes someone, and the only contestable variable is whether you made it possible to be that someone. The five changes below are how you make it possible. They work for publishers, for e-commerce stores, for SaaS marketing sites, and for local services brands. Order is by point recovery.

- Add an llms.txt file. Ten points. One file. Half a day. 94% of the audited cohort is missing this.

- Audit your robots.txt for differentiated AI policy. Up to ten points if you do it deliberately. The fix is a paragraph of explicit Allow and Disallow rules across the bot taxonomy in this article. Only 8 of 103 publishers have this today.

- Make every article carry valid Article JSON-LD with structured author, both dates, and publisher logo. Up to twelve points if you are missing it today. Thirty-one percent of the audited articles have no JSON-LD at all.

- Add id attributes to your H2 and H3 elements. Five points and a real change in how your articles are quoted. Eighty-nine percent of the audited articles fail this.

- Connect your publisher Organization to its sameAs. Five points and a stronger identity graph. Ninety-one percent of the audited cohort has not done this.

Total: roughly forty points, achievable in a single sprint.

We opened with a promise: this article would tell you which changes move your site the most. Here is the answer in one line. llms.txt at the root, plus differentiated training-vs-citation rules in robots.txt, plus structured Article JSON-LD with author, dates, and publisher logo, plus heading anchor IDs, plus publisher sameAs. Forty points. One sprint. Every publisher in the top decile of our dataset has done four of these five.

Why we publish this rubric

At Automation Switch we are placing a bet. Agent legibility will be a primitive for how AI agents and human operators navigate the open web. Specification outlines, technical answers, trivial questions, every one of them flows through agents asking sites to surface what they know. The site that returns the cleanest answer wins the citation, and the citation is increasingly the click.

The article you are reading now is the playbook we are building with. We will retest our own site against this rubric in 90 days and publish what changed. The next research wave (e-commerce) will be the playbook for the same posture in product catalogs. Each subsequent wave widens the surface that agent-readable open-web content covers.

If we are right, the publishers, e-commerce stores, law firms, and SaaS marketing sites that internalise this work over the next twelve months will be the ones whose offers, content, services, and brand sit on the other end of every agent-relevant request. If we are wrong, we will have built a very thorough audit of the open web for nothing. The bet is asymmetric.

Methodology, dataset, and reproducibility

The full SARI methodology, the bot taxonomy with primary-source URLs, the audit script, and the per-site result JSONs are published in the article's companion repository. Three notes for researchers and reviewers:

- The audit weights binary signals over interpretive ones. We do not score "editorial AI-friendliness" or any other subjective dimension. Two auditors get the same score.

- Aggressive anti-scrape posture (paywalled publishers that block commercial scraping infrastructure) produces low-confidence scores in this audit. Of the 103 sites audited, 10 returned low-confidence scores because we could not reliably sample articles:

daringfireball.net,defector.com,espn.com,gizmodo.com,hbr.org,notebookcheck.net,searchengineland.com,theringer.com,time.com,webmd.com. These sites may be highly cited by AI assistants through direct contractual relationships with operators. Our rubric measures the open-web surface; their effective AI citation visibility may be higher than their SARI score implies. - The audited articles were sampled from each publisher's declared sitemaps. Three articles per site, 270 articles total across the 87 high-confidence sites and 6 medium-confidence sites.

Bot taxonomy sources (verified)

OpenAI: developers.openai.com/api/docs/bots

Anthropic: support.claude.com/en/articles/8896518

Perplexity: docs.perplexity.ai/guides/bots

Google: developers.google.com/crawling/docs/crawlers-fetchers/google-common-crawlers

Apple: support.apple.com/en-us/119829

Common Crawl: commoncrawl.org/ccbot

EU AI Act dates: digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai