You picked a framework. You built a prototype. Then production happened.

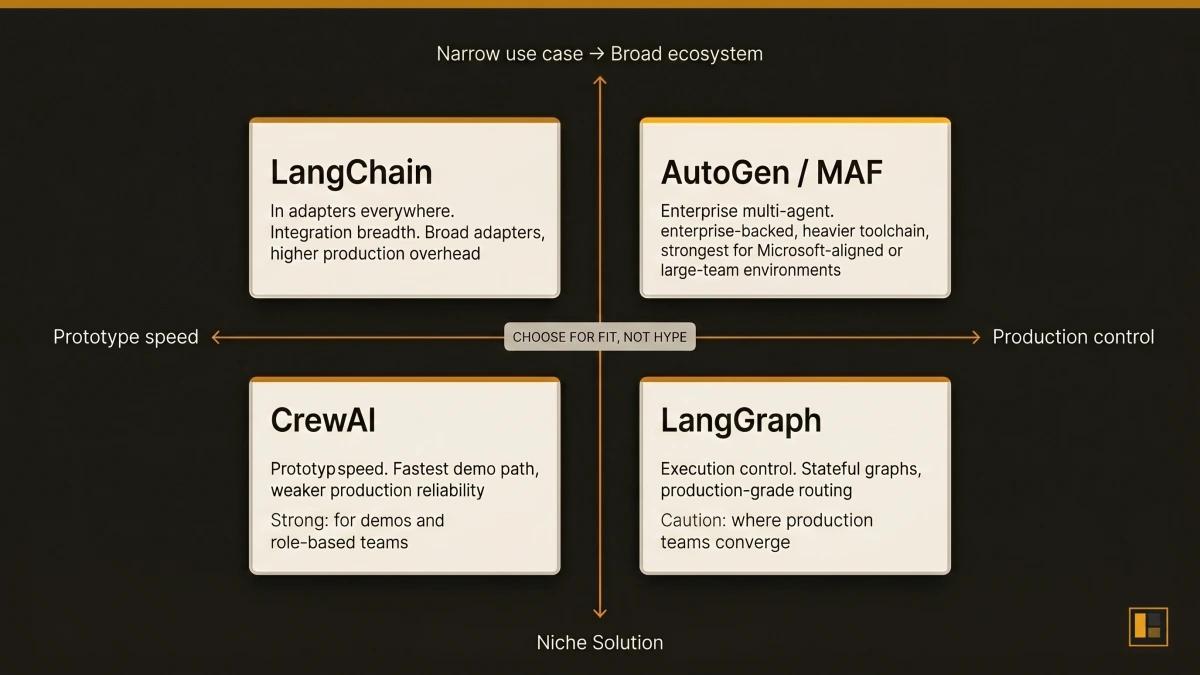

Every team building AI agents faces the same question: which framework deserves the next sprint? The ecosystem has four dominant contenders, and each one solves a fundamentally different problem.

LangChain gives you the widest library of integrations. CrewAI gives you multi-agent orchestration in minutes. AutoGen (now the Microsoft Agent Framework) gives you enterprise-grade infrastructure backed by one of the largest engineering organisations on the planet. LangGraph gives you stateful, deterministic execution control.

Most comparisons stop at feature lists. They show you what each framework can do. What matters more is what each framework costs you in production: the workarounds, the latency traps, the architectural ceilings you hit at scale.

We have run several of these frameworks ourselves. Our cold-email pipeline runs on CrewAI (six agents, real production traffic). Our RAG knowledge base runs on LangGraph, LangChain's stateful execution layer, with LangChain itself supplying the prompt templates and LLM adapters. We have also built structured-output agent prototypes with PydanticAI. This article draws on that firsthand experience, validated against GitHub data, community reports, and documented caveats.

Here is the honest verdict.

LangChain 134,100 stars. CrewAI 48,400 stars. AutoGen 56,800 stars. LangGraph 29,700 stars.

The Decision That Shapes Everything

The most consequential choice goes deeper than the demo. Every framework demos well. CrewAI ships a multi-agent crew in under 50 lines of code. LangChain chains three tools together in a notebook. AutoGen coordinates two agents in a conversation loop.

The question is what happens six weeks later, when you trace a task chain at 2am, or when context fidelity degrades between agents in your crew, or when your LangChain abstraction adds 200ms of overhead to every invocation and you have 40 invocations per request.
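To make the overhead concrete, multiply it out. The sketch below uses the figures from the scenario above (200 ms per invocation, 40 invocations per request) and assumes the calls run strictly one after another; the function name is illustrative.

```python
def added_latency_seconds(overhead_ms: float, invocations: int) -> float:
    """Total framework overhead per request, assuming strictly sequential calls."""
    return overhead_ms * invocations / 1000


# Figures from the scenario above: 200 ms of overhead, 40 invocations per request.
print(added_latency_seconds(200, 40))  # 8.0
```

That is eight seconds of overhead per request before any model time is counted. Running calls in parallel would shorten the wall-clock figure, but only where the steps do not depend on each other.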

The right framework depends on three things: what your agents actually do, how much control you need over execution, and how much production infrastructure you are willing to maintain. Everything else is secondary.

LangChain: The Integration Layer

GitHub: 134,100 stars | 279,000+ dependents | MIT licence

Best for: Teams that need access to the broadest ecosystem of LLM providers, vector stores, and tool integrations.

LangChain's strength is breadth. It supports every major LLM provider (OpenAI, Anthropic, Google, Ollama, and dozens more), every major vector store, and has the largest third-party integration library in the agent ecosystem. If you need to connect an agent to an obscure data source, LangChain almost certainly has a community adapter.

The trade-off is abstraction weight. LangChain wraps LLM calls, prompt templates, output parsers, and tool invocations in layers that add clarity in tutorials and overhead in production. Teams consistently report that debugging requires navigating three or four abstraction layers before reaching the actual API call.

In our RAG pipeline, we use langchain_core for prompt templates and langchain-google-genai for Gemini invocations. The prompt template abstraction (ChatPromptTemplate) genuinely earns its place: it makes prompt versioning clean and testable. We keep our LangChain surface area deliberately small: just the prompts and the LLM adapters. The orchestration layer is LangGraph, and the retrieval layer is custom.

When LangChain Fits

LangChain is the right choice when your primary constraint is integration breadth. If you are connecting agents to multiple LLM providers, switching between models based on cost or latency, or working with a vector store that only has a LangChain adapter, the ecosystem pays for itself.
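Switching models on cost or latency can be as simple as a lookup over the adapters you have configured. The following is a minimal, framework-free sketch: the `ModelOption` type, the `pick_model` helper, the model labels, and all numbers are hypothetical placeholders, not real model IDs, prices, or benchmarks. In a LangChain setup, each entry would map to one chat-model adapter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # placeholder label, not a real model ID
    cost_per_1k_tokens: float  # illustrative number, not real pricing
    p95_latency_ms: int        # illustrative number, not a benchmark


def pick_model(options: list[ModelOption], max_latency_ms: int) -> ModelOption:
    """Return the cheapest option that fits within the latency budget."""
    eligible = [o for o in options if o.p95_latency_ms <= max_latency_ms]
    if not eligible:
        raise ValueError(f"no model meets a {max_latency_ms} ms latency budget")
    return min(eligible, key=lambda o: o.cost_per_1k_tokens)


OPTIONS = [
    ModelOption("batch-cheap", cost_per_1k_tokens=0.1, p95_latency_ms=3000),
    ModelOption("small-fast", cost_per_1k_tokens=0.2, p95_latency_ms=400),
    ModelOption("mid-tier", cost_per_1k_tokens=1.0, p95_latency_ms=900),
]

print(pick_model(OPTIONS, max_latency_ms=1000).name)  # small-fast
print(pick_model(OPTIONS, max_latency_ms=5000).name)  # batch-cheap
```

Keeping the routing rule outside the framework means the adapter layer stays swappable: the rest of the pipeline only sees whichever model the rule returns.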

Where LangChain Meets Its Limits

LangChain reveals its trade-offs when you need fine-grained control over execution. The abstraction layers that make demos clean also add complexity to production debugging. The community has documented this pattern repeatedly. A Reddit thread on r/AI_Agents from early 2026 captured the practitioner consensus: "LangChain for integrations, LangGraph for production."

Strengths:

- Widest integration ecosystem (279,000+ dependents)

- Every major LLM provider supported

- Active community with rapid adapter development

- Partial adoption viable (use just prompts + LLM adapters)

Trade-offs:

- Abstraction layers add debugging complexity

- Orchestration overhead compounds across multi-step chains

- Testing requires mocking through multiple wrapper layers

- Learning curve steepens at production scale

CrewAI: The Prototyping Engine

GitHub: 48,400 stars | 39M+ PyPI downloads | MIT licence

Best for: Teams that need a working multi-agent prototype in hours, with role-based task delegation.

CrewAI's pitch is speed-to-prototype. Define agents with roles, assign tasks, wire them into a crew, and run. A six-agent cold-email pipeline, from business analyst to quality reviewer, ships in under 200 lines of configuration.

We run exactly this setup in production. Our cold-email tool defines six agents: a Business Analyst, an Offer Research Analyst (with Firecrawl integration for scraping sender materials), a Business Portfolio Analyst, a Customer Insight Analyst, a Cold Email Generator, and a Cold Email Quality Reviewer.

The speed is real. Getting from "idea" to "working crew" takes a single afternoon. Production surfaces trade-offs that only appear at scale.

Sequential Execution in Practice

CrewAI's process='sequential' flag promises that tasks execute in order. In practice, the execution model has documented caveats. GitHub Issue #2092 reports tasks failing to execute in sequential mode. Issue #2129 highlights gaps in parallel flow execution support.

In our cold-email pipeline, we built custom solutions for three specific integration points:

- Tool compatibility layers. The Firecrawl tool integration required a custom compatibility wrapper because the built-in CrewAI Firecrawl tool hit library incompatibilities.

- Output format variability. CrewAI supports Pydantic model outputs via output_json. We switched to plain string outputs after serialisation proved inconsistent.

- Token budget management. We built a custom build_prompt_budget_snapshot function that calculates risk levels for context overflow.
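The token budget point is the easiest of the three to illustrate. The production function is not reproduced here; what follows is a hypothetical sketch of what a function like `build_prompt_budget_snapshot` might do. The four-characters-per-token estimate, the risk thresholds, and the `BudgetSnapshot` fields are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BudgetSnapshot:
    estimated_tokens: int
    context_limit: int
    utilisation: float  # estimated_tokens / context_limit
    risk: str           # "low", "medium", or "high"


def build_prompt_budget_snapshot(
    prompt_parts: list[str],
    context_limit: int,
    chars_per_token: float = 4.0,  # rough heuristic (assumption); prefer a real tokenizer
) -> BudgetSnapshot:
    """Estimate token usage for the combined prompt and classify overflow risk."""
    total_chars = sum(len(part) for part in prompt_parts)
    estimated = int(total_chars / chars_per_token)
    utilisation = estimated / context_limit
    # Thresholds are illustrative, not taken from the production pipeline.
    if utilisation >= 0.9:
        risk = "high"
    elif utilisation >= 0.6:
        risk = "medium"
    else:
        risk = "low"
    return BudgetSnapshot(estimated, context_limit, utilisation, risk)


# 4,000 characters is roughly 1,000 tokens under the heuristic above.
snapshot = build_prompt_budget_snapshot(["x" * 3000, "y" * 1000], context_limit=1200)
print(snapshot.estimated_tokens, snapshot.risk)  # 1000 medium
```

A check like this would run before each agent call, so that a "high" reading can trigger trimming or summarisation of upstream output rather than a silent truncation.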

Strengths:

- Fastest prototype-to-demo cycle in the ecosystem

- Role-based mental model matches how teams think about delegation

- 39M+ PyPI downloads with active community

- CrewAI Cloud offers managed execution

Trade-offs:

- Sequential execution carries documented consistency caveats (GitHub #2092)

- Output format validation (output_json) behaves inconsistently

- Tool integrations require compatibility wrappers

- Token management left entirely to the developer
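One way to live with the output_json caveat is to request plain strings and validate them after the fact. The sketch below uses only the standard library. The `EmailDraft` fields and the `parse_email_draft` helper are hypothetical and do not reflect the pipeline's actual schema or code.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class EmailDraft:
    subject: str
    body: str


def parse_email_draft(raw: str) -> EmailDraft:
    """Pull the JSON object out of an agent's string output and validate its fields."""
    # Agents often wrap JSON in chatter, so take the span from the first "{" to the last "}".
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in agent output")
    data = json.loads(raw[start : end + 1])  # json.JSONDecodeError is a ValueError subclass
    for field in ("subject", "body"):
        value = data.get(field)
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"missing or empty field: {field}")
    return EmailDraft(subject=data["subject"].strip(), body=data["body"].strip())


raw_output = 'Here is the draft:\n{"subject": "Quick question", "body": "Hi Sam, ..."}'
print(parse_email_draft(raw_output).subject)  # Quick question
```

Because every failure surfaces as a `ValueError`, the caller can retry the task or route the output to a reviewer from a single except clause.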

AutoGen / Microsoft Agent Framework: The Enterprise Play

GitHub: 56,800 stars | GA April 3, 2026 | CC-BY-4.0 docs

Best for: Enterprise teams that need multi-agent orchestration with Microsoft ecosystem integration, A2A protocol support, and corporate backing.

AutoGen underwent a significant transformation in April 2026. Microsoft shipped the Microsoft Agent Framework (MAF) 1.0, unifying Semantic Kernel and AutoGen into a single SDK for .NET and Python. The original AutoGen repository is now in maintenance mode.

MAF 1.0 ships with MCP support built in: agents can dynamically discover and invoke tools via MCP servers. A2A (Agent-to-Agent) protocol support is announced and imminent, though it was still "coming soon" at the time of the GA release.

Strengths:

- Microsoft backing with enterprise support

- MCP support shipped in 1.0 GA

- .NET and Python parity (rare in this ecosystem)

- Built-in agent communication patterns (pub/sub, event-driven)

Trade-offs:

- Enterprise complexity for small-team use cases

- AutoGen-to-MAF transition introduces naming complexity across docs and community

- A2A protocol support announced but still pending GA

- Heavier toolchain than LangGraph or CrewAI

LangGraph: Where Production Actually Lands

GitHub: 29,700 stars | 37,800+ dependents | MIT licence

Best for: Teams that need stateful, deterministic agent execution with full control over the execution graph.

LangGraph keeps appearing as the production convergence point. Teams start with LangChain for integrations, CrewAI for prototyping, or AutoGen for enterprise requirements. When they hit production scale, many migrate to LangGraph for execution control.

Our RAG pipeline is a direct example. We run a 7-node LangGraph StateGraph that implements iterative query refinement:

1. Rewrite the user's query for better retrieval

2. Search the knowledge base (vector + BM25 + graph traversal)

3. Rerank results by relevance

4. Evaluate whether the results answer the question

5. Refine the query if the answer is insufficient (loop back to step 1)

6. Answer with citations when the evaluation passes

7. Best-effort synthesis when max iterations are reached

The state management pattern uses a TypedDict with Annotated fields to accumulate reasoning traces across iterations. Conditional edges route execution based on evaluation results. Routing is deterministic: given the same state, the graph always takes the same path.
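The state-and-routing pattern can be sketched in plain Python, with no LangGraph import, so the mechanics are visible. The field names, the node labels (`answer`, `refine`, `best_effort`), the `MAX_ITERATIONS` value, and the `apply_update` helper are all assumptions for illustration. In LangGraph itself the framework applies the reducer for you, and a function like `route_after_evaluate` would be registered through `add_conditional_edges`.

```python
import operator
from typing import Annotated, TypedDict, get_type_hints


class RAGState(TypedDict):
    query: str
    iteration: int
    sufficient: bool
    # The Annotated metadata names a reducer: updates to this field are
    # appended to the existing list rather than replacing it.
    trace: Annotated[list[str], operator.add]


MAX_ITERATIONS = 3  # illustrative cap, not the production value


def route_after_evaluate(state: RAGState) -> str:
    """Conditional-edge function: a pure function of state, so routing is repeatable."""
    if state["sufficient"]:
        return "answer"
    if state["iteration"] >= MAX_ITERATIONS:
        return "best_effort"
    return "refine"


def apply_update(state: RAGState, update: dict) -> RAGState:
    """Merge a node's partial update, honouring reducers (stand-in for the framework)."""
    hints = get_type_hints(RAGState, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        metadata = getattr(hints[key], "__metadata__", ())
        merged[key] = metadata[0](state[key], value) if metadata else value
    return merged  # type: ignore[return-value]


state: RAGState = {"query": "q", "iteration": 1, "sufficient": False, "trace": ["rewrite: q"]}
state = apply_update(state, {"iteration": 2, "trace": ["evaluate: insufficient"]})
print(state["trace"])               # ['rewrite: q', 'evaluate: insufficient']
print(route_after_evaluate(state))  # refine
```

The router reads only from state and has no side effects, which is what makes a given path reproducible when you replay a trace. The model calls inside each node can still vary; the determinism applies to the routing.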

For scored reviews of all four frameworks and every major alternative, browse the Agent Frameworks Directory.

The Comparison

| Criteria | LangChain | CrewAI | AutoGen/MAF | LangGraph |
|---|---|---|---|---|
| Primary Strength | Integration breadth | Prototype speed | Enterprise infrastructure | Execution control |
| Stars | 134,100 | 48,400 | 56,800 | 29,700 |
| Licence | MIT | MIT | CC-BY-4.0 (docs) | MIT |
| MCP Support | Yes (adapters) | | Yes (native in 1.0) | Via LangChain |
| Language | Python, JS | Python | Python, .NET | Python, JS |
| State Management | Manual | Framework-managed | Framework-managed | TypedDict + Annotated |
| Execution Model | Sequential chains | Role-based delegation | Multi-agent conversation | Directed graph |
| Production Debugging | Multi-layer navigation | Basic trace tooling | Enterprise logging | Full reasoning trace |
| Time to Prototype | Hours | Minutes | Days | Hours |
| Time to Production | Weeks | Weeks (with workarounds) | Weeks | Days (if you know graphs) |
| Best Audience | Integration-heavy teams | Prototype-first teams | Enterprise / Microsoft shops | Production engineering teams |

The Decision Framework

Choose LangChain if your agents connect to many external systems and you need the broadest adapter ecosystem.

Choose CrewAI if you need a working multi-agent demo this week and your use case fits a role-based delegation model.

Choose AutoGen/MAF if you operate in the Microsoft ecosystem, need .NET support, or require enterprise backing.

Choose LangGraph if you need production-grade execution control, deterministic graph routing, and full observability.

The pattern we see most often: teams start with CrewAI for prototyping, then migrate to LangGraph for production while keeping LangChain adapters for LLM provider flexibility. This is exactly the stack we run.

What We Actually Run

At Scaletific, we build the governance layer and platform infrastructure that engineering teams use when scaling with AI agents. These frameworks power real production workloads across our delivery stack:

Cold-email pipeline (CrewAI): Six agents, sequential delegation, real client traffic. It works, but it required a custom Firecrawl wrapper, manual token budgeting, and post-hoc output validation because output_json proved inconsistent.

RAG knowledge base (LangGraph + LangChain): Seven-node StateGraph with iterative refinement, hybrid retrieval (vector + BM25 + Neo4j graph traversal), and Graphiti temporal memory for cross-session context.

Structured-output prototypes (PydanticAI): Type-safe agent definitions with PydanticAI's dependency injection pattern. The type safety and Logfire observability integration are compelling for teams that prioritise structured outputs and runtime validation.

For the foundational guide to agent architecture, read What Are AI Agents and How Do They Work.

If your shortlist comes down to LangGraph and PydanticAI, see the PydanticAI versus LangGraph deep-dive. For the production patterns either framework has to support once it ships, see AI agents in production patterns that work.