Two frameworks. Two production philosophies. One is built by the team behind Pydantic, Python's most widely used data validation library. The other is built by the team behind LangChain. The right choice depends on what you believe production agents actually need.

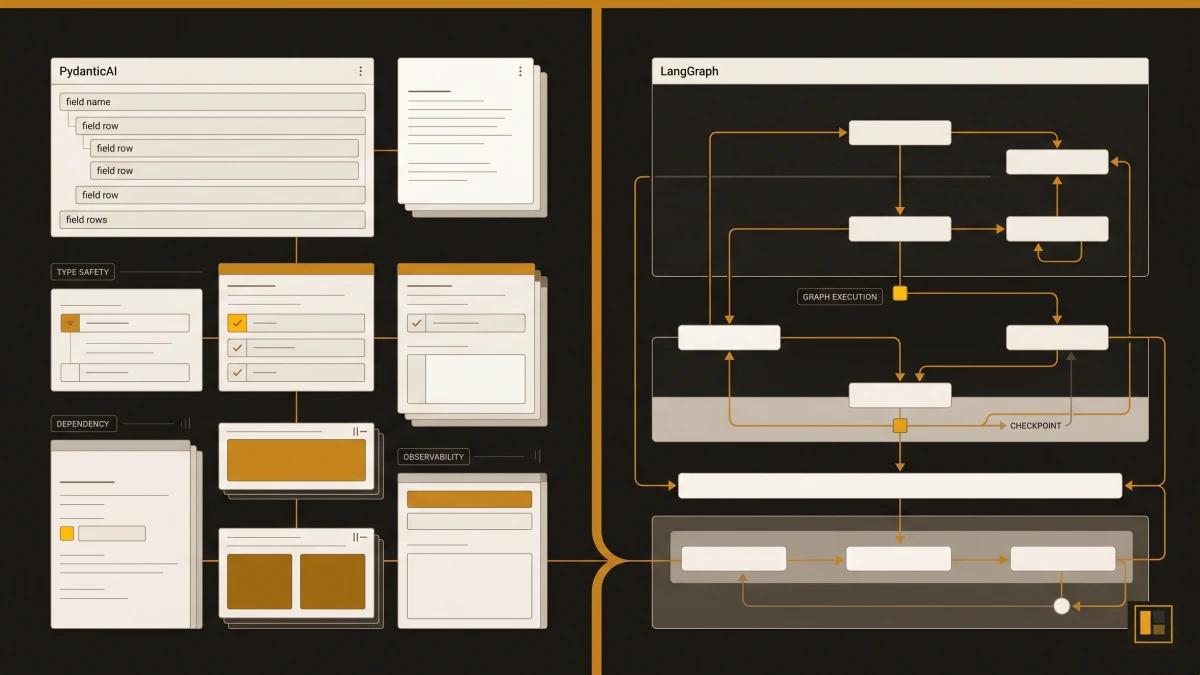

PydanticAI and LangGraph solve the same problem from opposite ends. PydanticAI starts with type safety: every agent input, output, and dependency is validated at runtime through Pydantic's type system. LangGraph starts with execution control: every agent decision is a node in a directed graph with deterministic routing and full state management.

Both frameworks have earned production credibility. PydanticAI hit v1.0 in September 2025 and ships native MCP support, A2A protocol integration, and Logfire observability out of the box. LangGraph has 29,900 GitHub stars, 38,200+ dependents, and has become the default execution layer for teams that outgrow simple chain orchestration.

Most comparisons frame this as a head-to-head contest. That framing misses the point. These are different bets on what makes agents reliable in production: type safety and structured validation (PydanticAI) versus stateful determinism and graph-based execution control (LangGraph). Understanding which bet matches your architecture is the decision that matters.

We have built with both. Our RAG knowledge base runs on LangGraph (7-node StateGraph with iterative refinement). Our structured-output agent prototypes use PydanticAI (type-safe agents with dependency injection). This article draws on that firsthand experience.

PydanticAI: 16,500+ stars, v1.0+ stable. LangGraph: 29,900 stars. Both MIT licensed.

The Pain Point Both Frameworks Address

Every team that has built an agent past the prototype stage has hit the same wall: the agent works in testing and breaks in production. The inputs change shape. The LLM returns unexpected formats. A tool call fails silently. The execution path takes a branch entirely outside your test coverage, and the debugging story is "read the logs and guess."

PydanticAI and LangGraph both exist because this wall is real. They just attack it from different angles.

PydanticAI's answer: make the contracts explicit. If every agent input, output, and dependency is typed and validated, the failure surface shrinks. When something breaks, the validation error names the field that failed and why.

LangGraph's answer: make the execution visible. If every agent decision is a node in a graph with conditional edges, you can trace exactly which path execution took, what state it carried, and where it diverged from expectations.

PydanticAI: The Type Safety Bet

GitHub: 16,500+ stars | MIT licence | v1.0+ (stable since September 2025)

Best for: Python-first teams that prioritise structured outputs, runtime validation, and integrated observability.

PydanticAI brings Pydantic's philosophy to agent development: define the shape of your data, and the framework validates everything at runtime. Agents declare their input types, output types, and dependencies through Python type annotations. The framework enforces these contracts on every invocation.

Structured Outputs as a First-Class Concept

In our medical triage prototype, the agent returns a TriageOutput with three typed fields: response_text (str), escalate (bool), and urgency (int, 1 to 5). The framework validates this shape on every response. If the LLM returns malformed data, validation catches it before it reaches your application code: PydanticAI sends the validation error back to the model as a retry request and raises an error if the retries run out. Compare this to frameworks where output parsing is an afterthought: you build the parser, you handle the exceptions, you write the retry logic.
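A minimal sketch of the TriageOutput contract in plain Pydantic v2. The field names come from our prototype; the 1 to 5 range is expressed with `Field(ge=1, le=5)`. In PydanticAI you would pass the model as the agent's `output_type` and the framework runs this validation for you. The `parse_llm_output` helper below is a hypothetical stand-in for that step so the example runs without an LLM; it is not PydanticAI's API.

```python
from typing import Optional

from pydantic import BaseModel, Field, ValidationError


class TriageOutput(BaseModel):
    """The shape every triage response must have before app code sees it."""

    response_text: str
    escalate: bool
    urgency: int = Field(ge=1, le=5)  # rejected unless 1 <= urgency <= 5


def parse_llm_output(raw: str) -> Optional[TriageOutput]:
    """Hypothetical helper: validate raw JSON from the model, or reject it."""
    try:
        return TriageOutput.model_validate_json(raw)
    except ValidationError as exc:
        # A framework would send these errors back to the model as a retry prompt.
        print(f"rejected: {exc.error_count()} validation error(s)")
        return None


good = parse_llm_output('{"response_text": "Rest and fluids.", "escalate": false, "urgency": 2}')
bad = parse_llm_output('{"response_text": "Seek care now.", "escalate": "maybe", "urgency": 9}')
print(good)
```

The second payload is rejected on two counts, a non-boolean `escalate` and an out-of-range `urgency`, so nothing malformed ever reaches the caller.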

Dependency Injection Built In

PydanticAI agents accept a deps_type parameter that declares the type of their runtime dependencies (database connections, API clients, user context). The actual dependencies are passed in at run time, and tools and system prompt functions read them through RunContext (ctx.deps). In our prototype, the agent receives TriageDependencies containing a patient ID and database connection, and the system prompt dynamically pulls patient context through these injected dependencies. This pattern eliminates global state and makes agents testable by design: swap the dependency for a mock and the agent runs unchanged against test data.
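A framework-free sketch of the dependency-injection pattern, so it runs without PydanticAI installed. `TriageDependencies` mirrors our prototype; `SketchRunContext`, `PatientDB`, and `FakeDB` are hypothetical names for illustration. In PydanticAI itself, `patient_context_prompt` would be registered with the `@agent.system_prompt` decorator and typed as taking `RunContext[TriageDependencies]`.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class PatientDB(Protocol):
    """Anything that can look up a patient summary by ID (hypothetical interface)."""

    def patient_summary(self, patient_id: int) -> str: ...


@dataclass
class TriageDependencies:
    patient_id: int
    db: PatientDB


@dataclass
class SketchRunContext:
    """Stand-in for PydanticAI's RunContext: it only carries the injected deps."""

    deps: TriageDependencies


def patient_context_prompt(ctx: SketchRunContext) -> str:
    # Dynamic system prompt: patient context arrives through the injected deps,
    # never through a global database connection.
    summary = ctx.deps.db.patient_summary(ctx.deps.patient_id)
    return f"You are a triage assistant. Patient context: {summary}"


class FakeDB:
    """In-memory mock that replaces the real database in tests."""

    def __init__(self, rows: Dict[int, str]) -> None:
        self.rows = rows

    def patient_summary(self, patient_id: int) -> str:
        return self.rows.get(patient_id, "no record")


ctx = SketchRunContext(deps=TriageDependencies(patient_id=42, db=FakeDB({42: "asthma, age 34"})))
print(patient_context_prompt(ctx))
```

Because the dependencies are supplied per run rather than imported, the test swap is a one-line change at the call site.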

Logfire Observability

Pydantic's Logfire platform provides AI-native observability built on OpenTelemetry. It traces full conversation flows, tracks token usage and cost, and lets you inspect tool calls in real time. Once Logfire is set up with logfire.configure(), instrumenting PydanticAI is a single call: logfire.instrument_pydantic_ai(). For teams that need production observability, this removes the need to build a custom tracing pipeline.

Native MCP and A2A Support

PydanticAI agents can act as MCP clients (calling external tools via MCP servers), run inside MCP servers (exposing agent capabilities to other systems), and communicate via the A2A protocol through the FastA2A library. These integrations are documented, shipped features, not roadmap items.

Declarative Agent Definition

Agents can be defined in YAML or JSON via Agent.from_file('agent.yaml'), with a built-in capabilities system for web search, thinking, and MCP integration. This enables purely configuration-driven agent management.

When PydanticAI Creates Friction

PydanticAI creates friction when your agent workflow requires complex branching, loops, or stateful multi-step execution. The framework excels at single-agent, request-response patterns with structured outputs. When your agent needs to iteratively refine a query, branch based on intermediate results, or maintain state across multiple processing stages, you start building orchestration logic that the framework leaves to you.
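To make that concrete, here is a framework-free sketch of the loop you end up writing when a PydanticAI agent needs iterative refinement. The `search`, `is_sufficient`, and `refine` callables are hypothetical placeholders for agent calls, and `max_iterations=3` is an assumed limit. The point is that the loop counter, the branch, and the accumulated state all live in your code.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-ins for calls you would make to one or more agents.
SearchFn = Callable[[str], List[str]]
JudgeFn = Callable[[str, List[str]], bool]
RefineFn = Callable[[str, List[str]], str]


def refine_until_answered(
    question: str,
    search: SearchFn,
    is_sufficient: JudgeFn,
    refine: RefineFn,
    max_iterations: int = 3,
) -> Tuple[bool, List[str], List[str]]:
    """Hand-written orchestration: we own the loop, the branch, and the state."""
    query = question
    queries_tried: List[str] = []
    results: List[str] = []
    for _ in range(max_iterations):
        queries_tried.append(query)
        results = search(query)
        if is_sufficient(question, results):
            return True, results, queries_tried  # "answer" branch
        query = refine(query, results)  # "refine" branch
    return False, results, queries_tried  # "fail" branch


corpus = {
    "triage policy": ["urgency scale doc"],
    "triage policy urgency": ["urgency scale doc", "escalation rules"],
}
ok, docs, tried = refine_until_answered(
    "triage policy",
    search=lambda q: corpus.get(q, []),
    is_sufficient=lambda q, r: len(r) >= 2,
    refine=lambda q, r: q + " urgency",
)
print(ok, tried)
```

None of this is hard, but every team writes it slightly differently, and the execution trace exists only if you remember to record it.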

Strengths:

- Type-safe inputs, outputs, and dependencies validated at runtime.

- Logfire observability with zero-config integration.

- Native MCP client and server support (shipped, documented).

- A2A protocol via FastA2A library.

- Declarative YAML/JSON agent definitions.

- Dependency injection makes agents testable by design.

Trade-offs:

- Complex multi-step workflows require external orchestration.

- Branching and looping logic is left to the developer.

- Ecosystem is smaller than LangChain/LangGraph (16.5k vs 29.9k stars).

- Community resources and tutorials are still growing relative to LangGraph.

LangGraph: The Stateful Execution Bet

GitHub: 29,900 stars | 38,200+ dependents | MIT licence

Best for: Teams that need deterministic, stateful agent execution with full control over branching, looping, and conditional routing.

LangGraph models agent workflows as directed graphs. Every processing step is a node. Every decision point is a conditional edge. The framework manages state across the entire execution, persists checkpoints, and provides a complete reasoning trace for every run.

Deterministic Execution Paths

In our RAG pipeline, a 7-node StateGraph handles iterative query refinement. The graph routes through rewrite, search, rerank, evaluate, refine, answer, and fail nodes. A conditional edge after the evaluate node routes to one of three paths: answer (if the results satisfy the question), refine (if iterations remain), or fail (if the maximum number of iterations is reached). Given the same state, the router always takes the same path; the LLM calls inside the nodes can still vary, but the control flow cannot. This determinism is what makes production debugging viable.
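A sketch of the routing function behind that conditional edge. In LangGraph a function like this is registered on the evaluate node with `add_conditional_edges`. Two details are assumptions for the sketch: that the evaluator's verdict is stored in `found_answer`, and that `MAX_ITERATIONS` is 3.

```python
from typing import Literal, TypedDict

MAX_ITERATIONS = 3  # assumed limit; the real value is a tuning choice


class RouteState(TypedDict):
    """Only the fields the router reads (a subset of the full graph state)."""

    iteration: int
    found_answer: bool


def route_after_evaluate(state: RouteState) -> Literal["answer", "refine", "fail"]:
    """Pure function of state: the same state always selects the same edge."""
    if state["found_answer"]:
        return "answer"
    if state["iteration"] < MAX_ITERATIONS:
        return "refine"
    return "fail"


print(route_after_evaluate({"iteration": 1, "found_answer": False}))
```

Because the router reads only state and has no side effects, it can be unit-tested without running the graph, which is a large part of why the determinism claim holds up in debugging.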

State Management as a Core Primitive

Our graph state is a TypedDict with Annotated fields:

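```python
import operator
from typing import Annotated, Any, List, TypedDict

# HybridResult and AgentStep are project-specific types defined elsewhere in
# our codebase; these Any aliases stand in so the snippet runs on its own.
HybridResult = Any
AgentStep = Any


class GraphState(TypedDict):
    question: str                    # original user question
    current_query: str               # query after the latest rewrite/refine
    iteration: int                   # refinement loop counter
    results: List[HybridResult]      # results from the current iteration
    all_results: List[HybridResult]  # results gathered across iterations
    eval_result: dict                # evaluator output for the current results
    found_answer: bool
    answer: str
    # operator.add is the reducer: lists returned by nodes are appended
    # to the existing trace instead of replacing it.
    reasoning_trace: Annotated[List[AgentStep], operator.add]
```

The reasoning_trace field uses operator.add to accumulate steps across iterations. Every node appends its reasoning, and the final result contains the full trace: which query was rewritten to what, which results were returned, why the evaluator accepted or rejected them. When a production query fails, we can inspect every decision the agent made.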
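The merge rule behind that accumulation can be modelled in a few lines. This is a simplified sketch of the behaviour, not LangGraph's internal implementation: a node returns only the keys it changed, keys with a reducer are combined with the existing value, and every other key is overwritten. `REDUCERS` and `apply_update` are hypothetical names.

```python
import operator
from typing import Any, Callable, Dict

# Keys listed here are combined with their reducer; all others are overwritten.
REDUCERS: Dict[str, Callable[[Any, Any], Any]] = {"reasoning_trace": operator.add}


def apply_update(state: Dict[str, Any], update: Dict[str, Any]) -> Dict[str, Any]:
    """Merge one node's partial update into the shared state."""
    merged = dict(state)
    for key, value in update.items():
        reducer = REDUCERS.get(key)
        if reducer is not None and key in merged:
            merged[key] = reducer(merged[key], value)  # e.g. list + list
        else:
            merged[key] = value  # plain overwrite
    return merged


state = {"current_query": "q0", "reasoning_trace": []}
state = apply_update(state, {"current_query": "q1", "reasoning_trace": ["rewrite: q0 -> q1"]})
state = apply_update(state, {"reasoning_trace": ["search: 5 results"]})
print(state)
```

The second update never mentions `current_query`, so it survives untouched, while both trace entries are kept in order.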

Persistence and Recovery

LangGraph supports checkpoint-based persistence (MemorySaver for development, PostgreSQL and Redis for production). State is saved at every super-step, organised into threads. If a node fails, invoking the graph again on the same thread resumes from the last checkpoint, so nodes that already completed are not re-run. This fault tolerance is infrastructure that most teams otherwise build from scratch.
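In LangGraph you get this by compiling the graph with a checkpointer, for example `graph.compile(checkpointer=MemorySaver())`, and invoking it with a `thread_id` under `config["configurable"]`. The sketch below only models the idea with a dict as the checkpoint store; `run_with_checkpoints` and the two toy nodes are hypothetical, and a real super-step can run several nodes in parallel rather than strictly in sequence.

```python
from typing import Any, Callable, Dict, List, Tuple

Node = Tuple[str, Callable[[Dict[str, Any]], Dict[str, Any]]]


def run_with_checkpoints(
    nodes: List[Node],
    state: Dict[str, Any],
    store: Dict[str, Dict[str, Any]],
    thread_id: str,
) -> Dict[str, Any]:
    """Run nodes in order, checkpointing after each; resume if a checkpoint exists."""
    # An existing checkpoint for this thread wins over the `state` argument.
    checkpoint = store.get(thread_id, {"done": [], "state": state})
    done: List[str] = list(checkpoint["done"])
    current = dict(checkpoint["state"])
    for name, fn in nodes:
        if name in done:
            continue  # completed in an earlier attempt; skip on resume
        current = {**current, **fn(current)}
        done.append(name)
        store[thread_id] = {"done": list(done), "state": dict(current)}
    return current


calls = {"search": 0, "rerank": 0}


def search(state: Dict[str, Any]) -> Dict[str, Any]:
    calls["search"] += 1
    return {"results": ["a", "b"]}


def rerank(state: Dict[str, Any]) -> Dict[str, Any]:
    calls["rerank"] += 1
    if calls["rerank"] == 1:  # simulate a transient failure on the first attempt
        raise RuntimeError("reranker timeout")
    return {"results": sorted(state["results"], reverse=True)}


pipeline: List[Node] = [("search", search), ("rerank", rerank)]
checkpoints: Dict[str, Dict[str, Any]] = {}

try:
    run_with_checkpoints(pipeline, {"question": "q"}, checkpoints, thread_id="t1")
except RuntimeError as err:
    print(f"first attempt failed: {err}")

final = run_with_checkpoints(pipeline, {"question": "q"}, checkpoints, thread_id="t1")
print(final["results"], calls)
```

On the second call the search node is skipped because its checkpoint already exists, so only the failed step runs again.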

Streaming Built In

LangGraph streams intermediate results as nodes complete, which enables real-time UX updates. In our RAG pipeline, users see the search phase complete before the evaluation phase starts, which provides feedback on long-running queries.
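The shape of node-level streaming, modelled with a plain generator. In LangGraph you would iterate over `graph.stream(inputs, stream_mode="updates")`, which yields a dict keyed by node name as each node completes. The `stream_updates` function and the two toy nodes below are hypothetical stand-ins that mimic that shape; they are not library code.

```python
from typing import Any, Callable, Dict, Iterator, List, Tuple

Node = Tuple[str, Callable[[Dict[str, Any]], Dict[str, Any]]]


def stream_updates(nodes: List[Node], state: Dict[str, Any]) -> Iterator[Dict[str, Dict[str, Any]]]:
    """Yield {node_name: partial_update} as each node finishes."""
    current = dict(state)
    for name, fn in nodes:
        update = fn(current)
        current.update(update)
        yield {name: update}  # the caller can render this before the next node runs


def search(state: Dict[str, Any]) -> Dict[str, Any]:
    return {"results": ["doc-1", "doc-2"]}


def evaluate(state: Dict[str, Any]) -> Dict[str, Any]:
    return {"found_answer": len(state["results"]) > 1}


chunks = []
for chunk in stream_updates([("search", search), ("evaluate", evaluate)], {"question": "q"}):
    node_name, update = next(iter(chunk.items()))
    print(f"{node_name} finished: {update}")  # e.g. update a progress indicator in the UI
    chunks.append(chunk)
```

Because the generator yields between nodes, the UI can show search results while evaluation is still running.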

When LangGraph Creates Friction

LangGraph creates friction when your agent is simple. For a single-purpose agent that takes an input, calls an LLM, and returns a structured output, graph-based orchestration is pure overhead. The graph abstraction adds conceptual weight (nodes, edges, state schemas, conditional routing) that complex workflows justify and simple ones waste.

Strengths:

- Deterministic graph execution with full reasoning trace.

- TypedDict state management with Annotated field accumulation.

- Checkpoint persistence (MemorySaver, PostgreSQL, Redis).

- Fault-tolerant recovery from node failures.

- Built-in streaming for real-time UX.

- Integrates cleanly with LangChain adapters (use what you need).

Trade-offs:

- Graph abstraction adds overhead for simple agent patterns.

- Steeper learning curve than type-annotation-based frameworks.

- State schema design requires upfront investment.

- Output validation is manual (the framework manages execution, you manage types).

The Comparison

| Criteria | PydanticAI | LangGraph |
|---|---|---|
| Core Philosophy | Type safety and structured validation | Stateful graph execution |
| GitHub Stars | 16,500+ | 29,900 |
| Licence | MIT | MIT |
| Current Version | v1.0+ (stable since Sep 2025) | Continuous release |
| MCP Support | Native (client + server) | Via LangChain adapters |
| A2A Support | Via FastA2A (shipped) | Through external integration |
| Observability | Logfire (built-in, OpenTelemetry) | LangSmith or custom tracing |
| State Management | Dependency injection (RunContext) | TypedDict + Annotated fields |
| Execution Model | Request-response with tools | Directed graph with conditional edges |
| Output Validation | Runtime type enforcement (Pydantic) | Manual (developer responsibility) |
| Persistence | Application-managed | Built-in checkpoints (Postgres, Redis) |
| Streaming | Built-in model output streaming (run_stream) | Built-in node-level streaming |
| Best For | Type-safe single agents, structured outputs | Multi-step workflows, iterative loops |
| Learning Curve | Low (Pydantic familiarity) | Medium-High (graph concepts) |
| Time to First Agent | Minutes | Hours |
| Time to Production Workflow | Days (simple) to Weeks (complex) | Days (if graph-literate) |

The Complementary Pattern

The most interesting insight from our own usage: PydanticAI and LangGraph are increasingly complementary.

A PydanticAI agent is an excellent building block for a LangGraph node. You define your agent with typed inputs, typed outputs, and dependency injection. Then you wire that agent into a LangGraph node that manages the execution flow, branching, and state accumulation around it.

The emerging production pattern: PydanticAI for the agent (type safety, structured outputs, Logfire observability) + LangGraph for the workflow (state management, conditional routing, persistence). Each framework handles what it does best. You validate data with Pydantic and orchestrate execution with graphs.
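A sketch of the seam between the two layers, assuming the agent's raw JSON output is available as a string. In a real build, `run_agent` would be a PydanticAI agent created with `output_type=EvalVerdict` (which performs the validation for you), and the returned function would be registered with `StateGraph.add_node`. Here a plain callable and an explicit `model_validate_json` call stand in so the example runs without either framework. `EvalVerdict`, `make_evaluate_node`, and `fake_agent` are hypothetical names.

```python
from typing import Any, Callable, Dict

from pydantic import BaseModel, Field


class EvalVerdict(BaseModel):
    """Typed contract for the evaluator agent's output (hypothetical schema)."""

    sufficient: bool
    reason: str
    confidence: float = Field(ge=0.0, le=1.0)


def make_evaluate_node(run_agent: Callable[[str], str]) -> Callable[[Dict[str, Any]], Dict[str, Any]]:
    """Wrap a typed agent call as a graph node that returns a partial state update."""

    def evaluate(state: Dict[str, Any]) -> Dict[str, Any]:
        raw = run_agent(f"Question: {state['question']}\nResults: {state['results']}")
        verdict = EvalVerdict.model_validate_json(raw)  # agent layer: validate the output
        return {  # orchestration layer: only the keys this node changes
            "eval_result": verdict.model_dump(),
            "found_answer": verdict.sufficient,
            "reasoning_trace": [f"evaluate: {verdict.reason}"],
        }

    return evaluate


def fake_agent(prompt: str) -> str:
    # Stand-in for an LLM call; returns a fixed, well-formed verdict.
    return '{"sufficient": true, "reason": "two sources agree", "confidence": 0.8}'


evaluate_node = make_evaluate_node(fake_agent)
update = evaluate_node({"question": "What is the escalation threshold?", "results": ["doc-1"]})
print(update)
```

The node never touches routing, and the router never sees unvalidated model output: each layer does one job.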

This is where the "vs" framing breaks down. The real question is which layer of your stack each framework belongs in:

- PydanticAI owns the agent layer: input validation, output typing, dependency injection, observability per agent.

- LangGraph owns the orchestration layer: execution flow, state management, branching logic, fault recovery across agents.

If your system has a single agent doing a single job, PydanticAI is sufficient on its own. If your system has multiple agents coordinating across a multi-step workflow, LangGraph orchestrates while PydanticAI validates. And if you are early in your agent journey, start with PydanticAI for the faster time-to-first-agent, then introduce LangGraph when workflow complexity demands it.

What We Actually Run

RAG knowledge base (LangGraph): Seven-node StateGraph with iterative query refinement. Nodes: rewrite, search, rerank, evaluate, refine, answer, fail. Conditional edges route based on evaluation results. State accumulates reasoning traces via Annotated[List[AgentStep], operator.add]. Hybrid retrieval combines vector similarity (ChromaDB), BM25 (sparse), and graph traversal (Neo4j). Graphiti temporal memory provides cross-session context. LangChain provides prompt templates and LLM adapters. LangGraph provides the execution control.

Structured-output prototype (PydanticAI): Medical triage agent with TriageOutput (response_text, escalate, urgency). Dependency injection via TriageDependencies (patient_id, db connection). Dynamic system prompt that pulls patient context through injected dependencies. Running on Ollama (llama3.2) locally. Type safety catches malformed LLM outputs before they reach application code.

The lesson: LangGraph earns its complexity in multi-step, iterative workflows where you need full execution visibility. PydanticAI earns its simplicity in well-defined agent tasks where type safety and structured outputs are the priority. Both have a place in a production agent stack.

The Decision Framework

Choose PydanticAI if you are a Python-first team that values type safety, you need structured outputs with runtime validation, your agent pattern is primarily request-response with tools, and you want built-in observability via Logfire. PydanticAI is also the right starting point if you are early in your agent journey and want the fastest path to a production-quality single agent.

Choose LangGraph if your workflow involves multiple processing stages with branching and looping, you need deterministic execution with full reasoning traces, you require persistence and fault recovery, and your team is comfortable with graph-based programming models. LangGraph is the right choice when workflow complexity is the primary challenge.

Choose both if you are building a production system where individual agents need type safety (PydanticAI) and the workflow connecting them needs execution control (LangGraph). This complementary pattern is where the ecosystem is heading.

PydanticAI and LangGraph are two answers to the same question. For a wider survey, see our comparison of LangChain, CrewAI, AutoGen, and LangGraph. For the patterns these frameworks have to support once they hit production, see AI agents in production patterns that work.