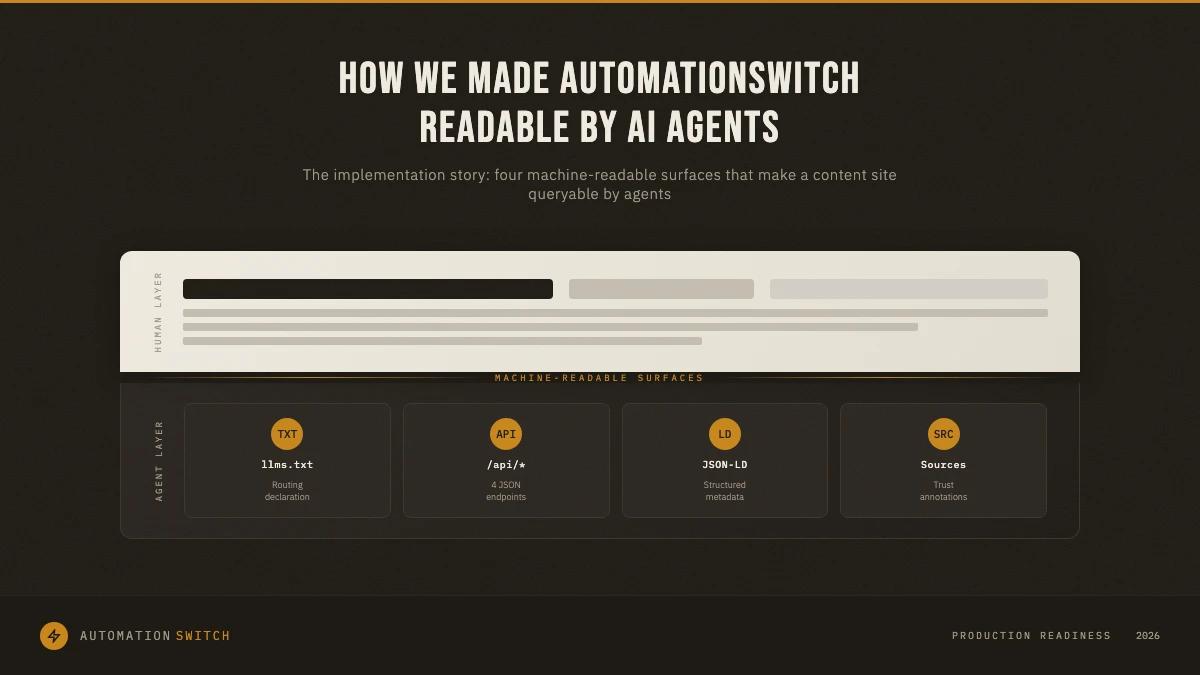

Most writing about the agentic web is theoretical. Analysts predict what will happen when agents browse, select tools, and act on behalf of users. We skipped the predictions and built it. This is the implementation story: what we built on AutomationSwitch to make the site readable, queryable, and useful to AI agents, what each surface does, and what an agent actually receives when it calls our API.

Introduction

AutomationSwitch indexes MCP directories, agent frameworks, and skill libraries for builders working with AI agents and workflow automation. The editorial content is written for humans. But a growing share of the audience arriving at the site is automated: coding agents checking MCP server availability, orchestrators comparing tool capabilities, LLM retrieval pipelines pulling structured data for user queries.

The standard response to this shift is to publish a `robots.txt` or add some JSON-LD and call it done. We went further. Over three weeks in April 2026, we built a full machine-readable discovery layer across four surfaces: a `llms.txt` declaration, four public JSON APIs, structured metadata on every major page, and a source-level trust annotation system that maps each citation to the specific claim it supports.

This article walks through each surface, explains the reasoning behind the architecture, and shows what an agent actually sees when it queries AutomationSwitch programmatically.

What "agent-readable" actually means

A site can be crawlable without being agent-readable. Search engine crawlers index HTML and extract text. Agents need more. They need structured answers to specific questions: what MCP directories exist, which ones are free, which support Claude Desktop, what the editorial verdict is. They need this data in a format they can parse without scraping, and they need trust signals so they can assess source quality before passing the answer to a user.

Agent-readable means three things in practice:

- Declared identity. The agent knows what the site is and what it publishes, before it reads a single page. This is the job of `llms.txt`.

- Structured, queryable data. The agent can retrieve specific records (MCP directories, frameworks, skill sources) via a JSON API, with normalised fields, typing, and freshness timestamps. No HTML parsing required.

- Traceable trust. Every editorial claim, every price point, every benchmark in an article is linked to a verifiable source through a structured annotation that agents can read and evaluate.

We built all three.

- 01Declare identity

Use llms.txt to establish what the site publishes and route agents toward the structured interfaces instead of the HTML listing pages.

- 02Expose queryable records

Publish read-only JSON endpoints for directories, frameworks, skill sources, and audits so agents can retrieve typed records without scraping prose.

- 03Render page semantics

Ship Dataset, ItemList, Review, and CollectionPage JSON-LD so each hub and framework page communicates its meaning even before an API call.

- 04Map claims to sources

Attach structured articleSources entries with supports annotations so every benchmark and pricing statement has a machine-readable trust chain.

Surface 1: llms.txt, the site identity declaration

The `llms.txt` convention (originated at fast.ai, adopted by Anthropic, Vercel, Cloudflare, and others) serves a simple purpose: tell LLMs and agents what your site is and how to use it. It sits at the root of the domain, plain markdown, readable by any HTTP client.

Our `llms.txt` at `automationswitch.com/llms.txt` declares:

- 01What AutomationSwitch is

An editorial intelligence layer for the automation and AI agent economy. The opening paragraph establishes scope and editorial position so agents can assess relevance before making any further requests.

- 02What we publish

Every content surface is listed with its URL: insights organised by pillar, the MCP Directory Hub, the Agent Frameworks Directory, the Skills Hub, tools index, glossary, and self-serve audits.

- 03How to use the site as an agent

Call `/api/mcp-directories`, `/api/agent-frameworks`, `/api/skill-sources`, and `/api/audits` for structured answers. Do not scrape the HTML listing pages.

- 04Data freshness signals

MCP directory counts are refreshed weekly via Firecrawl, article sources are verified at research and publish time, and every collection response includes an `updatedAt` timestamp.

The key design choice: `llms.txt` is a routing document, not a data document. It tells agents where to look, not what the answers are. The answers live in the APIs.

Why llms.txt alone is insufficient

A `llms.txt` file helps agents understand your site at a high level. It does not give them structured, filterable, machine-parseable data. An agent that reads our `llms.txt` knows that AutomationSwitch indexes MCP directories. It still cannot answer the question "which MCP directories are free and support Claude Desktop" without either scraping HTML or calling a structured endpoint.

This is why `llms.txt` was surface one, not the only surface.

Treat llms.txt as the routing layer. Keep the answers in stable JSON endpoints and use the declaration file to send agents to the right interface.

The machine-readable layer covers MCP directories, agent frameworks, skill sources, and audits with collection-level freshness metadata.

Surface 2: Four public JSON APIs

The core of the machine-readable layer is four read-only API endpoints that return the same data powering the site's editorial pages, projected into a clean JSON shape for programmatic consumption.

GET /api/mcp-directories

Returns every editorially scored MCP directory record. Each entry includes:

name: identity field

slug: canonical record slug

url: canonical record URL

directoryType: aggregator | marketplace | registry | curated-list

editorialScore: 1-5 score assigned by the editorial team

serverCount: last verified count of indexed MCP servers

monetization: human-readable pricing string

pricingTier: free | freemium | paid

focus:

- discovery

- install

- hosting

- registry

clientSupport: MCP clients the directory supports

verdict: editorial summaryThe response wraps all records in a `{ count, updatedAt, directories[] }` envelope so agents know how many records exist and when the data was last refreshed.

GET /api/agent-frameworks

Same pattern, applied to agent frameworks. Returns records for LangGraph, LangChain, PydanticAI, CrewAI, AutoGen, LlamaIndex Workflows, Haystack, and Agno. Each record includes language support, MCP compatibility flag, hosting model, editorial score, verdict, strengths, weaknesses, and latest version.

GET /api/skill-sources

Indexes SKILL.md file sources for AI coding agents. Each record includes source type, platform support (Claude Code, Cursor, Copilot, Gemini CLI, Aider, Windsurf), domain focus, skill count, install command, and featured flag.

GET /api/audits

Returns metadata for self-serve audit tools, including scoring dimensions, outputs, persona targets, and an `interest_count` field that reports how many users have registered interest for coming-soon audits. This field acts as a demand signal for agents evaluating which audits have traction.

Shared infrastructure across all four endpoints

Every API route follows the same operational pattern:

- ISR caching. One-hour revalidation with 24-hour stale-while-revalidate. Agents get fast responses; the data stays fresh without hammering Sanity on every request.

- Rate limiting. 60 requests per 60 seconds per IP. The `RateLimit-Policy` header is included in every response. Agents that exceed the limit receive a `429` with a `Retry-After` header.

- Field projection. The Sanity query selects only the fields needed for the API response. Internal fields (draft status, editor notes, Sanity document IDs) are never exposed.

- User-Agent logging. Every request is logged with its `User-Agent` string and execution time for traffic analysis.

The design principle: these endpoints return the editorial layer's data, not a separate dataset. The API and the website are views on the same Sanity content lake. When the editorial team updates a directory score, the API reflects it on the next revalidation cycle.

Surface 3: Structured metadata on every page

JSON-LD structured data on the page level gives search engines and agents another way to discover and interpret what AutomationSwitch publishes, without calling the API.

We implemented four schema types:

SoftwareApplication with `Review` on individual framework pages (`/agentic-ai/frameworks/[slug]`). Each framework review carries a `reviewRating` (1 to 5), `itemReviewed` (the framework as a `SoftwareApplication`), and `reviewBody` (the editorial verdict). Search engines surface this as rich results. Agents extract it as structured trust data.

All JSON-LD is server-rendered during page generation. There is no client-side hydration dependency.

{

"@type": "Dataset",

"targets": ["/mcp", "/skills", "/agentic-ai/frameworks", "/audits"],

"distribution": {

"@type": "DataDownload",

"encodingFormat": "application/json",

"contentUrl": "https://automationswitch.com/api/..."

}

}

{

"@type": "ItemList",

"target": "/skills",

"fields": ["position", "url", "name", "description"]

}

{

"@type": "SoftwareApplication",

"target": "/agentic-ai/frameworks/[slug]",

"withReview": {

"@type": "Review",

"reviewRating": "1-5",

"reviewBody": "editorial verdict"

}

}

{

"@type": "CollectionPage",

"targets": ["/mcp", "/skills", "/agentic-ai/frameworks", "/audits"],

"publisher": "Automation Switch"

}Surface 4: The articleSources trust layer

The most distinctive piece of the implementation is the `articleSources[]` system. Every published article on AutomationSwitch carries a structured source list, rendered as a collapsible panel below the FAQ section and stored in Sanity as an array of typed objects.

Each source entry has five fields:

title: Human-readable source name

url: Canonical URL of the source

publisher: Named publisher (fallback to domain extraction)

sourceType: official | research | tool | community | blog

supports: The specific claim, price, or benchmark this source backsThe `supports` field is the trust signal that matters most to agents. It creates a direct, verifiable link between an editorial claim in the article and the external source that backs it. An agent reading the article's structured data can check: "the article says PulseMCP indexes 11,180 servers. Which source supports this claim?" The `supports` annotation answers that question without the agent needing to parse article prose.

Sources are collected during the research phase (often via Firecrawl automated extraction), verified by the author during drafting, and re-verified at publish time. The `sourceType` taxonomy allows agents to filter by credibility level: `official` documentation carries more weight than a `blog` post.

Why this matters for agent trust

Agents that retrieve information on behalf of users face a fundamental problem: they need to assess whether the source is credible and whether the claims are current. A raw article with no source attribution is a black box. An article with structured, typed, claim-mapped sources gives the agent a verifiable trust chain.

This system positions AutomationSwitch as a higher-trust source than sites that publish editorial content without traceable citations. For agents performing tool selection or comparison tasks, that trust differential compounds: they will prefer sources where they can verify claims over sources where they cannot.

The agent traffic classification layer

Building machine-readable surfaces only matters if you can measure whether agents are actually using them. We implemented agent traffic classification in the Next.js middleware layer before shipping the APIs.

The middleware inspects every inbound request's `User-Agent` string against a pattern list of known AI crawlers: ClaudeBot, GPTBot, PerplexityBot, Cohere-AI, Google-Extended, Anthropic-AI, and others. When a match is found, the middleware sets an `X-AS-Agent: 1` response header and logs the classification. This header lets downstream analytics filter agent traffic from human traffic without relying on third-party bot detection services.

The classification runs on every request except static assets, the Sanity studio, and API routes (which have their own logging). It adds negligible latency because the check is a set of substring matches on a string that's already in memory.

The key insight: instrument before you ship. If we had launched the APIs first and instrumented later, we would have missed the baseline period and had no way to measure the incremental impact of each new surface.

The MCP capability declaration

The final piece is `/.well-known/mcp.json`, a static file that declares AutomationSwitch's MCP server capabilities to any agent or client that checks the well-known path.

The file declares eight tools: `search_directories`, `get_directory`, `compare_directories`, `search_skills`, `get_skill_source`, `search_frameworks`, `get_framework`, and `get_audit`. Each tool is described with its purpose and parameters. The transport is HTTP (stateless JSON-RPC POST to `/api/mcp`).

This convention is still emerging. Few sites publish a `/.well-known/mcp.json`. The early-mover advantage is real: MCP clients that check this path will discover AutomationSwitch as a callable service before competitors recognise the pattern.

| Criteria | Primary job | What the agent gets |

|---|---|---|

| llms.txt | Route the agent to the right surfaces | Site identity, scope, and API pointers |

| Public JSON APIs | Return filterable answers without scraping | Typed records, enums, verdicts, and timestamps |

| JSON-LD on pages | Expose machine-readable page semantics | Dataset, ItemList, Review, and CollectionPage signals |

| articleSources | Map claims to evidence | A typed trust chain with a supports annotation |

| .well-known/mcp.json | Declare callable MCP capabilities | Tool names, transport, and parameter surface |

What we deferred and why

Three capabilities were explicitly deferred from Phase 1:

The full MCP server runtime. The `/.well-known/mcp.json` declares the tools, but the server-side handler that processes MCP JSON-RPC requests is Phase 2 work. The decision was to ship the REST APIs first, prove agent traffic exists, and then build the MCP server as a thin wrapper around the same data layer. Building the server before proving API adoption would have been premature infrastructure.

Intent-aware conversion routing. When an agent refers a user to AutomationSwitch, that user arrives with different intent than someone who found the site via search. An agent-referred user comparing two MCP directories should land on the comparison page, not the generic hub. This routing layer requires analytics data that does not yet exist at sufficient volume.

The verified badge programme. Directories and MCP servers that pass editorial review could receive a `verified: true` flag in the API. This creates a revenue line (verification as a paid service). The decision was to defer this until the API has organic agent traffic, because launching a paid trust programme before the trust layer is widely used would undermine the brand.

All three are scoped in ADR-0002 with clear triggers for when they move from deferred to active.

Lessons: what surprised us

llms.txt is a routing document, not a destination. The first draft of our `llms.txt` tried to include too much inline data. The right design is to declare what you publish and point to where the structured versions live. Keep it concise. Agents that need data will follow the pointers.

Normalised enums save agents from parsing prose. The `pricingTier` field (`free`, `freemium`, `paid`) alongside the human-readable `monetization` string was one of the highest-leverage decisions. Agents filtering by price can use the enum directly instead of parsing "Free / Open source (MIT)" with an LLM call. Every structured field that eliminates a parsing step makes your data more reliable for automated consumption.

The `supports` annotation is the trust moat. Most editorial sites publish sources as a reference list at the bottom of the article. The `supports` field, which maps each source to the specific claim it backs, is what makes the source list useful to agents. Without that mapping, the agent knows you cited a source but cannot determine which claim it supports without re-reading the article.

Instrument before you ship. The agent User-Agent classification in middleware was the first thing we deployed, before any API or structured data surface. This gave us a baseline of agent traffic hitting the HTML pages, so we could measure the incremental lift when the APIs went live.

ISR caching makes public APIs viable without infrastructure cost. Running a public API on a content site sounds operationally expensive. With Next.js ISR (one-hour revalidation, 24-hour stale-while-revalidate), the API serves cached responses for the vast majority of requests. Sanity is only queried once per hour per endpoint. The operational cost is effectively zero above what the site already pays for hosting.

`robots.txt` and `llms.txt` serve different audiences. Our `robots.txt` disallows `/api` paths to prevent search engine crawlers from indexing API responses as web pages. Our `llms.txt` explicitly directs agents to those same `/api` paths. This felt contradictory at first, but the logic is correct: search engines should index the HTML pages (where the editorial content lives), while agents should query the API (where the structured data lives).

Do not treat robots.txt and llms.txt as substitutes. Let search engines index the editorial HTML, keep raw API routes out of the search index, and point agents to those same APIs explicitly through llms.txt.

What comes next

Phase 2 is the AutomationSwitch MCP server: a live runtime that lets coding agents (Claude Code, Cursor, and any MCP-compatible client) query the directory, frameworks, skills, and audit data directly from inside their toolchain, without opening a browser. The eight tools are already defined. The data layer is already built. The server is a thin transport wrapper around the existing API.

Phase 3, once agent traffic reaches measurable volume, is the verified trust layer: a badge programme where directories and MCP servers that pass editorial review receive a machine-readable `verified: true` flag that agents can use as a quality signal.

The broader lesson is that agent readability is infrastructure work, not a marketing initiative. It requires structured data, normalised fields, declared identity, traceable trust, and traffic instrumentation. Sites that treat it as an afterthought (add some JSON-LD, publish a thin `llms.txt`) will be outperformed by sites that treat agents as a first-class audience with specific needs.

We built AutomationSwitch to serve two audiences simultaneously: human builders making automation decisions, and agents acting on their behalf. The implementation described in this article is how we made that operational.

Ready to make your site agent-readable?

The architecture described here is applicable to any content site that publishes structured, editorial data. If you want to evaluate where your site stands and what it would take to make it queryable by agents, book an automation audit. We will assess your current machine-readable surface, identify the gaps, and deliver a prioritised implementation plan.

The agent-readable layer rides on top of the rest of the stack. For the component library that structures every article on the site, see the 13-component library that structures every article. For the deployment platform underneath, see Vercel for infrastructure engineers.