Every AI coding assistant ships with a general-purpose instruction set. It can write code, answer questions, and follow prompts. What it lacks out of the box is domain knowledge: the specific steps, constraints, and quality standards your team has learned through repetition. Skills fill that gap. They are structured instruction files that turn a general-purpose assistant into a specialist for a defined task.

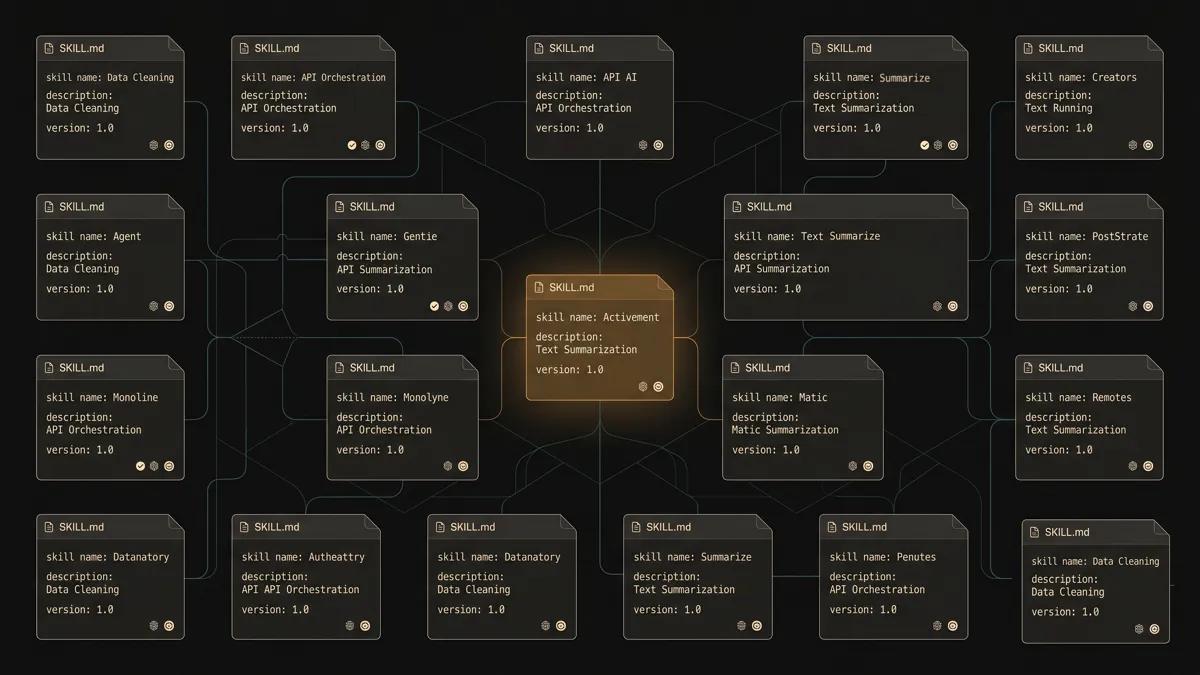

The Automation Switch Skills Hub is a public directory of 46 production skills organised across nine categories. Each skill follows the SKILL.md specification, a structured markdown format that any compatible AI coding assistant can read, interpret, and execute. The hub is the visible layer of an internal index we have been building, testing, and refining across hundreds of real publication and automation cycles.

This article explains why we built the index, how the skills are structured, what the current inventory covers, and where the hub is heading. If you work with AI coding assistants and want to move beyond one-off prompts, this is the reference document.


Why We Needed an Internal Index

The skills library started as a practical response to a recurring problem. We were building content, automations, and tooling with AI coding assistants, and we kept rewriting the same instructions. Every new session started from scratch. The assistant had the capability, but it lacked the context of what we had already figured out.

The One Agent One Task Problem

Most teams use AI coding assistants for isolated tasks: write this function, fix this bug, draft this paragraph. Each interaction is self-contained. The assistant completes the task, the session ends, and the knowledge of how that task was done disappears.

This works for simple, one-off requests. It breaks down when you have repeatable workflows with specific quality standards. A content publication pipeline, for example, involves research, drafting, editorial review, image processing, SEO metadata, and CMS publication. Each step has constraints. Each constraint was learned through trial and error. Without a persistent record of those constraints, every new session repeats the same trial and error.

The cost compounds. A team running 20 publication cycles per month that spends 15 minutes per cycle re-establishing context loses five hours monthly to repetition. That is five hours of an operator re-explaining standards that the system should already know.

What a Skills-Based Architecture Changes

A skill is a persistent instruction set. Once written, it encodes the constraints, steps, and quality gates for a specific task. The assistant reads the skill at the start of a session and operates within its boundaries from the first interaction.

This changes the economics of AI-assisted work. Instead of paying the context-setting cost on every session, you pay it once when you write the skill. Every subsequent session starts at full capability. The assistant knows the output format, the validation steps, the naming conventions, and the error-handling patterns because those are encoded in the skill file.

The shift is from "instruct the assistant each time" to "maintain a library of instructions the assistant reads automatically." The library becomes an organisational asset: it captures operational knowledge in a format that both humans and machines can use.

How We Learned to Structure Skills

The current skill format is the result of iteration. The first version was a single markdown file with loose instructions. The current version is a structured specification with required sections, metadata frontmatter, and cross-references to other skills.

From One File to a Structured Index

The first skill we wrote was a content publication checklist. It listed the steps an assistant should follow when publishing an article: validate metadata, check image slots, confirm SEO fields, publish to the CMS. It worked, but only for that one task.

As we added more skills, patterns emerged. Every skill needed a trigger condition (when should the assistant use this skill?), a set of constraints (what rules apply?), and a validation step (how does the assistant confirm the output is correct?). We formalised those patterns into the SKILL.md specification.

The specification defines required sections: description, trigger conditions, constraints, steps, validation, and references. It also defines optional sections for examples, edge cases, and cross-references to related skills. The result is a format that is both human-readable and machine-parseable.
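A required-section list like this lends itself to an automated check. The sketch below is illustrative only, and its matching rule is an assumption: a section counts as present if it appears either as a `## Heading` or as a `Key: value` line, because the article does not say how the specification maps section names onto the file.

```python
import re

# Section names taken from the paragraph above; the matching rule is an assumption.
REQUIRED_SECTIONS = ("description", "trigger", "constraints", "steps", "validation", "references")


def found_sections(skill_text: str) -> set[str]:
    """Collect lowercase names that appear as '## Heading' or 'Key: value' lines."""
    names: set[str] = set()
    for line in skill_text.splitlines():
        heading = re.match(r"^##\s+(.+?)\s*$", line)
        field = re.match(r"^([A-Za-z][A-Za-z ]*):\s*\S", line)
        if heading:
            names.add(heading.group(1).lower())
        elif field:
            names.add(field.group(1).strip().lower())
    return names


def missing_sections(skill_text: str) -> list[str]:
    """Return required sections that no heading or field name starts with."""
    present = found_sections(skill_text)
    return [name for name in REQUIRED_SECTIONS
            if not any(p.startswith(name) for p in present)]


# Hypothetical skill file used only to exercise the check.
sample = """# Skill: Demo
Description: A demo skill.
Trigger: When the user asks for a demo.

## Constraints
- Keep it short

## Steps
1. Do the thing

## Validation
- The thing is done
"""

print(missing_sections(sample))  # ['references']
```

A check of this kind could run before a skill is accepted into the index, so that incomplete files are caught before an assistant tries to use them.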

Skills That Call Other Skills

The most significant design decision was allowing skills to reference other skills. A content publication skill can reference an image processing skill, which references a quality validation skill. The assistant follows the chain automatically: it reads the publication skill, recognises the image processing reference, loads that skill, and applies both sets of constraints in sequence.

This is where the architecture creates compound value. A single skill is useful. A chain of skills that reference each other creates an automation pipeline that is greater than the sum of its parts. The article publication workflow, for example, chains five skills: brief generation, content drafting, image processing, SEO validation, and CMS publication. Each skill is independently useful. Together, they form a complete pipeline from idea to published article.
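The reference-following behaviour described above can be sketched as a small graph walk. This is a minimal illustration, not how any particular assistant implements it: the `references` mapping and the skill names in it are hypothetical, and the choice to skip a skill that has already been loaded (which also stops circular references) is an assumption.

```python
def resolve_chain(root: str, references: dict[str, list[str]]) -> list[str]:
    """Return skills in the order they are read, starting from `root`."""
    chain: list[str] = []

    def visit(name: str) -> None:
        if name in chain:  # already loaded; this also stops circular references
            return
        chain.append(name)
        for ref in references.get(name, []):
            visit(ref)

    visit(root)
    return chain


# Hypothetical reference graph mirroring the example in the text.
references = {
    "content-publication": ["image-processing"],
    "image-processing": ["quality-validation"],
}

print(resolve_chain("content-publication", references))
# ['content-publication', 'image-processing', 'quality-validation']
```

The walk reads the triggering skill first and then each skill it references, which matches the order described in the text. A dependencies-first ordering would be an equally reasonable design.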


The Anatomy of a Skill

Every skill in the index follows the same structure. This consistency is what makes the library machine-readable: an AI coding assistant can parse any skill file and extract the information it needs to execute the task.

How Skills Are Structured

Each skill lives in its own directory, and its SKILL.md file has a set of required sections. Here is the standard layout:

    .claude/skills/
      skill-name/
        SKILL.md      # Primary instruction file
        examples/     # Optional: worked examples
        templates/    # Optional: output templates
        tests/        # Optional: validation scripts

The SKILL.md file itself follows a structured format with YAML-style metadata at the top:

    # Skill: Article Publication Pipeline

    Description: End-to-end article publication from brief to live CMS entry.
    Trigger: When the user asks to publish an article or create a publication script.
    Category: Content and Editorial
    Version: 2.1.0
    Dependencies: image-processing, seo-validation, sanity-publication

    ## Constraints

    - All images must be processed to WebP format before upload
    - SEO metadata must include metaTitle, metaDescription, and structured FAQ
    - Body content must use Portable Text block format
    - Publication script must be idempotent (safe to re-run)

    ## Steps

    1. Validate the article brief against the content policy
    2. Process all images using the image-processing skill
    3. Build the Portable Text body with enrichment components
    4. Validate SEO fields using the seo-validation skill
    5. Publish to Sanity using createOrReplace
    6. Run post-publication verification query

    ## Validation

    - Sanity document exists with correct _id
    - bodySyncLocked is true
    - All image slots are populated
    - FAQ and articleSources arrays are populated

The structure serves two audiences. Humans read it as documentation for the workflow. AI coding assistants parse it as an executable specification. The trigger condition tells the assistant when to activate the skill. The constraints define the boundaries. The steps define the sequence. The validation section defines the acceptance criteria.
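To make the "machine-parseable" claim concrete, here is a minimal sketch of reading the metadata block at the top of the example. It assumes the header is a run of plain `Key: value` lines ending at the first `## ` heading, as in the sample above. A real SKILL.md parser may work differently, and `parse_skill_header` is a hypothetical helper name.

```python
def parse_skill_header(skill_text: str) -> dict[str, object]:
    """Read 'Key: value' lines that appear before the first '## ' section."""
    header: dict[str, object] = {}
    for line in skill_text.splitlines():
        if line.startswith("## "):
            break  # the metadata block ends where the first section begins
        if line.startswith("# Skill:"):
            header["name"] = line[len("# Skill:"):].strip()
        elif ":" in line:
            key, value = line.split(":", 1)
            header[key.strip().lower()] = value.strip()
    if "dependencies" in header:
        # Split the comma-separated dependency list into individual skill names.
        raw = str(header["dependencies"])
        header["dependencies"] = [d.strip() for d in raw.split(",") if d.strip()]
    return header


# Header lines copied from the example above.
sample = """# Skill: Article Publication Pipeline
Description: End-to-end article publication from brief to live CMS entry.
Trigger: When the user asks to publish an article or create a publication script.
Category: Content and Editorial
Version: 2.1.0
Dependencies: image-processing, seo-validation, sanity-publication

## Constraints
- Publication script must be idempotent (safe to re-run)
"""

header = parse_skill_header(sample)
print(header["name"])          # Article Publication Pipeline
print(header["dependencies"])  # ['image-processing', 'seo-validation', 'sanity-publication']
```

The parsed `dependencies` list is the piece a loader would need in order to follow references from one skill to the next.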

Distributing the Internal Index

The internal index uses a three-tier distribution model:

- Repository-local skills live in the .claude/skills/ directory of each project. These are project-specific and travel with the codebase.

- Organisation-level skills live in a shared directory that multiple projects reference. These encode cross-project standards like coding conventions, commit message formats, and review checklists.

- The public hub is the curated subset of the index that we publish on the Automation Switch website. These are the skills with the broadest applicability, cleaned of internal references and documented for external use.

This tiered approach means each skill lives at the appropriate scope. A project-specific deployment script stays in the project. A universal editorial standard gets shared across all content projects. A general-purpose skill that works for any team gets published to the hub.
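One way to picture the first two tiers is as an ordered search path. The sketch below is an assumption-heavy illustration: the article does not say which tier takes precedence when a skill exists in both, so letting the repository-local copy win is a design choice made here, and the shared directory path is hypothetical.

```python
from pathlib import Path
from typing import Optional


def find_skill(name: str, search_roots: list[Path]) -> Optional[Path]:
    """Return the first SKILL.md found for `name`, checking roots in the order given."""
    for root in search_roots:
        candidate = root / name / "SKILL.md"
        if candidate.is_file():
            return candidate
    return None


# Repository-local skills first, then a hypothetical organisation-level directory.
roots = [Path(".claude/skills"), Path("/opt/shared-skills")]
match = find_skill("coding-standards", roots)
print(match if match else "skill not found in any tier")
```

Putting the project directory first lets a project override an organisation-wide skill without editing the shared copy.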

The skills work across multiple AI coding assistants. We use them with Claude Code, Cursor, and Windsurf depending on the task. For a scored comparison of these tools and 24 others, see the AI Coding Assistants Directory.

The Internal Index: Current Inventory

The index currently contains 46 skills across nine categories. Each category groups skills by domain. Below are two representative categories from the full inventory, followed by a summary of the remaining seven.

Content and Editorial

This is one of the largest categories, covering the full content lifecycle from research through publication.

| # | Skill | Function | Status |
|---|---|---|---|
| 1 | deep-research | Multi-source research with citation tracking | Production |
| 2 | content-research-writer | Collaborative drafting with real-time feedback | Production |
| 3 | article-component-writer | Portable Text body construction for Sanity | Production |
| 4 | component-strategy | Enrichment component selection and placement | Production |
| 5 | image-brief-generator | Structured image briefs per content policy | Production |
| 6 | image-capture | HTML template to WebP conversion via Playwright | Production |
| 7 | seo-page-builder | SEO-optimised static pages with JSON-LD | Production |
| 8 | taxonomy-enforcer | Validates articles against site taxonomy | Production |
| 9 | sanity-article-publication-workflow | End-to-end Sanity publication pipeline | Production |

Governance and Quality

These skills enforce standards across code, content, and operational workflows.

| # | Skill | Function | Status |
|---|---|---|---|
| 1 | verification-standards | Honest reporting and evidence-based completion | Production |
| 2 | coding-standards | Universal standards for TypeScript, React, Node.js | Production |
| 3 | code-simplifier | Clarity and maintainability refactoring | Production |
| 4 | simplify | Reuse, quality, and efficiency review | Production |

Beyond Content: The Full Inventory

The remaining 34 skills span seven categories that cover the full development lifecycle. The largest concentrations are in design systems, backend architecture, and web scraping, each reflecting a domain where we found ourselves repeating the same patterns across engagements.

Web Scraping and Data (8 skills): The Firecrawl suite powers our research pipeline. Individual skills handle search, scrape, crawl, map, download, and agent-driven extraction. These are the most frequently chained skills in the index, typically triggered by the deep-research skill.

Design and Figma (8 skills): A complete design-to-code workflow. Includes reading from Figma, generating designs, building component libraries, creating design system rules, and translating designs into production code with 1:1 visual fidelity.

Backend and Architecture (9 skills): Covers API design, authentication patterns, Clean Architecture, Go concurrency, Python patterns and performance, and FastAPI templates. These skills encode the architectural standards we apply across client engagements.

Accessibility and Compliance (5 skills): WCAG compliance checking, colour contrast analysis, link purpose validation, and accessible refactoring. These run as quality gates before publication.

Frontend Development (5 skills): React composition patterns, Next.js performance optimisation from Vercel Engineering guidelines, React Native best practices, and advanced TypeScript types.

DevOps and Tooling (5 skills): MCP server construction, browser automation with Playwright, web app testing, and the meta-skill for creating new skills (skill-development).

AI and Agent (2 skills): The Claude API skill for building Anthropic SDK applications, and the AutomationSwitch MCP server skill for extending our own tool-calling infrastructure.

Skill Chaining in Practice

The article publication workflow demonstrates how skill chaining works in a real production environment. Publishing a single article on Automation Switch involves five skills working in sequence:

- The content-research-writer skill conducts research, structures the outline, and drafts the article body with citations and section-level feedback.

- The image-brief-generator skill produces structured image briefs that define each visual: dimensions, alt text, slot assignment, and brand guidelines.

- The image-capture skill converts HTML templates into production WebP images at the exact dimensions specified in the brief.

- The article-component-writer skill builds the Portable Text body with enrichment components (stat highlights, callout boxes, comparison tables, CTAs) placed according to the component-strategy skill.

- The sanity-article-publication-workflow skill uploads images to the Sanity CDN, constructs the full document with metadata and SEO fields, publishes via createOrReplace, and runs verification queries.

Each skill is independently documented and independently usable. The chain emerges from the references: the publication workflow skill lists the image-capture skill as a dependency, which in turn references the image-brief-generator. An operator can trigger the full chain or use any individual skill in isolation.

The total time from article brief to published, verified article using this chain is under 90 minutes. The same workflow done manually, including research, writing, image creation, metadata entry, and CMS configuration, typically takes six to eight hours.

The Economics of Reusable Skills

The cost argument for a skills library is straightforward. Writing a skill takes one to three hours. Using that skill saves 15 to 45 minutes per session. After a handful of uses, the skill has paid for itself. After dozens of uses, the time saved is many times the authoring cost.

Here is how the economics compare across approaches:

| Criteria | Ad-Hoc Prompting | Skills Library |
|---|---|---|
| Context setup per session | 10 to 20 minutes | 0 minutes (auto-loaded) |
| Output consistency | Variable, depends on prompt quality | Consistent, defined by specification |
| Knowledge retention | Session-scoped, lost on close | Permanent, version-controlled |
| Onboarding new team members | Oral tradition, shadowing required | Read the skill library |
| Error rate on repeatable tasks | Higher, varies by session | Lower, validated by skill constraints |
| Upfront investment | Zero | 1 to 3 hours per skill |
| Break-even point | Immediate (zero investment) | 3 to 5 uses per skill |
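The break-even row can be worked through with the figures quoted earlier in this section (one to three hours to write a skill, 15 to 45 minutes saved per session). The helper below is a simple sketch, and `break_even_uses` is a hypothetical name. It assumes every use saves the same amount of time and ignores ongoing maintenance of the skill.

```python
import math


def break_even_uses(authoring_hours: float, minutes_saved_per_use: float) -> int:
    """Smallest number of uses at which cumulative time saved covers the authoring time."""
    return math.ceil(authoring_hours * 60 / minutes_saved_per_use)


print(break_even_uses(2, 30))  # 4   (midpoints of the quoted ranges)
print(break_even_uses(1, 45))  # 2   (quick to write, large saving)
print(break_even_uses(3, 15))  # 12  (slow to write, small saving)
```

With mid-range inputs the result lands inside the table's 3 to 5 uses. At the extremes of the quoted ranges it runs from 2 to 12, so the break-even point for a given skill depends on how long it took to write and how much context it replaces.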

The less obvious benefit is organisational. A skills library is a living operations manual. When a team member leaves, their knowledge stays in the skill files. When a new member joins, they read the library instead of asking colleagues for undocumented procedures. The skills are both executable and documentary.

What Is Next for the Hub and the Index

The hub is a snapshot of the current index. The index itself is growing, and the mechanisms for how it grows are changing.

Growing the Internal Index Automatically

Every skill in the current index was authored by hand: written by a human, tested against real workflows, and refined through production use. That process produces high-quality skills, and it is also the bottleneck. Throughput depends on the number of people writing, which means the library grows linearly with team capacity.

The next phase changes that equation. We are building a pattern recognition layer into our governance portal that watches how agents and operators work. When the system identifies repeated behaviour, the same sequence of steps, the same tool chain, the same decision logic applied across multiple sessions, it flags the pattern as a candidate skill. A human reviews the candidate, validates the logic, and approves it for inclusion. The initial authoring step moves from manual to automated. The quality gate stays human.

This creates a feedback loop: skills encode knowledge for agents, agents use those skills to do work, the governance portal observes how that work happens, patterns emerge from the repetition, and those patterns become new skills. The library grows through deliberate engineering and through the natural rhythms of how we work.
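The article does not describe how the governance portal detects repeated behaviour. As one simple possibility, the sketch below counts fixed-length step sequences that recur across sessions and returns them as candidates for human review. The session logs, step names, and thresholds are all hypothetical.

```python
from collections import Counter


def candidate_patterns(sessions: list[list[str]], length: int = 3,
                       min_sessions: int = 2) -> list[tuple[str, ...]]:
    """Return step sequences of `length` that occur in at least `min_sessions` sessions."""
    counts = Counter()
    for steps in sessions:
        # Count each sequence once per session so one long session cannot dominate.
        windows = {tuple(steps[i:i + length]) for i in range(len(steps) - length + 1)}
        counts.update(windows)
    return sorted(seq for seq, n in counts.items() if n >= min_sessions)


# Hypothetical session logs: each inner list is the ordered steps of one session.
sessions = [
    ["validate-brief", "process-images", "validate-seo", "publish"],
    ["process-images", "validate-seo", "publish", "verify"],
    ["draft", "publish"],
]

print(candidate_patterns(sessions))
# [('process-images', 'validate-seo', 'publish')]
```

Anything this kind of detector flags would still pass through the human quality gate described above. The code only proposes candidates.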

Opening the Hub to the Community

The SKILL.md specification is open. Any team can write skills in the same format and structure them for the same agent platforms. The next step for the public hub is a contribution workflow: a submission format, an editorial review process, and quality validation that allows external skills to join the directory with attribution.

A practitioner who has built a production-quality email sequencing skill, a CRM enrichment skill, or a reporting automation skill should be able to contribute it and get credit. The long-term vision is a marketplace where practitioners publish, discover, and build on each other's skills. The same economics that make internal skill reuse powerful apply at the ecosystem level: a skill built once can serve hundreds of agents with zero incremental effort from the creator.

The Automation Switch hub becomes the curated index. The community becomes the source of breadth. Both grow together.

For the broader context on the SKILL.md format, where it came from, how the ecosystem is evolving, and how different agent platforms implement it, see SKILL.md Files: The Agent Skills Directory.
